Claude for SEO vs AEO: Why the Same Tool Produces Opposite Results

Claude for SEO vs AEO produces opposite results. Prompt stacks generate generic output. Real strategy gets brands cited by AI search. Here's what separates the two.

TL;DR

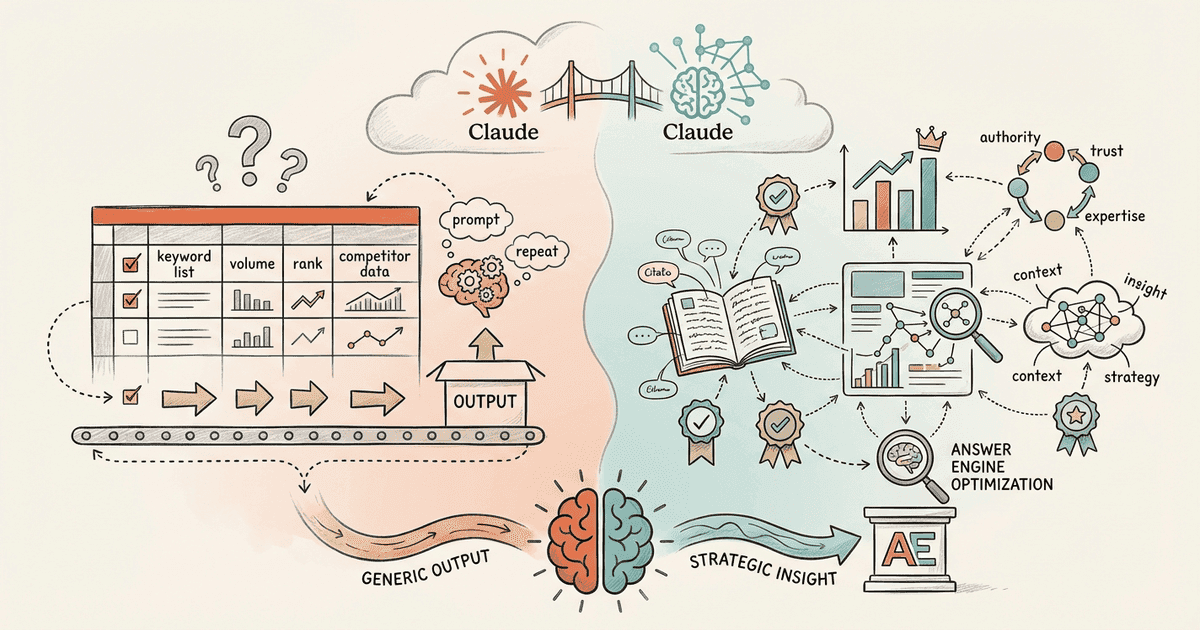

Claude for SEO and Claude for AEO produce opposite results because the input determines the output. Prompt stacks generate keyword spreadsheets and Google Business Profile (GBP) audits that serve traditional search optimization. Strategic frameworks loaded with original data and competitive intelligence produce content that ChatGPT, Perplexity, and AI Overviews actually cite. Same tool, different strategy, wildly different outcomes.

Claude prompt stacks went viral promising $10K agency output for free. Thousands of businesses adopted them. The result? More generic content that answer engines will never cite.

The same AI tool that generates keyword research reports can also build topical authority that ChatGPT and Perplexity actively reference. The difference isn't the tool. It's the human strategy behind the prompt. One approach works for traditional rankings. The other works for AI search visibility.

This article breaks down why Claude for SEO vs AEO produces fundamentally different results, what data shows AI engines actually cite, and how to use Claude for content that gets recommended.

What Do Claude SEO Prompt Stacks Actually Produce?

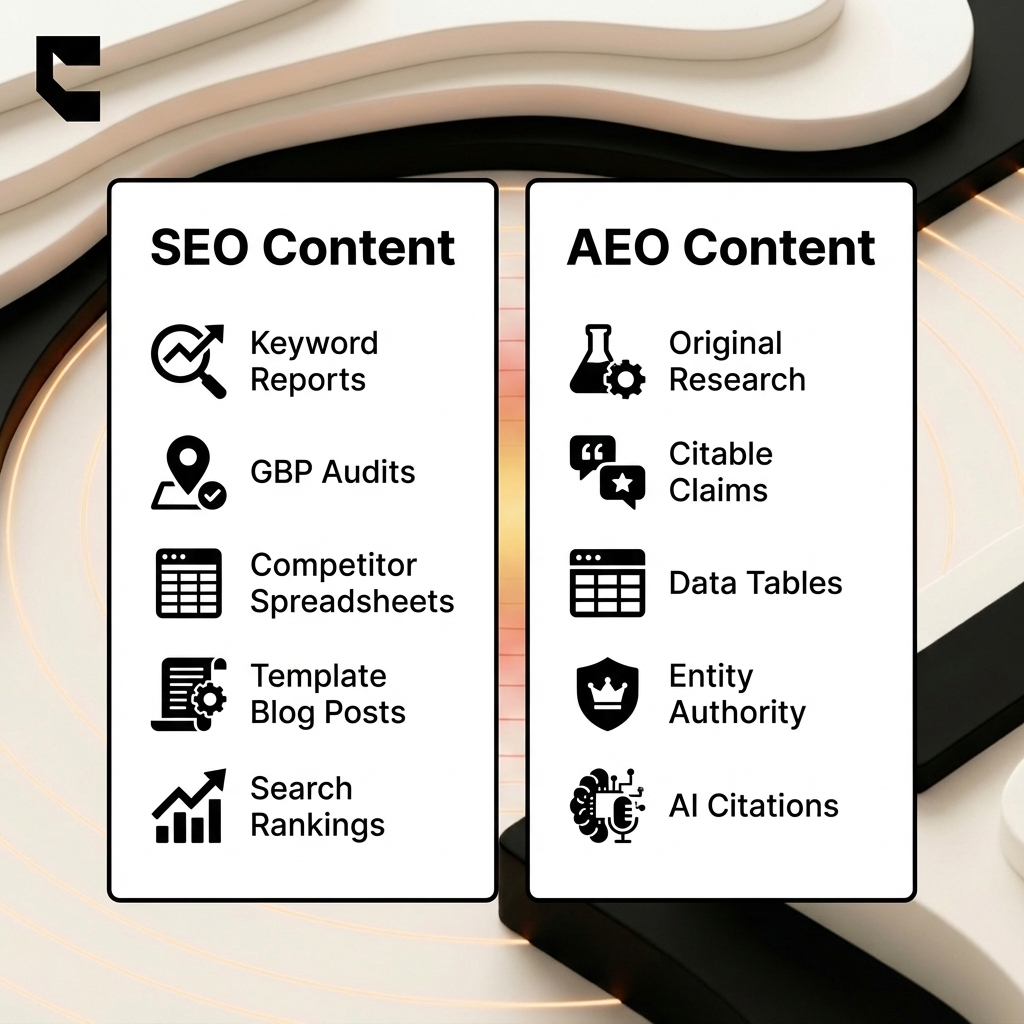

Claude SEO prompt stacks generate structured outputs like keyword spreadsheets, GBP category audits, competitor analyses, and content checklists. These outputs automate traditional SEO workflows. They do not build AI search visibility.

The viral stacks cover seven distinct SEO categories: competitive analysis, technical audits, keyword research, content optimization, local SEO, backlinks, and reporting. You can build 30-day Google Business Profile calendars, auto-generate schema JSON-LD, and map competitor keyword structures across hundreds of pages.

The speed is real

Claude Cowork completes a full competitive analysis in 12 minutes. That same task takes traditional agencies three to four hours. The tool delivers genuine time savings. The spreadsheets look professional. The data is organized and actionable for traditional search optimization.

But all of these tasks optimize for Google's legacy crawl-index-rank model. Speed can't fix a flawed target. A perfectly executed keyword spreadsheet means nothing to Perplexity. So what happens when AI search engines evaluate this content?

Why Does AI-Generated SEO Content Fail in AI Search?

AI search engines cite content that demonstrates verifiable expertise and original data, not content that reorganizes information already available from dozens of other sources.

ChatGPT sources 7.8% of its citations from Wikipedia. It prizes encyclopedic, factual, reference-grade content. Wikipedia earns those citations through structured attribution and comprehensive, verifiable detail. Not through keyword optimization.

The data gap is the real problem

Content with original statistics and authoritative citations earns 30-40% higher AI visibility. Prompt stack outputs reshape existing competitor data. They shuffle paragraphs from the top ten search results. They rarely introduce original proof points or proprietary research. AI platforms have little reason to cite content that adds nothing new.

Here's what makes this worse: 44% of ChatGPT citations come from the first 30% of a page, and cited text averages 20.6% proper nouns. AI favors "anchored" content packed with specific brands, tools, and standards. Prompt stacks produce generic frameworks. AI cites specificity.
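One way to sanity-check a draft against those two numbers is a rough script. The sketch below is a heuristic, not how any AI engine scores pages: it assumes a "proper noun" is any capitalized word that does not start a sentence, and the helper names `opening_slice` and `proper_noun_share` are hypothetical.

```python
import re


def opening_slice(text: str, fraction: float = 0.3) -> str:
    """Return roughly the first `fraction` of the words on a page."""
    words = text.split()
    if not words:
        return ""
    cutoff = max(1, round(len(words) * fraction))
    return " ".join(words[:cutoff])


def proper_noun_share(text: str) -> float:
    """Approximate share of words that look like proper nouns.

    Assumption: a word counts when it starts with an uppercase letter and
    is not the first word of its sentence. This is a crude stand-in for a
    real part-of-speech tagger.
    """
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    total = 0
    proper = 0
    for sentence in sentences:
        words = re.findall(r"[A-Za-z][A-Za-z0-9'-]*", sentence)
        total += len(words)
        # Skip the first word: it is capitalized whatever its type.
        proper += sum(1 for word in words[1:] if word[0].isupper())
    return proper / total if total else 0.0


page = (
    "We compared ChatGPT and Perplexity. Both cite Wikipedia often. "
    "Generic advice rarely earns a mention."
)
print(f"whole page:  {proper_noun_share(page):.1%}")
print(f"opening 30%: {proper_noun_share(opening_slice(page)):.1%}")
```

A low share in the opening slice suggests the key brands, tools, and standards appear too late on the page to help.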

Reference-grade content gets cited at 3-5x the rate of standard content. That means chunked sections, quotable facts, correct schema markup, and every claim linked to a verifiable source.

| Feature | SEO Content | AEO Content |
|---|---|---|
| Purpose | Rank in traditional search results | Earn citations in AI engine answers |
| Data Type | Reorganized competitor information | Original data, statistics, and surveys |
| Structure | Keyword-focused headings and narrative | Skimmable capsules, tables, clear claims |
| Voice | Conversational, engaging, often generic | Objective, factual, highly specific |
| Update Cycle | Periodic refreshes for ranking decay | Continuous adjustments for citation volatility |
| Success Metric | Organic traffic and SERP positions | Mention frequency and AI citation rate |

The content that gets cited isn't just better. It's structurally different. Which raises the real question.

What Makes AEO Content Structurally Different from SEO Content?

AEO content requires structured expertise with citable claims, entity authority signals, and original data that AI engines can verify and attribute. Not keyword-optimized text targeting crawlers.

Answer engine optimization demands explicit, skimmable information: clear definitions upfront, supporting context right after, and schema validation across all data points. Successful AEO content features data tables, proper noun density, and direct factual answers in every section.
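As an illustration of the schema side, here is a minimal sketch that turns question-and-answer pairs into FAQPage JSON-LD using the schema.org vocabulary. The function name `faq_jsonld` is hypothetical, and the output should still be run through a structured data validator before publishing.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    document = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(document, indent=2)


markup = faq_jsonld(
    [
        (
            "Are SEO prompt stacks useless?",
            "No. They automate legitimate SEO tasks like keyword research "
            "and technical audits.",
        )
    ]
)
# Embed the result in the page inside a script tag of type application/ld+json.
print(markup)
```

Generating the markup from the same source as the visible FAQ keeps the two from drifting apart.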

Query complexity has outgrown keyword matching

Shoppers no longer search "best mountain bike." They ask for a "lightweight carbon-fiber mountain bike under 25 lbs with hydraulic disc brakes." Keyword-stuffed content fails these queries entirely. Building E-E-A-T for AI search demands depth that no template can fake.

AEO tracks different success metrics altogether: mention frequency and sentiment accuracy rather than traditional rankings. If you optimize for organic traffic alone, even perfect execution misses the AI citation target.
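Mention frequency and citation rate can be computed from a log of sampled AI answers. The sketch below assumes you have already collected answers per engine and query. The `Answer` record, the `aeo_metrics` function, and the brand and domain names are all hypothetical, and sentiment accuracy is left out because it needs human or model review.

```python
from dataclasses import dataclass, field


@dataclass
class Answer:
    """One sampled AI answer (hypothetical record layout)."""
    engine: str
    query: str
    text: str
    cited_domains: list[str] = field(default_factory=list)


def aeo_metrics(answers: list[Answer], brand: str, domain: str) -> dict:
    """Per-engine share of answers that mention the brand or cite the domain."""
    counts: dict[str, dict[str, int]] = {}
    for answer in answers:
        stats = counts.setdefault(
            answer.engine, {"answers": 0, "mentions": 0, "citations": 0}
        )
        stats["answers"] += 1
        # Case-insensitive substring match; booleans add as 0 or 1.
        stats["mentions"] += brand.lower() in answer.text.lower()
        stats["citations"] += domain in answer.cited_domains
    return {
        engine: {
            "mention_rate": s["mentions"] / s["answers"],
            "citation_rate": s["citations"] / s["answers"],
        }
        for engine, s in counts.items()
    }


log = [
    Answer("ChatGPT", "best crm", "Acme and Globex are popular.", ["acme.example"]),
    Answer("ChatGPT", "crm pricing", "Globex starts at $10.", []),
    Answer("Perplexity", "best crm", "Many teams use acme.", ["globex.example"]),
]
print(aeo_metrics(log, brand="Acme", domain="acme.example"))
```

Tracking the two rates separately matters because a brand can be mentioned without being cited as a source, and the reverse.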

The goal is to get cited by Perplexity and similar platforms. Understanding the structure is step one. The harder question is where the inputs come from.

How Do You Use Claude for AEO Instead of SEO?

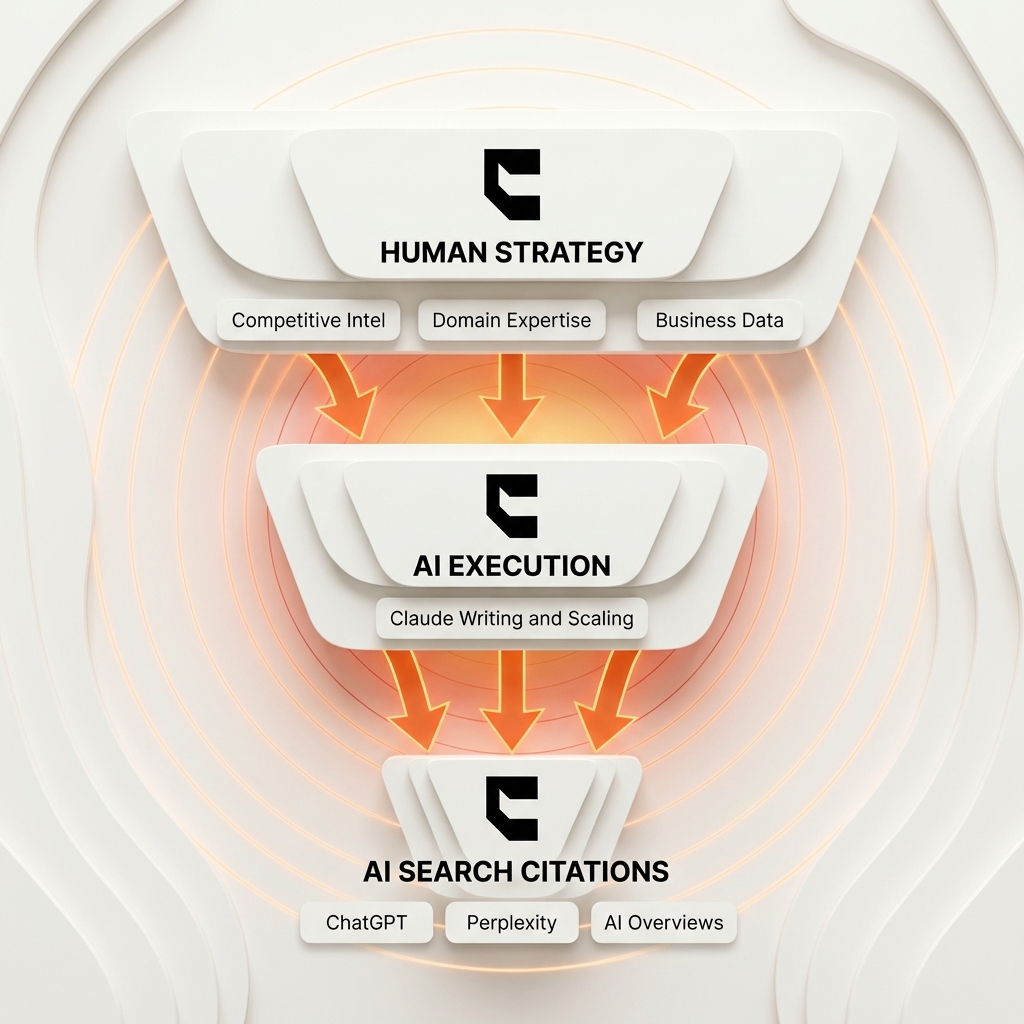

The difference between Claude for SEO and Claude for AEO is the input. Prompt templates produce generic outputs. Strategic frameworks with real business data produce citable expertise.

Standard SEO templates ask Claude to "generate a keyword research spreadsheet for [niche]" or "audit this GBP listing." These inputs yield predictable, surface-level outputs. The model regurgitates aggregate data from its training set.

AEO inputs require original fuel

AEO frameworks look different from the start. We load them with competitive intelligence gathered from live market analysis. We feed in proprietary survey results and raw customer conversation transcripts from sales calls. We add domain expertise directly from subject matter experts who've spent years in the space.

The technology stays identical. The strategy layer creates the outcome. We consistently see a 3-5x citation advantage when we pair Claude with a genuine generative engine optimization (GEO) strategy instead of prompt templates alone.

The financial case keeps growing. Traffic from AI sources surged 4,700% year-over-year according to Adobe Analytics. Answer engines represent the next decade of digital discovery. This is why the "Claude replaces agencies" narrative misses the point.

Why Can't Prompt Stacks Replace Strategy?

Prompt stacks automate execution without strategy. AI search engines cite content built on real expertise, competitive intelligence, and original data that no template can generate.

Even well-written content struggles without proper technical foundations. Over 73% of websites have crawlability issues that block AI bots from reading their pages entirely. Distribution requires strategic thinking beyond content generation.
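One crawlability issue that is easy to check is a robots.txt rule that shuts out AI crawlers. The sketch below uses Python's standard `urllib.robotparser`. The user-agent tokens in `AI_BOTS` are assumptions to verify against each vendor's current documentation, `blocked_ai_bots` is a hypothetical helper, and robots.txt is only one of several ways a page can be unreadable to bots.

```python
from urllib.robotparser import RobotFileParser

# Assumed crawler tokens; confirm against each vendor's documentation.
AI_BOTS = ["GPTBot", "PerplexityBot", "ClaudeBot", "Google-Extended"]


def blocked_ai_bots(robots_txt: str, url: str) -> list[str]:
    """Return the AI crawlers that this robots.txt forbids from fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_BOTS if not parser.can_fetch(bot, url)]


sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_ai_bots(sample_robots, "https://example.com/pricing"))
```

For a live site, fetch the real robots.txt first and pass its text in. The parsing step stays the same.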

Citation volatility demands ongoing work

Between 40% and 60% of AI citations change every month. Maintaining visibility requires constant strategic adjustments and fresh data inputs. Prompt stacks are one-shot exercises. Real authority demands continuous investment.
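To track this on your own queries, compare the sources cited in one month with those cited the next. The sketch below assumes one possible definition of "change": the share of last month's cited URLs that no longer appear this month. The function name `citation_churn` and the URLs are hypothetical.

```python
def citation_churn(previous: set[str], current: set[str]) -> float:
    """Share of last month's cited URLs that are missing this month."""
    if not previous:
        return 0.0
    return len(previous - current) / len(previous)


last_month = {
    "example.com/guide",
    "example.com/pricing",
    "example.org/review",
    "example.net/benchmark",
    "example.com/faq",
}
this_month = {
    "example.com/guide",
    "example.com/pricing",
    "example.org/review",
    "example.net/new-study",
    "example.org/comparison",
}

print(f"monthly churn: {citation_churn(last_month, this_month):.0%}")
```

Running this per engine and per query group shows where visibility is slipping and which pages need fresh data first.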

The winning formula pairs human strategy (competitive analysis, brand positioning, domain expertise) with AI execution for scale and speed. We built our model at Cintra around this exact approach: dedicated human strategists set direction while AI agents handle volume.

We manage the continuous AI visibility playbook for our partners. We map domain expertise. We build strategic frameworks from real business data. Claude scales the output.

Frequently Asked Questions About Claude for SEO vs AEO

Common questions about using Claude for search optimization versus answer engine optimization.

Can Claude write content that gets cited by ChatGPT?

Yes, but only when the content is built on original data and strategic frameworks. Prompt templates that reformat existing information won't earn citations.

Are SEO prompt stacks useless?

No. They automate legitimate SEO tasks like keyword research and technical audits. They just don't produce content that AI search engines cite.

What's the difference between GEO and AEO?

GEO (Generative Engine Optimization) and AEO (Answer Engine Optimization) are closely related. Both optimize content for AI search citation rather than traditional search rankings.

How do you measure AEO success vs SEO success?

SEO measures rankings and organic traffic. AEO measures mention frequency, citation rate, and sentiment accuracy across ChatGPT, Perplexity, and AI Overviews.

Does AI-optimized content still rank on Google?

Yes. AEO content with structured data, expertise signals, and original research typically ranks well on Google too. The quality signals overlap significantly.

Conclusion

The shift from keyword search to answer engines changes content strategy at the foundation level. The tools matter far less than the original data you feed them.

- Claude works for both SEO and AEO. The difference in output quality depends entirely on inputs and strategic direction.

- AI search engines cite expertise, original data, and structured authority. They ignore reformatted competitor content from templates.

- Prompt stacks automate execution. Strategy determines whether that execution produces anything worth citing.

- The brands winning AI search pair human strategy with AI execution at scale.

Audit one piece of content you recently created with AI. Does it contain original data? Citable claims? Structured expertise? If not, that's your starting gap. You've automated the execution. Now you need the strategy.
