Video Content for AI Visibility: The Data-Driven Playbook

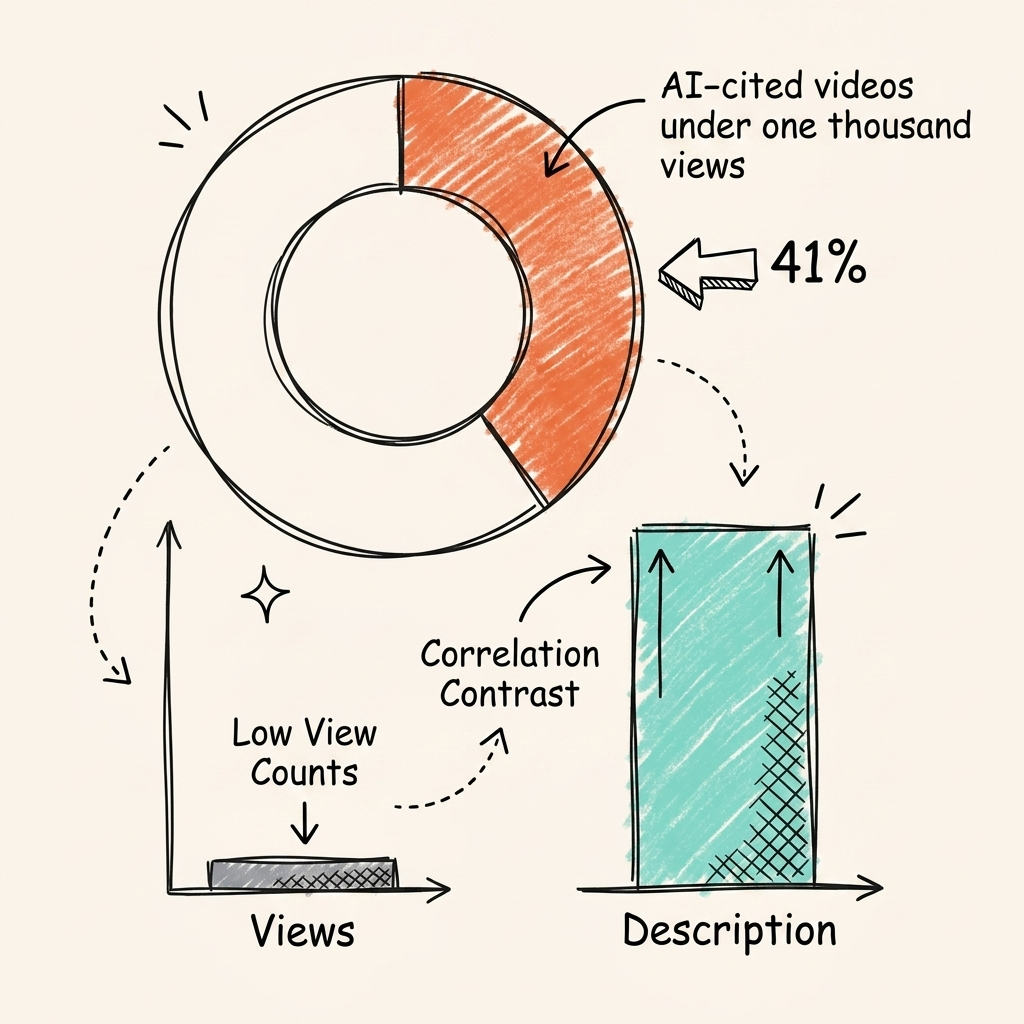

41% of AI-cited videos have fewer than 1,000 views. Learn why video content for AI visibility depends on structure – not popularity – and how to optimize for citations across every AI platform.

TL;DR

Video content for AI visibility depends entirely on structure, not popularity. YouTube is the #1 cited domain in AI search, with 29.5% of all Google AI Overview citations. But the videos getting cited aren't the popular ones. 41% of AI-cited videos have fewer than 1,000 views (r = -0.03 between views and citations). What actually drives citations? Transcripts, chapter markers, descriptions, and schema markup. This playbook covers the data, the platform-by-platform breakdown, and seven structural tactics for optimizing video content for AI visibility.

41% of videos cited by AI engines have fewer than 1,000 views. The correlation between a video's popularity and its chance of being cited? Essentially zero. OtterlyAI's citation study puts the exact number at r = -0.03.

Most brands still optimize video for views. They chase subscriber counts. They obsess over audience retention graphs. Those metrics don't move the needle on AI citations. Meanwhile, YouTube now claims 29.5% of all Google AI Overview citations, making it the single most-cited domain across the entire internet.

We've seen this pattern play out across every channel. This playbook covers why AI engines prefer YouTube, how each major platform reads video content differently, and seven structural optimization tactics that earn citations regardless of channel size.

Why Is YouTube the Most-Cited Domain in AI Search?

YouTube accounts for 29.5% of all Google AI Overview citations and is cited 200 times more than any other video platform by AI search engines.

This isn't a niche opportunity. BrightEdge research confirms YouTube commands a 29.5% citation share within AI Overviews. It outperforms Wikipedia, Reddit, and every major publisher. You can't achieve real AI visibility without addressing YouTube.

The growth curve is accelerating. YouTube's citation share jumped from 18.9% to 39.2% between August and December 2025. This signals a structural shift in how Google serves answers, and it's leaning heavily on video to satisfy queries.

Search intent has changed too. People don't just ask AI factual questions anymore. They ask how to do things. OtterlyAI data shows citations for how-to videos jumped 651% in a single year. AI engines now map instructional prompts directly to video content.

YouTube dominates because it's a structured repository of instructional data. But that raises a question: how do AI engines, which can't actually watch video, decide which videos to cite?

How Do AI Engines Actually Read Video Content?

AI engines don't watch video. They read transcripts, descriptions, and schema markup, then cite videos whose text metadata directly answers the user's question.

Large language models process text tokens, not raw video pixels. To understand a video, AI engines extract its text-based metadata: the transcript, the description, the chapter markers. This discrete tokenization means AI evaluates video almost exactly like it evaluates a blog post.

That's what explains the structure-beats-popularity phenomenon. 41% of all AI-cited videos have fewer than 1,000 views. The median cited channel holds fewer than 41 published videos. A video with 500 views and a well-structured transcript will outperform a video with two million views and zero metadata.

The OtterlyAI study makes this concrete with correlation data:

- Description length vs. citations: r = 0.31, the strongest positive signal

- View count vs. citations: r = -0.03, statistically irrelevant

- Subscriber count vs. citations: r = -0.03, equally irrelevant

What matters: transcript quality, description depth, structured metadata. What doesn't: production value, view count, subscriber count. We build answer engine optimization strategies around exactly this reality. You win citations by giving AI engines well-formatted text tokens, not polished visuals.
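To make the r values above easier to read, here is a minimal sketch of how a Pearson correlation is computed. The function name `pearson_r` and the sample numbers are invented for illustration; they are not the OtterlyAI dataset, and the study's own method may differ.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length with at least 2 items")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance and variances (unnormalised; the 1/n factors cancel out).
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one variable is constant")
    return cov / sqrt(var_x * var_y)


# Hypothetical per-video numbers, made up for this example only.
description_words = [40, 120, 310, 450, 80, 600]
citations = [0, 1, 3, 2, 1, 4]

print(round(pearson_r(description_words, citations), 2))
```

A value near +1 or -1 means the two columns move together closely; a value near 0, like the -0.03 reported for views, means knowing one tells you almost nothing about the other.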

Each AI platform processes this metadata differently. Here's how the four major platforms handle YouTube content.

How Does Each AI Platform Cite YouTube Differently?

Google AI Overviews, Perplexity, ChatGPT, and Gemini each process YouTube content differently, from transcript extraction to timestamp linking to native multimodal analysis.

You can't treat all AI search engines as a single system. Each platform uses different parsing mechanics.

| Platform | YouTube Citation Share | How It Uses Video | Key Signal |
|---|---|---|---|
| Google AI Overviews | 29.5% | Transcripts + chapter markers | Structured chapters map to search queries |
| Perplexity | 38.7% of YouTube AI citations | Timestamp-linked citations | Deep transcript parsing with time references |
| ChatGPT | 0.2–4.4% (growing 100% week-over-week) | Transcript-dependent | Rapidly expanding from low base |
| Gemini | Not stated | Native multimodal (about 300 tokens per second of video) | Can process audio and visual natively |

Perplexity leads the field in YouTube integration. It drives 38.7% of YouTube's AI-generated referral traffic, the single largest driver of video citations. If you want to get cited by Perplexity, you need to format your videos for its parsing logic. It favors timestamp-linked citations and deep transcript parsing.

ChatGPT currently holds a smaller share. But its video citation rate is growing 100% week-over-week. OpenAI is expanding its media parsing capabilities fast. Early movers will capture significant share as ChatGPT integrates video more deeply.

Google AI Overviews prioritize chapter markers. They look for structural divisions within the video that map directly to the user's prompt.

The Video AEO Playbook: 7 Tactics That Drive AI Citations

Video AEO requires optimizing seven structural elements: transcripts, chapters, descriptions, schema, question-format titles, long-form format, and pacing.

We run these exact tactics for our clients. Each step transforms a standard video file into a citable data object.

| Optimization Tactic | Implementation Target | Primary AI Benefit |
|---|---|---|
| Transcript Quality | Clean, jargon-free manual transcripts | Provides accurate text tokens for LLM parsing |
| Chapter Markers | 5–10 question-based timestamps | Maps video segments to specific user queries |
| Expanded Descriptions | 300+ word summaries with takeaways | Offers dense metadata layer (r = 0.31 correlation) |
| VideoObject Schema | Full structured data on page embeds | Defines video contents explicitly for crawlers |
| Question Titles | Natural language "How to" formats | Matches conversational prompts users give AI |
| Long-Form Duration | 10–20 minute total run time | Hits the 32.1% citation sweet spot |
| Controlled Pacing | Deliberate speech and clear visuals | Increases clarity for multimodal analysis |

Transcript quality over production value

AI reads the transcript, not the video. It evaluates your topical authority based entirely on those text tokens. Auto-captions routinely fail: they misspell industry jargon and miss critical context. Upload a clean transcript instead. Clear speech and natural pacing ensure the text output is readable. You don't need a studio budget. You need clear content that translates well into text.
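One way to start cleaning an auto-caption export is a glossary pass that swaps known mis-hearings for the intended term. The sketch below assumes you maintain your own glossary; the entries in `GLOSSARY` and the function name `fix_jargon` are hypothetical, and a human read-through is still needed afterwards.

```python
import re

# Hypothetical glossary: auto-caption mis-hearings mapped to the intended term.
GLOSSARY = {
    "schema mark up": "schema markup",
    "video object": "VideoObject",
    "a e o": "AEO",
}


def fix_jargon(transcript, glossary=GLOSSARY):
    """Replace known mis-transcribed phrases, matching whole words only."""
    # Handle longer phrases first so a short entry cannot break up a long one.
    for wrong in sorted(glossary, key=len, reverse=True):
        right = glossary[wrong]
        pattern = re.compile(r"\b" + re.escape(wrong) + r"\b", re.IGNORECASE)
        # A function replacement keeps any backslashes in `right` literal.
        transcript = pattern.sub(lambda _match: right, transcript)
    return transcript


print(fix_jargon("Add video object schema mark up for a e o."))
```

The glossary only catches errors you already know about, so treat it as a first pass before a manual review rather than a replacement for one.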

Chapter markers as H2 tags

Chapters are the video equivalent of H2 headings. 78% of all cited videos use timestamp markers. AI engines use these markers to understand structure, then jump directly to the relevant chapter to extract an answer. Start with 0:00 and use descriptive, question-based titles for every subsequent chapter.
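A small helper can turn a chapter plan into the timestamp block you paste into the description. This is a sketch: the function names are ours, and the checks (first chapter at 0:00, at least three chapters, each at least 10 seconds long) reflect YouTube's published chapter requirements as we understand them, so re-check the current help page before relying on them.

```python
def format_timestamp(seconds):
    """Format whole seconds as M:SS, or H:MM:SS for videos over an hour."""
    hours, remainder = divmod(seconds, 3600)
    minutes, secs = divmod(remainder, 60)
    if hours:
        return f"{hours}:{minutes:02d}:{secs:02d}"
    return f"{minutes}:{secs:02d}"


def build_chapter_block(chapters):
    """Build description lines from a list of (start_seconds, title) pairs."""
    if len(chapters) < 3:
        raise ValueError("expected at least three chapters")
    if chapters[0][0] != 0:
        raise ValueError("the first chapter must start at 0:00")
    # Consecutive starts must be ascending and at least 10 seconds apart.
    for (start, _), (next_start, _) in zip(chapters, chapters[1:]):
        if next_start - start < 10:
            raise ValueError("chapters must be in order and at least 10 seconds long")
    return "\n".join(f"{format_timestamp(start)} {title}" for start, title in chapters)


# Hypothetical chapter plan using question-based titles.
plan = [
    (0, "Intro"),
    (95, "What is video AEO?"),
    (240, "How do chapter markers work?"),
]
print(build_chapter_block(plan))
```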

Description as metadata layer

Your video description functions as a critical metadata layer. Description length holds the strongest positive correlation with AI citations at r = 0.31. A two-sentence summary won't cut it. Write descriptions exceeding 300 words: summarize key answers, include a bulleted list of takeaways, and provide detailed context that surrounds the video link with dense text tokens.
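If you are auditing a back catalog, a quick check like the one below can flag descriptions that fall short of the 300-word target or lack a takeaway list. It is a rough sketch: `audit_description` is a hypothetical helper, word counting is a simple whitespace split, and bullets are detected only by a leading `-`, `*`, or `•`.

```python
def audit_description(text, min_words=300):
    """Report word count and bullet usage for a video description."""
    words = text.split()
    bullet_lines = [
        line for line in text.splitlines()
        if line.lstrip().startswith(("-", "*", "•"))
    ]
    return {
        "word_count": len(words),
        "meets_length": len(words) >= min_words,
        "bullet_count": len(bullet_lines),
    }


# A two-sentence description fails the length check.
print(audit_description("Quick overview of video AEO. Watch to learn more."))
```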

VideoObject schema markup

You need to tell AI crawlers explicitly what your video covers. VideoObject schema defines the video's properties (name, description, upload date, and transcript) as structured data embedded wherever the video lives on your site. We mandate schema markup for AI across all client assets for exactly this reason.
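As an illustration, the sketch below generates a JSON-LD `VideoObject` with optional `Clip` entries for chapters, using schema.org property names. The helper names, the example URLs, and `VIDEO_ID` are placeholders we made up; check the schema.org and search engine documentation for which properties are required in your case.

```python
import json


def build_video_object(name, description, upload_date, thumbnail_url,
                       embed_url, duration, transcript, watch_url, chapters=()):
    """Return a schema.org VideoObject as a plain dict.

    chapters is an iterable of (title, start_seconds, end_seconds) tuples.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "description": description,
        "uploadDate": upload_date,    # ISO 8601 date or datetime
        "thumbnailUrl": thumbnail_url,
        "embedUrl": embed_url,
        "duration": duration,         # ISO 8601 duration, e.g. PT15M30S
        "transcript": transcript,
    }
    if chapters:
        # Each chapter becomes a Clip that deep-links to its start time.
        data["hasPart"] = [
            {
                "@type": "Clip",
                "name": title,
                "startOffset": start,
                "endOffset": end,
                "url": f"{watch_url}&t={start}s",
            }
            for title, start, end in chapters
        ]
    return data


def to_script_tag(data):
    """Serialize the dict into a JSON-LD script tag for the page."""
    # Escape "</" so the payload cannot close the script element early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


video = build_video_object(
    name="How Do Chapter Markers Work?",
    description="A walkthrough of chapter markers for video AEO.",
    upload_date="2025-01-15",
    thumbnail_url="https://example.com/thumb.jpg",
    embed_url="https://www.youtube.com/embed/VIDEO_ID",
    duration="PT15M30S",
    transcript="Full cleaned transcript text goes here.",
    watch_url="https://www.youtube.com/watch?v=VIDEO_ID",
    chapters=[("Intro", 0, 95), ("What is video AEO?", 95, 240)],
)
print(to_script_tag(video))
```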

Question-format titles matching AI prompts

Your titles should mirror how users actually talk to AI. Traditional SEO titles stack keywords. AI prompts use natural language: "How do I fix X" or "What's the difference between Y and Z." Match this behavior in your titles. The direct alignment significantly increases citation probability.
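A crude first filter for a title audit is to check whether a title opens with a question word or ends with a question mark. The heuristic below is our own simplification (the opener list and the name `looks_like_prompt` are assumptions), so use it to shortlist titles for review, not to score them.

```python
# Assumed list of openers; extend it for your own niche and language.
QUESTION_OPENERS = (
    "how", "what", "why", "when", "where", "which", "who",
    "can", "do", "does", "is", "are", "should",
)


def looks_like_prompt(title):
    """Return True if the title reads like a natural-language question."""
    stripped = title.strip()
    words = stripped.lower().split()
    if not words:
        return False
    return words[0] in QUESTION_OPENERS or stripped.endswith("?")


for title in ("How Do I Fix a Leaky Faucet?", "Leaky Faucet Repair | Plumbing Tips | DIY"):
    print(title, "->", looks_like_prompt(title))
```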

Long-form format (10–20 minutes)

Short content lacks the depth required for complex answers. 32.1% of all AI citations go to videos in the 10-to-20-minute range. A 15-minute video provides enough runtime for detailed chapter divisions and a comprehensive transcript. A two-minute video rarely contains enough text tokens to establish topical authority.

Slow pacing for AI frame sampling

Emerging multimodal models analyze visual frames alongside audio. Slower pacing gives AI more tokens per concept. Rapid cuts and fast talking reduce parsing clarity. Deliberate pacing aligns audio tokens with visual tokens, increasing overall extraction quality. This is still an emerging practice, but the early data supports it.

These tactics work regardless of channel size, which brings us to the most counterintuitive finding in the data.

Can Small YouTube Channels Get Cited by AI?

Yes. 41% of AI-cited videos have fewer than 1,000 views, and the median cited channel has published fewer than 41 videos.

The data challenges the biggest assumption in video marketing. You don't need a large audience to win AI citations. 41% of cited videos sit below the 1,000-view mark. The median cited channel operates with fewer than 41 total videos. Subscriber count offers zero advantage: the correlation is r = -0.03.

Small channels win through niche expertise. They focus on specific technical problems. They provide direct, clear answers. Most importantly, they structure their metadata correctly. AI engines evaluate topical authority in text content based on relevance and structure. The exact same principle applies to video.

A channel with 50 subscribers and properly formatted chapter markers will outperform a channel with 5 million subscribers and zero metadata. The AI engine looks for the most direct answer to the user's prompt. It ignores vanity metrics entirely.

The biggest opportunity? Combining video with written content to earn citations across multiple platforms at once.

How Do You Build a Compound Video Citation Strategy?

Pair each YouTube video with a transcript-based blog post, schema markup linking both, and hub-and-spoke distribution to earn citations across four AI platforms simultaneously.

We use a compound approach. One piece of video content powers multiple citation-earning assets. Pair every YouTube video with a blog post built from its transcript. The video earns citations natively on YouTube. The blog post earns web-based citations. Both assets reinforce your topical authority on the same subject.

Bridge them with schema markup. The VideoObject schema embeds the YouTube video as a structured data source on your blog post. The AI engine crawls the post, reads the optimized text, and registers the video as supporting evidence.

This hub-and-spoke distribution model maximizes coverage. The central video supports the blog post, short clips, and transcript snippets. Each touchpoint operates independently to build AI visibility. Track the outcomes. We rely on strict protocols to measure AI visibility across platforms. Monitor video citations per platform and share of voice changes after optimization.

Frequently Asked Questions About Video Content for AI Visibility

These are the most common questions we hear from brands exploring video as an AI visibility channel.

Do YouTube Shorts Get Cited by AI?

Shorts account for just 5.7% of AI video citations. Long-form content in the 10-to-20-minute range earns the vast majority of citations. Shorts lack the transcript depth and chapter data AI engines need to validate topical authority.

Does View Count Affect AI Citations?

No. The correlation between views and AI citations is r = -0.03. AI engines evaluate transcript quality, chapter formatting, and metadata structure. They don't analyze popularity metrics. A well-structured low-view video will beat a poorly structured viral video every time.

How Long Should Videos Be for AI Visibility?

The citation sweet spot is 10 to 20 minutes. Videos in this range account for 32.1% of all AI citations. This length offers enough depth for structured transcripts and detailed chapter markers without diluting the core topic.

Can Small Channels Get Cited by AI Engines?

Yes. 41% of AI-cited videos have fewer than 1,000 views. Consistent niche expertise paired with structured metadata outperforms large channels posting unstructured content. Your subscriber count is invisible to the AI parsing engine.

Conclusion

Optimizing video content for AI visibility isn't about chasing engagement metrics. It requires the same structural discipline we apply to written AEO, just translated to a different medium.

- YouTube is the #1 cited domain in AI search with a 29.5% share. Structure, not views, determines which videos get cited.

- Video AEO mirrors written AEO. Transcripts, chapter markers, expanded descriptions, and schema markup are the optimization levers.

- Small channels with structured content outperform large channels. AI doesn't care about your subscriber count.

Start today: audit your existing YouTube videos. Add chapter markers to your highest-value content. Expand descriptions to 300+ words. Embed VideoObject schema on every page hosting a video.

We build integrated AI visibility strategies that span written content, video, and every platform where AI recommends brands.
