Answer Engine Optimization: What It Is and How to Get Cited

Answer engine optimization (AEO) gets your brand cited by ChatGPT, Perplexity, and AI Overviews. Learn the 6 citation signals, platform differences, and a real measurement framework.

TL;DR

- Answer engine optimization dictates whether AI recommends your brand or your competitors.

- Different platforms require distinct approaches since ChatGPT uses Bing while Perplexity relies on real-time web crawls.

- Six core citation signals drive visibility including content authority, entity clarity, and schema markup.

- Measuring AEO success involves tracking citation rates, share of voice, AI referral traffic, and conversion rates.

- In our internal data, AI search referrals convert at 23x the rate of traditional organic traffic.

- Content must be fresh. ChatGPT pulls 95% of its citations from pages published within the last 10 months.

Ask ChatGPT to recommend the best product in your category. If you aren't in the answer, you're losing revenue to competitors who are.

Gartner predicts traditional search volume will drop 25% by 2026. A 2025 Conductor survey shows 70% of organizations believe AEO will impact strategy within 1-3 years. For all the key numbers in one place, the AI search statistics page compiles 30+ verified data points on adoption, conversions, and citation sources. Yet most brands still have zero visibility into whether AI recommends them or their competitors.

We do answer engine optimization for a living. We've optimized AI visibility for 20+ brands and tracked what actually moves citation rates. This guide covers the 6 citation signals, platform-by-platform breakdown, and a measurement framework built from real campaign data.

What Is Answer Engine Optimization?

Answer engine optimization is the practice of structuring content so AI search engines like ChatGPT, Perplexity, and Google AI Overviews cite and recommend your brand in their responses.

AEO focuses on AI-generated answers rather than traditional search rankings. The goal is citation and recommendation, not ranking position. You want the model to pull your data and link to your site when answering a user query. This requires specific formatting and factual density. Traditional search optimization focused on keywords. Answer engine optimization focuses on providing clear, direct answers to specific questions.

The terminology gets confusing across marketing teams. SEO aims to rank pages in traditional search results. Generative engine optimization (GEO) aims to shape how AI models synthesize information. AEO specifically targets being cited and recommended when users ask questions. In practice, these overlap heavily. AEO serves as the industry practitioner term. GEO operates as the academic equivalent. eMarketer notes the terms function interchangeably in practice. Both disciplines target the exact same outcome.

AEO matters right now. ChatGPT boasts 800M+ weekly users. Users get direct answers and follow the cited links with intense commercial intent. They skip the research phase and jump straight to the evaluation phase. If AI engines omit your brand, you miss these high-intent buyers entirely. The buying journey compresses from hours of reading blogs to seconds of reading a synthesized answer. For B2B specifically, half of buyers now start vendor research in AI chatbots. The AI search for B2B guide covers the full multi-platform enterprise strategy.

Understanding what AEO is matters less than understanding how it works under the hood.

How Do Answer Engines Actually Work?


Answer engines use retrieval-augmented generation to search the web, select relevant sources, and synthesize responses that cite the most authoritative content.

The pipeline operates in three distinct stages. First comes retrieval. The model's search layer queries the web and pulls candidate sources. Bing powers ChatGPT. Google runs Gemini. Perplexity relies on its own index. Second is augmentation. Context from these retrieved sources gets injected directly into the prompt alongside the user's question. Finally, generation occurs. The large language model synthesizes an answer. It decides what to cite, recommend, or ignore.
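The three stages above can be sketched in a few lines of Python. This is a toy illustration, not how any specific answer engine is implemented: the in-memory `INDEX`, the word-overlap scoring, the example URLs, and the `generate` stub are all assumptions made for the example. A real engine queries a web search index and calls a large language model.

```python
from dataclasses import dataclass


@dataclass
class Source:
    url: str
    text: str


# Hypothetical in-memory index; a real engine queries a web search index.
INDEX = [
    Source("https://example.com/aeo-guide",
           "Answer engine optimization structures content so AI engines cite it."),
    Source("https://example.com/recipes",
           "A simple recipe for sourdough bread."),
    Source("https://example.org/aeo-stats",
           "Answer engine citations favor fresh, specific content."),
]


def retrieve(query: str, k: int = 2) -> list[Source]:
    """Stage 1: rank candidate sources by naive word overlap with the query."""
    terms = set(query.lower().split())
    scored = [(len(terms & set(s.text.lower().split())), s) for s in INDEX]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [source for score, source in ranked[:k] if score > 0]


def augment(query: str, sources: list[Source]) -> str:
    """Stage 2: inject the retrieved context into the prompt next to the question."""
    context = "\n".join(
        f"[{i}] {s.text} ({s.url})" for i, s in enumerate(sources, start=1)
    )
    return f"Sources:\n{context}\n\nQuestion: {query}\nAnswer with numbered citations."


def generate(prompt: str) -> str:
    """Stage 3: stand-in for the LLM call that decides what to cite or ignore."""
    return f"(model output for a {len(prompt)}-character prompt)"


query = "answer engine optimization"
sources = retrieve(query)
prompt = augment(query, sources)
print(prompt)
print(generate(prompt))
```

The point of the sketch is the ordering. A page that never makes it out of `retrieve` can't appear in the prompt, so the model has no chance to cite it.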

Think of it as a research assistant who searches, reads the top sources, then writes a synthesis with footnotes. This assistant evaluates the fetched pages in milliseconds. It looks for factual density and direct answers. It compares your page against competitors to determine which facts are most reliable.

Citation, recommendation, and mention represent different outcomes. A citation means your source is linked with a footnote. This holds the highest value and drives immediate clicks. A recommendation means the brand is named without a source link. A mention is a passing reference. Traditional search results pages draw no such distinctions, so understanding them gives you a real competitive edge. A mention builds brand awareness, but a citation drives revenue.

SEO remains the foundation, and AEO builds on top of it. Semrush and Seer Interactive data show 87% of ChatGPT citations come from Bing's top 10 results. You need traditional visibility to feed the retrieval layer. Then you need AEO to win the generation layer. If you fail at retrieval, the AI never sees your content. If you fail at generation, the AI reads your content but chooses not to cite it.

The retrieval layer is platform-specific, and that's where most AEO advice falls short.

How Does Each AI Platform Handle Citations Differently?

Each AI platform uses different data sources, citation formats, and ranking signals to determine which content gets cited in answers.

| Platform | Primary Data Source | Citation Style | Freshness Weight | Schema Impact |
|---|---|---|---|---|
| ChatGPT | Bing search index | Numbered footnotes with URLs | High (favors content under 10 months old) | Moderate |
| Perplexity | Own index + web crawl | Inline numbered citations | Very high (real-time) | Low |
| Google AI Overviews | Google search index | Expandable source cards | Moderate | High |
| Gemini | Google search index | Inline links | Moderate | High |
| Claude | Training data + limited web | Rarely cites URLs | Low (training cutoff) | Minimal |

ChatGPT relies on Bing. This means traditional search visibility dictates your baseline. ChatGPT favors content published within the last 10 months and prioritizes comprehensive guides. You must rank in Bing's top ten to reliably enter ChatGPT's retrieval phase. If you want to master this environment, read our guide on how to get recommended by ChatGPT.

Perplexity favors freshness and real-time relevance. It acts like an immediate research tool. To capture this audience, study how to get cited by Perplexity. Perplexity crawls the web constantly, looking for the most recent data points and news updates. It biases towards news outlets and real-time data feeds.

Google AI Overviews lean on structured data. Schema gives you an edge here. Google wants to parse your page instantly without wasting compute power. If your data is organized into clear tables and lists, AI Overviews will pull it straight into the search results page.

Gemini functions similarly to AI Overviews but provides inline links. It integrates with Google Workspace tools. Claude stands as the exception. It uses primarily training data and rarely cites URLs. You optimize for Claude through broad brand presence before its knowledge cutoff date.

Knowing the platforms is step one. The actual signals that determine who gets cited are step two.

What Are the 6 Signals That Determine Who Gets Cited?

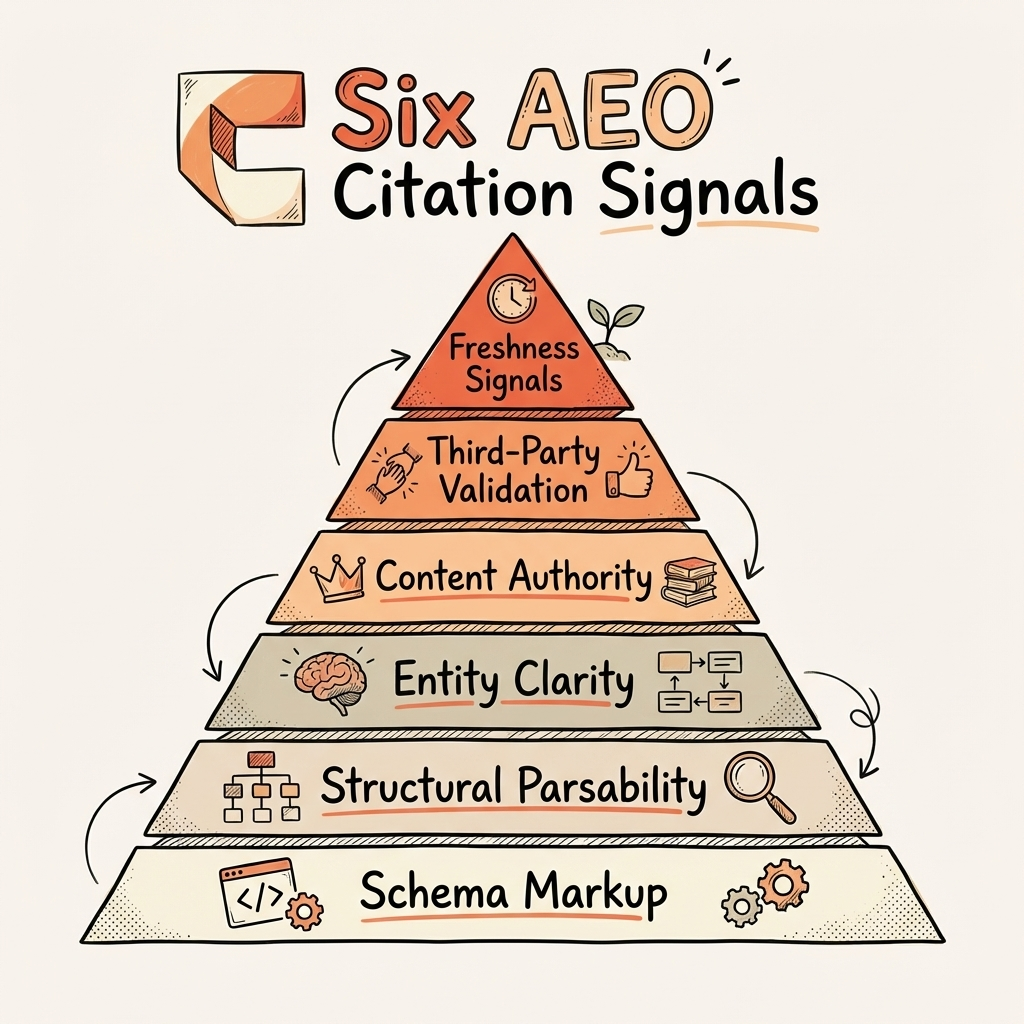

Six core signals consistently determine whether AI models will cite your content: authority, clarity, parsability, freshness, validation, and schema markup.

- Content Authority: Depth, specificity, and original data matter. AI models prefer primary sources over aggregators. A study from Princeton University found that adding statistics improves AI visibility by up to 40%. You must provide unique value. Do not repeat what everyone else says. Publish proprietary data. Write from direct experience. The machine identifies consensus and then looks for the most authoritative source of that consensus.

- Entity Clarity: Your brand, products, and people must be unambiguously identifiable across the web. If AI cannot distinguish your brand from competitors with similar names, it won't recommend you. This connects to E-E-A-T for AI search. Establish clear digital footprints. Use consistent naming conventions across all digital properties. Tie your brand to a specific knowledge graph entity.

- Structural Parsability: Clear headings, direct answers, and scannable formats win. Content structured for extraction gets cited more frequently than long-form prose. Use tight answer capsules beneath headings. Present data in markdown tables. Make the machine's job easy. When an LLM parses a page, it looks for semantic HTML tags. Properly formatted tables and lists signal dense, factual information that language models easily ingest.

- Freshness Signals: Recency matters more in AI search than traditional search. According to a Semrush AEO study, 95% of ChatGPT citations come from content published within the last 10 months. You cannot rely on five-year-old articles to dominate AI visibility. Update your core pages constantly. Change out old statistics for new ones. Show the models that your content actively reflects the current market.

- Third-Party Validation: Backlinks, reviews, Reddit mentions, and forum references feed the models. AI engines triangulate facts from multiple independent sources. A single mention on your own website holds little weight. Consistent mentions across authoritative domains build trust. If five independent reviews praise your software, the AI learns to recommend it. Digital PR remains a foundational piece of any answer engine optimization strategy. For the data on how backlinks for AI visibility work differently than traditional SEO links, the dedicated guide breaks down the research from Semrush and Ahrefs.

- Schema Markup: FAQPage, HowTo, and Article schema help AI parse structure. Impact varies by platform. It runs high for AI Overviews and lower for Perplexity. Proper markup ensures the retrieval layer categorizes your data accurately. Read our complete schema markup for AI visibility guide for implementation steps. Schema acts as a direct translation layer between your content and the retrieval engine.

We used this exact framework to grow Hamming.ai from 200 to 1,900 daily visitors in 12 weeks (9.5x the starting traffic). We applied the same signals to UV Blocker, taking them from 0 to 38,000 clicks in 6 months. The full AI visibility ROI breakdown, including revenue attribution and cost analysis, documents these results in detail.
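For the schema markup signal, here is a minimal sketch of generating FAQPage structured data as JSON-LD. The `@context`, `@type`, `mainEntity`, `Question`, and `acceptedAnswer` keys follow the schema.org vocabulary. The helper name `faq_jsonld` and the sample question are made up for illustration.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Hypothetical sample content; swap in the page's real questions and answers.
markup = faq_jsonld([
    ("Does AEO replace SEO?",
     "No. AEO builds on top of your existing search presence."),
])
print(json.dumps(markup, indent=2))
```

The printed JSON typically goes inside a `<script type="application/ld+json">` element on the page. Keep the marked-up questions and answers identical to the visible copy.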

Having the signals is one thing. Measuring whether they work is the real challenge.

How Do You Measure Answer Engine Optimization Success?

Measure AEO success by tracking four metrics: citation rate, share of voice, AI referral traffic, and conversion rate.

First is citation rate. This measures the percentage of relevant prompts that cite your brand. A solid benchmark sits between 3% and 13% for established brands. We helped Keywords.am grow their citation rate from 3% to 13% in just two weeks. You track this by testing a core set of prompts daily and measuring presence.
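As a rough sketch, citation rate is the number of test prompts whose answer cites your domain, divided by the number of prompts tested. The data shape below (a dict of prompt to cited URLs), the function names, and the example domains are assumptions for illustration. How you collect the cited URLs from each platform is up to your tooling.

```python
from urllib.parse import urlparse


def cites_domain(cited_urls: list[str], domain: str) -> bool:
    """True if any cited URL lives on the domain or one of its subdomains."""
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return True
    return False


def citation_rate(runs: dict[str, list[str]], domain: str) -> float:
    """Fraction of tested prompts whose answer cited the domain."""
    if not runs:
        raise ValueError("no prompt runs to score")
    hits = sum(cites_domain(urls, domain) for urls in runs.values())
    return hits / len(runs)


# Hypothetical results from one day's prompt set.
runs = {
    "best uv protective clothing": ["https://www.example.com/guide", "https://rival.com/a"],
    "uv shirts for hiking": ["https://rival.com/b"],
    "sun protection hats": [],
    "upf 50 clothing brands": ["https://notexample.com/c"],
}
print(f"{citation_rate(runs, 'example.com'):.0%}")
```

Matching on the parsed hostname rather than a substring avoids counting lookalike domains such as `notexample.com`.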

Second is share of voice. Track how often you appear versus competitors in AI responses. You must measure this per platform. You might dominate Perplexity but vanish on ChatGPT. Assign weighted scores based on whether you receive a citation, recommendation, or simple mention.
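One way to turn this into a number is a weighted score per platform. The weights below (3 for a citation, 2 for a recommendation, 1 for a mention) are placeholder assumptions, not a standard. Pick values that reflect how much each outcome is worth to you.

```python
from collections import defaultdict

# Hypothetical weights: a citation counts more than a recommendation or mention.
WEIGHTS = {"citation": 3, "recommendation": 2, "mention": 1}


def share_of_voice(observations):
    """Return {platform: {brand: share}} from (platform, brand, outcome) tuples."""
    scores = defaultdict(lambda: defaultdict(float))
    for platform, brand, outcome in observations:
        scores[platform][brand] += WEIGHTS[outcome]

    shares = {}
    for platform, by_brand in scores.items():
        total = sum(by_brand.values())
        shares[platform] = {brand: s / total for brand, s in by_brand.items()}
    return shares


# Hypothetical observations from one round of prompt testing.
observations = [
    ("perplexity", "us", "citation"),
    ("perplexity", "rival", "mention"),
    ("chatgpt", "rival", "citation"),
    ("chatgpt", "rival", "recommendation"),
    ("chatgpt", "us", "mention"),
]
for platform, brands in share_of_voice(observations).items():
    print(platform, {b: round(s, 2) for b, s in brands.items()})
```

In this made-up sample, "us" holds 75% of the weighted voice on Perplexity but only about 17% on ChatGPT, which is exactly the split that per-platform tracking is meant to surface.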

Third is AI referral traffic. Track GA4 referrals from chat.openai.com and perplexity.ai, along with Google's AI surfaces where they can be identified. This traffic segment is exploding. Frase and SparkToro data show AI referral traffic growing 527% YoY. Set up dedicated GA4 segments to isolate these specific referring domains.

Fourth is AI conversion rate. These users bring strong intent. Our internal brand data shows AI-referred visitors convert at 23x the rate of traditional organic traffic. They enter your site ready to buy. Build specific conversion goals for this traffic segment. Review our breakdown of the best AI visibility tools to find the right monitoring fit. For deep implementation details, read how to measure AI visibility.

The honest measurement problem remains a real hurdle. AI responses are non-deterministic. You use the exact same prompt and get different citations each time. The model's temperature dictates its randomness. No standardized attribution model for AI traffic exists yet. Most analytics tools still cannot distinguish Google AI Overview clicks from regular Google search clicks.
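Because the same prompt returns different citations from run to run, a single test says little. One reasonable way to handle this is to repeat each prompt and report a range rather than a point estimate. The sketch below uses the Wilson score interval, a standard statistical formula for a proportion. Applying it to citation tracking is our suggestion, and the sample numbers are made up.

```python
import math


def wilson_interval(hits: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion (z=1.96 gives roughly 95% confidence)."""
    if runs <= 0 or not 0 <= hits <= runs:
        raise ValueError("need runs > 0 and 0 <= hits <= runs")
    p = hits / runs
    denom = 1 + z * z / runs
    center = (p + z * z / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z * z / (4 * runs * runs)) / denom
    return max(0.0, center - half), min(1.0, center + half)


# Hypothetical: the brand was cited in 13 of 100 repeated runs of a prompt set.
low, high = wilson_interval(13, 100)
print(f"observed 13%, plausible range {low:.1%} to {high:.1%}")
```

With only 100 runs the range is wide, so a small week-over-week change in the observed rate may be noise rather than a real shift.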

To complicate matters further, 40-60% of AI citations change monthly. This is hard. Anyone who says otherwise is selling monitoring software. The data fluctuates. Platforms update their models constantly. Tracking requires patience and technical precision. We track these metrics for every client monthly. See how we work to build reliable reporting.
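To put a number on that churn for your own prompt set, compare the cited URLs from one month to the next. The definition used here (the share of last month's cited URLs that no longer appear this month) is an assumption, since the 40-60% figure above doesn't specify how change is measured. The URLs are placeholders.

```python
def citation_churn(previous: set[str], current: set[str]) -> float:
    """Share of last month's cited URLs that are missing from this month's set."""
    if not previous:
        raise ValueError("previous month has no citations to compare")
    return len(previous - current) / len(previous)


# Hypothetical cited URLs for the same prompt set in two consecutive months.
last_month = {"a.com/1", "b.com/2", "c.com/3", "d.com/4", "e.com/5"}
this_month = {"a.com/1", "b.com/2", "f.com/6"}
print(f"churn: {citation_churn(last_month, this_month):.0%}")
```

Tracking the dropped URLs themselves (`last_month - this_month`) is often more useful than the percentage, because it shows which pages need a refresh.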

Frequently Asked Questions About Answer Engine Optimization

AEO questions span platform strategy, competitive advantage, timeline, and cost considerations that marketing teams consistently raise.

Is AEO Different from GEO?

AEO and GEO describe the same discipline from different angles. AEO is the industry practitioner term. GEO is the academic term from Princeton researchers. The tactics overlap almost entirely. Read our generative engine optimization guide for the research perspective.

Does AEO Replace SEO?

No. According to the Semrush and Seer Interactive data, 87% of ChatGPT citations come from pages already ranking in Bing's top 10 results. Answer engine optimization builds on top of your existing search presence. It does not replace it. You need a baseline of authority before AI models will trust your content.

How Long Until AEO Results Show?

Most brands see measurable citation improvements within 2 to 6 weeks. The full traffic impact compounds over 3 to 6 months. Keywords.am reached a 13% citation rate in 2 weeks. Hamming.ai grew from 200 to 1,900 daily visitors in 12 weeks. Speed depends on your current technical foundation.

What Does AEO Cost?

AEO ranges from DIY using internal teams to agency partnerships at $2,000 to $4,000 per month for comprehensive optimization. The real cost is invisibility. Losing high-intent buyers to competitors costs far more than any monthly retainer. See our pricing for transparent service tiers.

Conclusion

Answer engine optimization determines whether AI recommends your brand alongside or instead of competitors in generated answers.

- Answer engine optimization dictates how brands get cited by AI. It serves as the practitioner term for what researchers call GEO.

- Each AI platform behaves differently. A one-size-fits-all approach fails. ChatGPT requires traditional search strength while Perplexity demands real-time freshness.

- Six core citation signals form a systematic, repeatable framework. You need authority, clarity, parsability, freshness, validation, and schema.

- Measurement works but remains imperfect. Honest, rigorous tracking beats pretending the attribution problem is fully solved.

Ask ChatGPT right now to recommend the best product in your category. If you aren't in the answer, that's your starting line. Every day you wait, competitors secure real estate in these AI responses.

We build AEO strategies for brands that want to be the answer, not an afterthought. See how we work.


“We went from 200 visitors/day to 1,900 visitors/day and 40% of demos are from AI search.”

Sumanyu Sharma · CEO, Hamming.ai

“Cintra helped me go from 3k to 7.5k daily traffic and doubled weekly orders in 1.5 months.”

Russ Coulon · Owner, UV Blocker

“We saw a lift from 3% to 13% visibility in the first 2 weeks, and organic traffic hit its highest ever.”

Ash Metry · Founder, Keywords.am
