The Content Quality Gap in AI Search: What Gets Cited vs. What Prompt Stacks Produce

Data on the content quality gap in AI search: AI engines cite original research, not reformatted competitor data. See what gets cited and what gets ignored.

TL;DR

Viral SEO prompt stacks produce content that fails 5 of 7 AI citation signals. AI search engines cite original research, expert framing, and structured depth. The gap isn't AI vs. human. It's strategy-less AI vs. strategy-driven AI.

A viral post promises Claude can replace a $10,000/month SEO agency. Thousands of businesses copy-paste these prompt stacks daily. The content quality gap AI search creates has never been wider.

When we tracked what ChatGPT and Perplexity actually cite across 680 million citations, not a single characteristic of prompt-stack content appeared on the list. Most marketers don't realize this gap exists.

59.6% of AI Overview citations come from URLs not even in the top 20 organic results. Ranking on Google no longer guarantees AI visibility. Here's what the data shows, what prompt stacks miss, and what it takes to cross the citation threshold.

What Do AI Search Engines Actually Cite?

AI search engines cite content with original data, sequential heading structures, schema markup, and quarterly freshness updates over pages optimized for keyword rankings alone.

Otterly.AI analyzed over one million data points to uncover what triggers an AI citation. The data reveals a clear preference for structured depth over superficial keyword placement:

- Sequential heading structures correlate with 2.8x higher citation likelihood

- 87% of cited pages use a single H1 tag

- 61% of cited pages implement 3+ schema types

- Nearly 80% include scannable lists that algorithms digest easily

- Pages not updated quarterly are 3x more likely to lose existing citations
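The structural signals in this list can be checked mechanically. The following is a minimal sketch, assuming the page HTML is already available as a string. The class `StructureAudit`, the function `audit_structure`, and the rule that "sequential" means no heading jumps more than one level deeper are illustrative assumptions, not Otterly.AI's methodology.

```python
import json
from html.parser import HTMLParser


class StructureAudit(HTMLParser):
    """Collects heading levels, list counts, and JSON-LD schema types."""

    def __init__(self):
        super().__init__()
        self.heading_levels = []   # e.g. [1, 2, 3, 2]
        self.list_count = 0        # number of <ul>/<ol> elements
        self.schema_types = set()  # distinct top-level @type values in JSON-LD
        self._in_jsonld = False
        self._jsonld_buffer = []

    def handle_starttag(self, tag, attrs):
        if tag in ("h1", "h2", "h3", "h4", "h5", "h6"):
            self.heading_levels.append(int(tag[1]))
        elif tag in ("ul", "ol"):
            self.list_count += 1
        elif tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._jsonld_buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._jsonld_buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                payload = json.loads("".join(self._jsonld_buffer))
            except json.JSONDecodeError:
                return  # ignore malformed JSON-LD in this sketch
            items = payload if isinstance(payload, list) else [payload]
            for item in items:
                if isinstance(item, dict) and "@type" in item:
                    types = item["@type"]
                    self.schema_types.update(types if isinstance(types, list) else [types])


def audit_structure(html):
    """Return a small report on the structural signals found in the HTML."""
    parser = StructureAudit()
    parser.feed(html)
    parser.close()
    levels = parser.heading_levels
    # Assumption: "sequential" means no heading skips a level on the way down.
    sequential = all(b - a <= 1 for a, b in zip(levels, levels[1:]))
    return {
        "single_h1": levels.count(1) == 1,
        "sequential_headings": bool(levels) and levels[0] == 1 and sequential,
        "schema_type_count": len(parser.schema_types),
        "has_lists": parser.list_count > 0,
    }


sample = """
<html><head>
<script type="application/ld+json">
[{"@type": "Article"}, {"@type": "FAQPage"}, {"@type": "Organization"}]
</script>
</head><body>
<h1>Title</h1><h2>Section</h2><h3>Detail</h3><h2>Next</h2>
<ul><li>One</li></ul>
</body></html>
"""
report = audit_structure(sample)
print(report)
```

A report like this only says whether the signals are present. It says nothing about whether the content under those headings is original.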

Each Platform Cites Differently

Platform differences dictate execution strategy. Profound's citation pattern analysis shows sharp divergence across the three major AI search engines.

ChatGPT favors Wikipedia, which makes up 47.9% of its top 10 citations. It leans toward encyclopedic authority and established media. Perplexity takes a different approach, pulling 6.6% of its citations from Reddit and favoring community discourse and fresh, well-cited articles. Google AI Overviews show the strongest brand preference: 59.8% are direct brand citations with clickable links.

Content depth matters more than traditional SEO metrics. Sentence counts, word counts, and readability scores correlate with citations more strongly than traffic and backlinks do. And 85% of brand mentions originate from third-party pages, not owned domains. Building real AI visibility demands strategies that prioritize deep expertise over keyword density. Understanding these citation signals is the first step toward closing the gap between what you publish and what AI engines cite.

What Do SEO Prompt Stacks Actually Produce?

SEO prompt stacks output reformatted competitor research, template-based GBP posts, generic review responses, and keyword-stuffed articles with zero original data or expert perspective.

Viral Claude Cowork threads and ChatGPT mega-prompts flood timelines daily. They promise to automate content creation entirely. We examined the outputs across multiple industries. The results share glaring, systemic flaws that directly harm AI visibility.

Typical Prompt Stack Outputs

- Competitor analysis reports that reformat publicly available data without adding new insights

- GBP post calendars built from rigid templates with generic descriptions

- Review response templates that are formulaic and brand-name-swappable

- "SEO-optimized" blog articles that are keyword-stuffed rewrites of top-ranking competitors

- Technical audit checklists scraped from existing, outdated tools

These outputs are genuinely useful for traditional SEO tasks. They fail completely at earning AI citations, and they widen the citation gap with every piece published.

The shared flaw is structural emptiness. Zero original data. No recognizable expert perspective. No first-party research. This directly contradicts the E-E-A-T criteria that AI search engines apply.

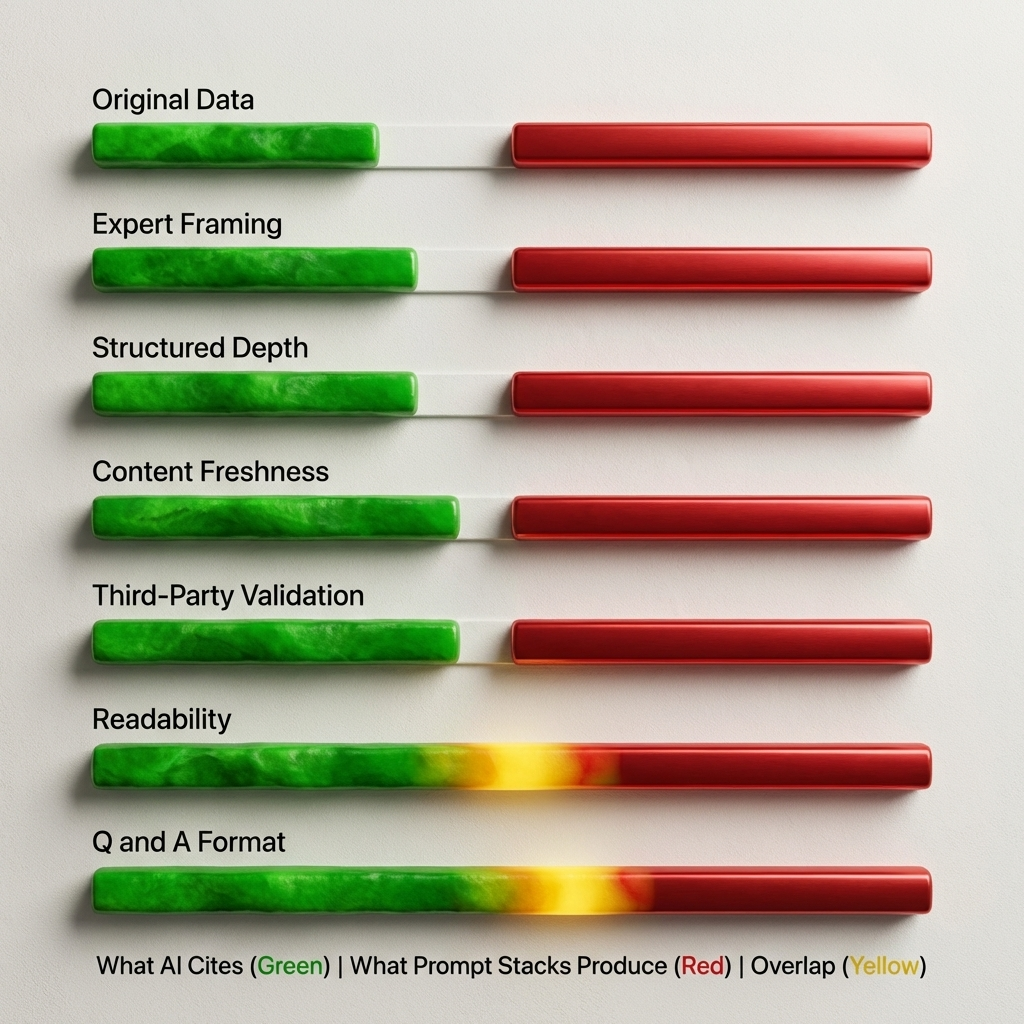

How Wide Is the Content Quality Gap AI Search Engines Enforce?

Prompt-stack content fails on five of seven citation signals tracked across AI search platforms, including original data, expert framing, and structured depth.

| Citation Signal | What AI Cites | What Prompt Stacks Produce | Gap |
|---|---|---|---|
| Original data/research | First-party stats, proprietary frameworks | Reformatted competitor data | FAIL |
| Expert framing | Named perspectives, credentialed analysis | Generic authoritative tone | FAIL |
| Structured depth | Sequential H1-H2-H3 with schema (2.8x higher citation likelihood) | Flat keyword-optimized structure | FAIL |
| Content freshness | Updated quarterly (stale pages are 3x more likely to lose citations) | One-time publish, no update cycle | FAIL |
| Third-party validation | 85% of brand mentions from third-party sources | Zero earned mentions | FAIL |
| Readability/depth | High word count, scannable formatting | Meets word count but low depth | PARTIAL |
| Question-answer format | Direct answers under question headings | Sometimes present | PARTIAL |

Here's the irony no one talks about. People use AI to create content that AI search engines won't cite. This isn't a criticism of the tools. They're highly capable. The strategy driving them is what's missing.

Otterly.AI reports that only 30% of brands maintain visibility across consecutive AI answers. Prompt-stack content, without update cycles or third-party validation, falls out of consideration almost immediately after publication.

Why Does AI-Generated Content Fail AI Search's Quality Filters?

AI search engines cross-reference claims across multiple sources, rewarding content backed by verifiable data and real expertise while filtering out recycled information.

AI search engines don't index content the way Google does. They evaluate three specific qualities:

- Verifiable claims - Can the statement be cross-referenced against authoritative sources?

- Source diversity - Does this perspective appear across multiple independent entities?

- Information novelty - Does this piece add something genuinely new to the topic?

Understanding these differences is the first step toward getting cited by Perplexity and other AI platforms.

Third-Party Validation Outweighs Self-Publishing

Brands are 6.5x more likely to be cited through third-party sources than their own domains. AI platforms trust what others say about you far more than what you say about yourself.

Prompt stacks can't bridge this gap. They can't conduct original research or field surveys. They can't inject real customer data or specific case studies. They can't provide expert commentary backed by actual credentials. And they can't build third-party validation through earned media or community presence.

The Technical Barrier

The technical gap is equally steep. 73% of sites have technical barriers blocking AI crawler access. Prompt stacks ignore crawlability entirely. They don't generate schema markup or structured data. Even well-written AI content stays invisible when the technical foundation is missing.
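One barrier that is easy to test is a robots.txt rule that shuts out AI crawlers. The sketch below uses Python's standard `urllib.robotparser` on a robots.txt string. The user-agent tokens in `AI_CRAWLERS` are an illustrative assumption and should be checked against each vendor's current documentation. The function name `blocked_ai_crawlers` and the sample file are hypothetical. Robots rules are only one kind of barrier, so a clean result here does not mean a site is fully crawlable.

```python
from urllib.robotparser import RobotFileParser

# Assumed list of AI crawler user-agent tokens; verify before relying on it.
AI_CRAWLERS = ["GPTBot", "OAI-SearchBot", "PerplexityBot", "ClaudeBot"]


def blocked_ai_crawlers(robots_txt, url="https://example.com/"):
    """Return the AI crawler tokens that robots.txt forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_CRAWLERS if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks one AI crawler and allows everything else.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
blocked = blocked_ai_crawlers(sample_robots)
print(blocked)
```

Parsing a string keeps the check offline and repeatable. For a live site, fetch the real robots.txt first and pass its text in.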

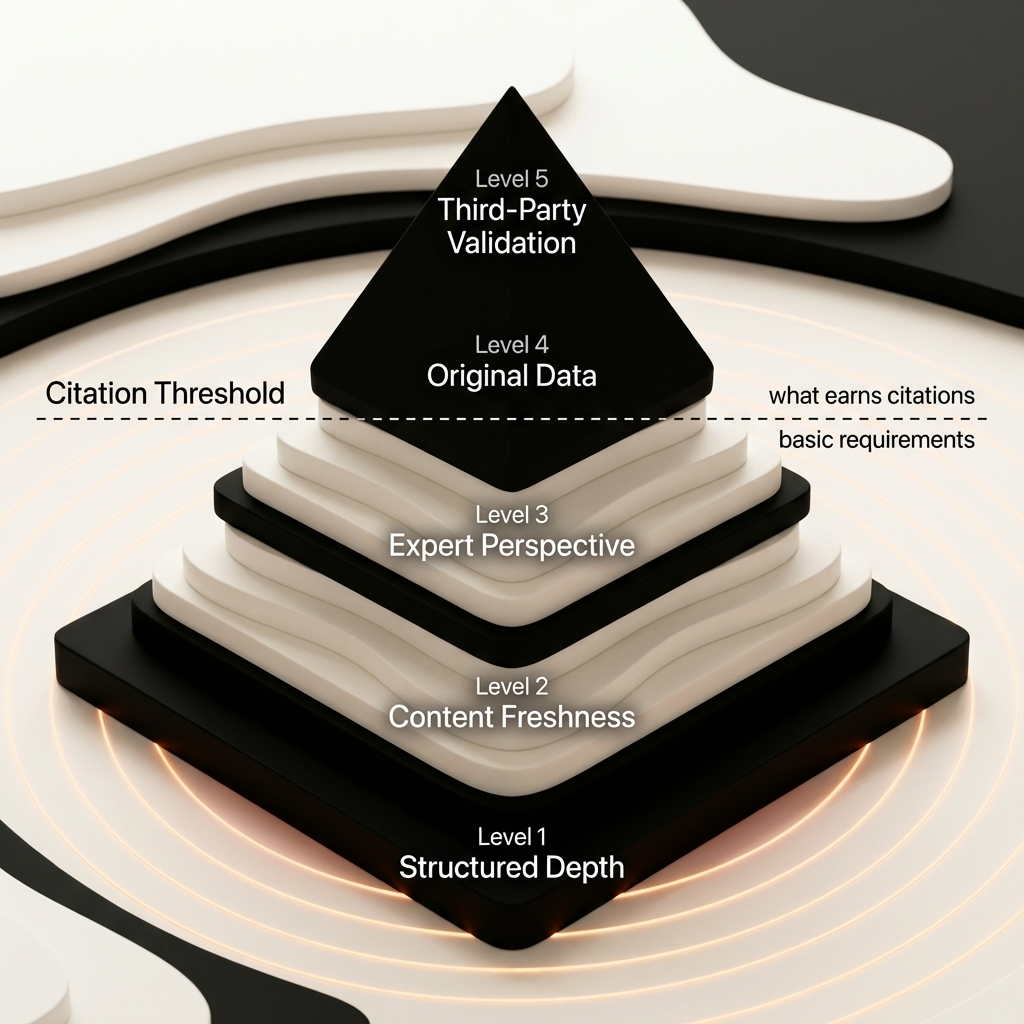

What Content Crosses the AI Citation Threshold?

Content that earns AI citations combines original frameworks, data-backed claims, named expert perspectives, and structured formatting updated on a quarterly cycle.

We use a five-part Citation Threshold Framework to drive AI visibility for our clients. Every piece of content must meet these requirements:

The Citation Threshold Framework

- Original data or frameworks - First-party research, proprietary methodology, or unique analysis not found elsewhere

- Expert perspective with credentials - Named authors, quoted specialists, credentialed analysis that AI can verify

- Structured depth - Sequential headings, 3+ schema types, scannable lists that algorithms parse easily

- Third-party validation - Active community presence on Reddit and LinkedIn, earned media, authority backlinks

- Freshness cycle - Quarterly updates at minimum. Pages not updated quarterly are 3x more likely to lose existing citations
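The five requirements can be turned into a simple per-page tally. This is a rough sketch only: the `PageAudit` fields, the 92-day reading of "quarterly", and the "at least one third-party mention" threshold are all assumptions made for illustration, and most of the inputs still need a human judgment call.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class PageAudit:
    """Hypothetical record of one page's audit findings."""
    url: str
    has_original_data: bool
    has_named_expert: bool
    sequential_headings: bool
    schema_type_count: int
    third_party_mentions: int
    last_updated: date


def framework_score(page, today, max_age_days=92):
    """Return (requirements met out of five, names of the ones missed)."""
    checks = {
        "original_data": page.has_original_data,
        "expert_perspective": page.has_named_expert,
        "structured_depth": page.sequential_headings and page.schema_type_count >= 3,
        "third_party_validation": page.third_party_mentions > 0,
        # Assumption: "quarterly" is approximated as 92 days.
        "freshness": (today - page.last_updated).days <= max_age_days,
    }
    missing = [name for name, ok in checks.items() if not ok]
    return sum(checks.values()), missing


page = PageAudit(
    url="https://example.com/guide",
    has_original_data=False,
    has_named_expert=True,
    sequential_headings=True,
    schema_type_count=3,
    third_party_mentions=0,
    last_updated=date(2025, 1, 10),
)
score, missing = framework_score(page, today=date(2025, 6, 1))
print(f"{page.url}: {score}/5, missing: {', '.join(missing)}")
```

The list of missing requirements is the useful part, since it shows which gap to close first on each page.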

What Gets Cited vs. What Gets Ignored

An original benchmark study with its own methodology and proprietary data tables gets cited repeatedly because it provides new facts. A generic "Top 10 Tools" listicle copying feature descriptions from product pages gets ignored entirely.

A deep analysis from a named author with practitioner quotes and a proprietary framework crosses the threshold. A "Complete Guide" covering the same information already on Wikipedia gets filtered out. AI algorithms optimize for novel information gain.

The content quality gap AI search reveals isn't about AI vs. human content creators. It's about strategy-less AI vs. strategy-driven AI. When real expertise guides execution, content crosses the citation threshold consistently. This is the core difference between traditional SEO and AI search optimization.

Frequently Asked Questions About AI Search Content Quality

These are the most common questions content marketers ask about the content quality gap AI search engines create.

Can AI-generated content get cited by ChatGPT or Perplexity?

Yes, but only when it's built on original data, expert input, and proper structure. The tool matters less than the strategy and source material behind it.

Why doesn't my Google-ranked content appear in AI search?

AI search engines evaluate content differently than Google. 59.6% of AI Overview citations come from URLs not in the top 20 organic results. Ranking doesn't guarantee citation.

What's the fastest way to improve AI citation rates?

Start with an AI content audit of your highest-traffic pages. Add original data, update quarterly, implement schema markup, and build structured answer capsules under question headings.
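As one illustration of schema markup paired with question headings, the sketch below builds schema.org `FAQPage` JSON-LD from question-and-answer pairs. The helper name `faq_jsonld` is hypothetical. The source does not say which schema types any particular engine reads, so treat this as an example of the markup format rather than a guaranteed citation lever.

```python
import json


def faq_jsonld(qa_pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


pairs = [
    (
        "Can AI-generated content get cited by ChatGPT or Perplexity?",
        "Yes, but only when it's built on original data, expert input, and proper structure.",
    ),
]
markup = faq_jsonld(pairs)

# Emit the tag that would go in the page's <head>.
print('<script type="application/ld+json">')
print(json.dumps(markup, indent=2))
print("</script>")
```

The answer text in the markup should match the visible answer under the question heading on the page.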

Are Claude SEO prompt stacks completely useless?

Prompt stacks handle traditional SEO tasks well: competitor research, technical audits, keyword mapping. They fall short for AI citation optimization, which requires original expertise and structured depth.

How does Cintra track what AI search engines cite?

We use Peec.ai to monitor brand visibility across ChatGPT, Perplexity, and AI Overviews. We track citation rates, mention frequency, and competitive positioning in real time.

Conclusion

The content quality gap AI search engines enforce is structural, not cosmetic. SEO prompt stacks generate volume but deliver zero AI visibility. They fail five of seven core citation signals.

The real gap isn't between AI and human content creators. It sits between strategy-less AI output and strategy-driven AI execution. Content freshness, original data, and third-party validation are the three highest-impact citation signals you can build.

Audit your top 10 pages against the Citation Threshold Framework today. Count how many of the five requirements each page meets. You'll see exactly where your content falls short.
