How to Fix Wrong AI Answers Brand Owners Get (2026 Guide)

Fix the wrong AI answers brand owners encounter with this 6-step playbook. Learn why ChatGPT, Perplexity, and Google AI Mode misrepresent brands and how to correct them.

TL;DR

Need to fix the wrong AI answers buyers see about your brand? It's not a PR problem. It's a content engineering problem. This playbook gives you the exact 6-step process to correct what ChatGPT, Perplexity, and Google AI Mode say about your company, with platform-specific timelines and measurable success criteria.

89% of B2B buyers now use generative AI during their purchasing process. When a buyer asks ChatGPT about your product and gets wrong pricing, outdated features, or a competitor's name instead of yours, that's revenue walking out the door.

This isn't hypothetical. AI hallucinations cost businesses $67.4 billion in 2024. And factual incorrectness accounts for 38% of user-reported hallucinations, according to the largest empirical study of user-reported hallucinations, published in Scientific Reports. These are the kind of errors that directly damage brands.

We built this playbook because brand owners bring us wrong AI answers to fix every week. Here's exactly how it works, why correction timelines differ between platforms, and what you can do starting today.

Why Does AI Get Your Brand Wrong?

AI platforms build brand profiles from two sources: training data snapshots and real-time web retrieval. Both can contain outdated, incomplete, or conflicting information about your company.

Training data is the foundation of models like ChatGPT and Claude. These models learn from massive crawls of the internet, frozen at a specific point in time. If your brand changed pricing six months ago, the training data might still reflect the old number. There's no inbox to email, no form to submit. The information is baked in until the next training cycle.

Real-time retrieval powers platforms like Perplexity and Google AI Mode. These systems search the live web for every query, then synthesize answers from what they find. They're more current, but they're only as accurate as the sources they pull from. If a third-party review site lists your old pricing and outranks your actual pricing page, the AI serves the wrong answer.

This creates what researchers call an "Entity Clarity Deficit." When AI can't find a single, authoritative source for basic facts about your brand, it fills the gaps with whatever conflicting signals it finds. Imagine a SaaS company that charges $49/month, but Capterra still says $99 and a blog from 2024 says $79. The AI picks one. It's often wrong.
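The conflicting-price scenario above can be checked mechanically once you have collected what each source says. The following is a minimal sketch, assuming you gather the claims by hand; the source labels and the `find_conflicts` helper are illustrative names, not part of any tool.

```python
# Hypothetical price claims, taken from the SaaS example above.
price_claims = {
    "vendor pricing page": 49,
    "Capterra listing": 99,
    "2024 blog post": 79,
}


def find_conflicts(claims: dict[str, int], official_source: str) -> dict[str, int]:
    """Return every source whose value differs from the official one."""
    official_value = claims[official_source]
    return {src: val for src, val in claims.items() if val != official_value}


conflicts = find_conflicts(price_claims, "vendor pricing page")
official = price_claims["vendor pricing page"]
for source, price in conflicts.items():
    print(f"{source} says ${price}/month, official price is ${official}/month")
```

Each source this flags is a candidate for correction in Step 2 of the playbook.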

The Scientific Reports study analyzed 3 million user reviews from 90 AI apps and found that factual incorrectness accounts for 38% of all user-reported hallucinations. Not vague errors. Specific, verifiable wrong facts. Understanding what AI visibility actually means is the first step toward fixing it.

What Are the Five Types of AI Brand Errors?

AI brand errors fall into five distinct categories. The fix depends on identifying which type you're dealing with.

Wrong Pricing or Features

AI cites outdated or third-party pricing instead of your actual numbers. This is the most common error for SaaS and ecommerce brands. A prospect hears "$199/month" from ChatGPT when you actually charge $99. They never visit your pricing page. They pick the competitor that "costs less."

Competitor Confusion

AI merges two similar brands into one profile or attributes your competitor's features to your brand. This happens with similar names, overlapping product categories, or brands that compete for the same search queries. We've seen brands lose deals because ChatGPT credited their core feature to a competitor.

Outdated Information

Your brand pivoted, rebranded, or changed its offerings, but AI still references old positioning. For training-based models, this is a lag problem. For retrieval-based models, it means old content still outranks new content in AI's source selection.

Hallucinated Facts

AI fabricates product capabilities, partnerships, or certifications that don't exist. This is how Air Canada got taken to a tribunal. Their chatbot invented a bereavement fare policy. The BC Civil Resolution Tribunal ruled Air Canada liable for the fabricated information in February 2024. If AI can fabricate airline policies, it can fabricate facts about your brand too.

Negative Sentiment Amplification

AI disproportionately surfaces negative reviews, complaints, or controversy. Your brand has 4.8 stars across 2,000 reviews, but the three one-star reviews with detailed complaints get cited because they contain more extractable detail. This is the hardest category to fix because it reflects real content, just weighted incorrectly.

| Error Type | Example | Primary Cause | Fix Difficulty |
|---|---|---|---|
| Wrong pricing/features | "They charge $99/mo" (actual: $49/mo) | Third-party sources, training data | Medium |
| Competitor confusion | Brand A features attributed to Brand B | Weak entity signals | Hard |
| Outdated information | Old product name, discontinued feature | Training data lag | Medium |
| Hallucinated facts | Fabricated partnership or certification | Insufficient source material | Easy-Medium |
| Negative sentiment amplification | Complaints weighted over positive reviews | Web content distribution | Hard |

How Long Does Each AI Platform Take to Correct Brand Information?

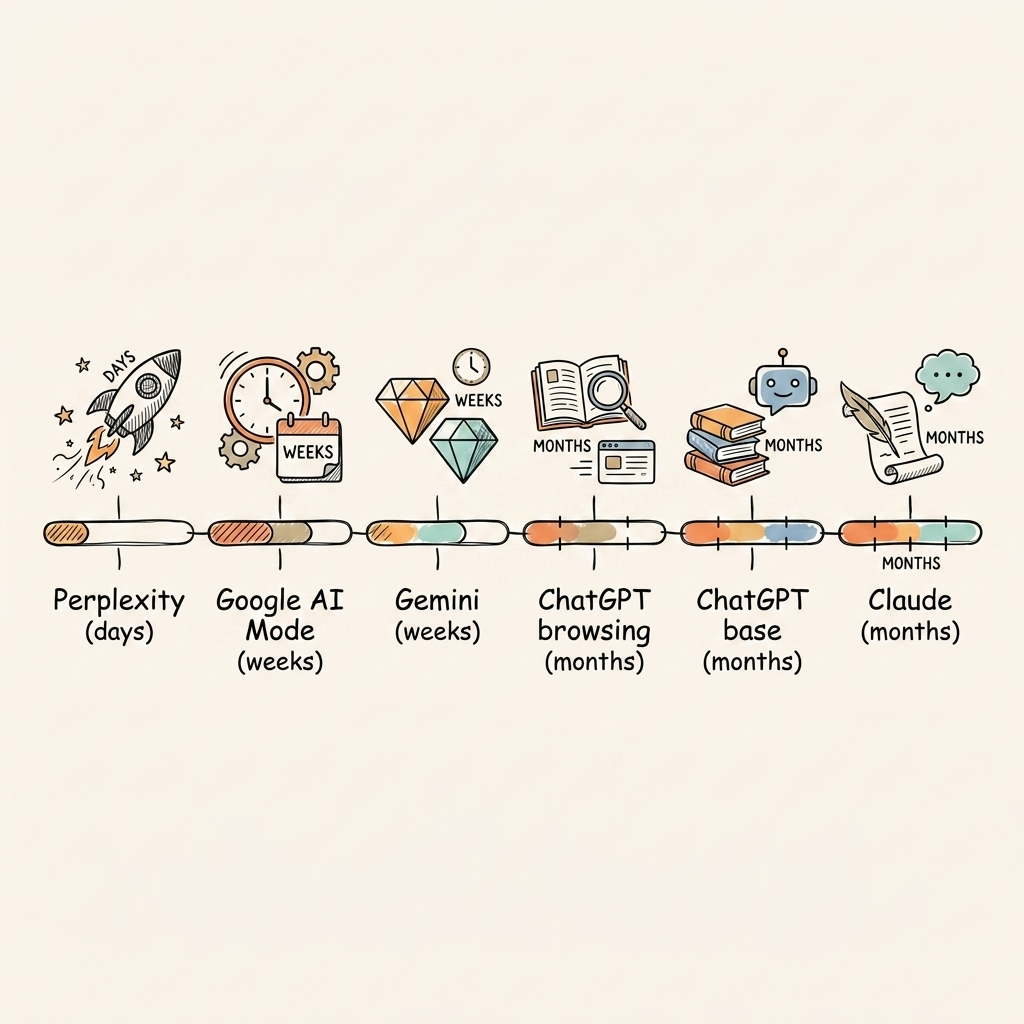

Correction timelines range from days for retrieval-based platforms to months for training-based models. Understanding the architecture behind each platform helps you set realistic expectations and prioritize your efforts.

Perplexity and Google AI Mode search the live web on every query. Fix the source content, and the AI answer can change within days. If your corrected pricing page ranks well and carries strong authority signals, Perplexity picks it up on its next retrieval. Google AI Mode follows similar patterns, grounded in Google Search results.

ChatGPT is more complicated. It has a web-browsing mode that uses real-time retrieval, but many users interact with the base model that runs entirely on training data. Even when browsing mode triggers, ChatGPT prioritizes its training knowledge unless retrieval results are overwhelmingly different. Corrections here take 2 to 8 weeks for browsing mode, and 3 to 6 months for the base model to catch up.

Claude answers mostly from training data unless its web search feature is used. For answers drawn from training data alone, corrections only take effect when Anthropic releases a new model version with updated training data. Plan for 3 to 6 months.

Gemini is hybrid. It leans on Google Search signals heavily, so corrections tied to Google rankings show results faster. Expect 1 to 6 weeks depending on query type.

| Platform | Architecture | Correction Timeline | What You Control |
|---|---|---|---|
| Perplexity | Retrieval-first (RAG) | Days to 2 weeks | Source content ranking, freshness |
| Google AI Mode | Search-grounded | 1-4 weeks | SEO signals, structured data |
| ChatGPT (browsing) | Hybrid | 2-8 weeks | Source authority, web presence |
| ChatGPT (base) | Training data | 3-6 months | Long-term content authority |
| Claude | Training data | 3-6 months | Published content quality |
| Gemini | Hybrid | 1-6 weeks | Google Search signals |

Start with retrieval-based platforms. Perplexity and Google AI Mode offer the fastest ROI on correction efforts. Then build the long-term authority that training-based models need.

How Do You Fix Wrong AI Answers Brand Buyers Encounter?

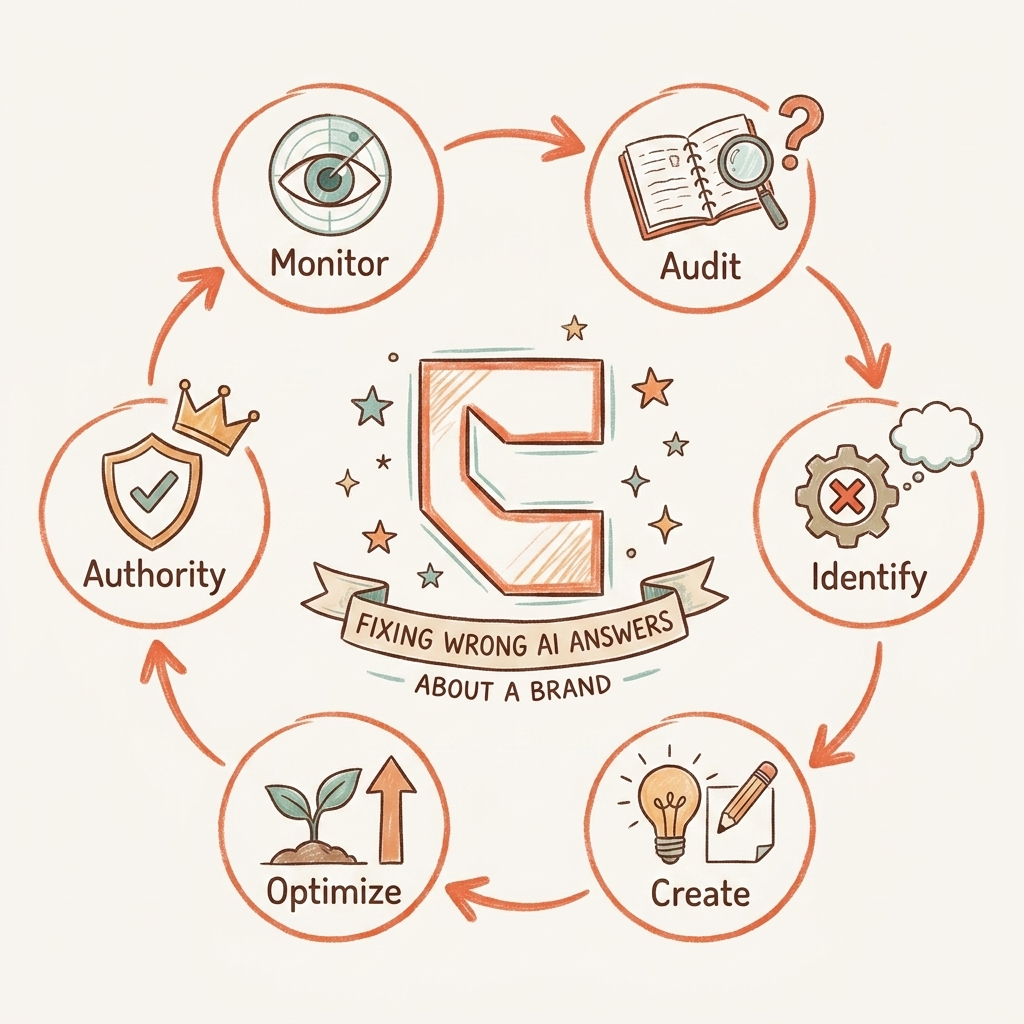

Fix wrong AI answers by auditing current outputs, identifying the misinformation source, creating correction content, optimizing for AI crawlers, building external signals, and monitoring for drift.

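Step 1: Audit What AI Currently Says

Search your brand name and your most common buyer queries on ChatGPT, Perplexity, and Google AI Mode. Screenshot every wrong answer. This record is the baseline you measure every later correction against.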
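One way to keep the Step 1 audit repeatable is to generate a blank grading sheet with one row per platform and query, then fill it in by hand. This is a rough sketch under stated assumptions: "Acme" is a placeholder brand, the queries are hypothetical, and the column names are a suggestion rather than a required format.

```python
import csv
import io
from itertools import product

PLATFORMS = ["ChatGPT", "Perplexity", "Google AI Mode", "Claude", "Gemini"]

# Hypothetical buyer queries; replace "Acme" with your own brand.
QUERIES = [
    "How much does Acme cost?",
    "What does Acme do?",
    "Acme vs alternatives",
]


def build_audit_sheet(platforms: list[str], queries: list[str]) -> str:
    """Return CSV text with one empty audit row per platform/query pair."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(["platform", "query", "answer_summary", "grade", "screenshot_file"])
    for platform, query in product(platforms, queries):
        writer.writerow([platform, query, "", "", ""])
    return buffer.getvalue()


sheet = build_audit_sheet(PLATFORMS, QUERIES)
print(sheet)
```

Re-running the same sheet each month keeps the before-and-after comparison like for like.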

Step 2: Identify the Source of Misinformation

Every wrong AI answer has a source. Three possibilities:

- Your own website has outdated or ambiguous information

- Third-party sites (review aggregators, old press releases, competitor comparisons) have wrong data

- Training data contains errors with no clear live web source

Each requires a different strategy. Errors on your own website are the fastest to fix. Errors baked into training data require the most patience.

Step 3: Create Authoritative Correction Content

Update your own pages with clear, unambiguous factual statements. Add FAQ sections that directly address the wrong AI answer using the exact phrasing a buyer would use. Implement schema markup to make facts machine-readable. When AI can extract structured product data from your page, it's less likely to fabricate alternatives.
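As an illustration of machine-readable facts, the sketch below builds schema.org `Product` markup with an `Offer` carrying the price, and wraps it in a JSON-LD script tag. The brand name, description, price, and URL are placeholders, and `build_product_jsonld` is a hypothetical helper, so treat this as a starting point rather than a complete markup strategy.

```python
import json


def build_product_jsonld(name: str, description: str, price: float,
                         currency: str, url: str) -> dict:
    """Build a schema.org Product object with a single Offer."""
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "url": url,
        },
    }


# Placeholder values for illustration only.
markup = build_product_jsonld(
    name="Acme Analytics",
    description="Reporting software for small teams.",
    price=49,
    currency="USD",
    url="https://example.com/pricing",
)

script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(markup, indent=2)
    + "\n</script>"
)
print(script_tag)
```

The resulting tag goes in the HTML of the pricing page itself, so the structured price sits next to the visible one.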

Step 4: Optimize for AI Crawlers

Implement an llms.txt file that tells AI crawlers what your brand is and what information matters most. Submit updated pages to IndexNow for fast re-indexing. Verify your robots.txt allows AI crawlers: GPTBot, PerplexityBot, ClaudeBot, and Google-Extended. Blocking these crawlers is one of the most common reasons AI has stale brand information.
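The robots.txt check can be scripted with Python's standard `urllib.robotparser`. The sketch below parses a sample robots.txt and lists which of the four user-agent tokens named above are blocked from a given page. The sample file and URL are made up, `blocked_ai_agents` is an illustrative helper, and the standard-library parser may not match every rule exactly as each crawler interprets it.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in the step above.
AI_USER_AGENTS = ["GPTBot", "PerplexityBot", "ClaudeBot", "Google-Extended"]


def blocked_ai_agents(robots_txt: str, page_url: str) -> list[str]:
    """Return the AI user-agent tokens that robots.txt blocks from page_url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_USER_AGENTS
            if not parser.can_fetch(agent, page_url)]


# Made-up robots.txt that blocks GPTBot but allows everyone else.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

blocked = blocked_ai_agents(sample_robots, "https://example.com/pricing")
print("Blocked AI agents:", blocked)
```

To test a live site, fetch its robots.txt text first and pass it to the same function.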

Step 5: Build External Authority Signals

Your website alone isn't enough. AI weighs consensus. Earn media mentions that reinforce correct brand facts. Update third-party profiles on G2, Capterra, and Trustpilot. Publish thought leadership on industry sites. Build the kind of E-E-A-T signals and authoritative backlinks that make AI treat your brand as the primary source of truth about itself.

Step 6: Set Up Ongoing Monitoring

AI answers drift. A correct answer today can degrade next month when new training data introduces conflicting sources. Set up monthly monitoring across all platforms. Track whether corrections hold. Our measurement guide covers the full framework.
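Drift is easiest to catch by comparing this month's grades against last month's. The sketch below assumes you record one grade per platform and query, and flags any answer that was Correct before and is not now. The data and the `find_regressions` helper are hypothetical.

```python
Grades = dict[tuple[str, str], str]


def find_regressions(previous: Grades, current: Grades) -> list[tuple[str, str]]:
    """Return (platform, query) pairs graded Correct before but not now."""
    return sorted(
        key for key, grade in previous.items()
        if grade == "Correct" and current.get(key) != "Correct"
    )


# Hypothetical monthly audit results for a placeholder brand.
last_month = {
    ("Perplexity", "How much does Acme cost?"): "Correct",
    ("ChatGPT", "How much does Acme cost?"): "Incorrect",
    ("Gemini", "What does Acme do?"): "Correct",
}
this_month = {
    ("Perplexity", "How much does Acme cost?"): "Incorrect",
    ("ChatGPT", "How much does Acme cost?"): "Correct",
    ("Gemini", "What does Acme do?"): "Correct",
}

regressions = find_regressions(last_month, this_month)
for platform, query in regressions:
    print(f"Regression on {platform}: {query}")
```

A query missing from the current month also counts as a regression here, which is a deliberate choice so that skipped checks do not pass silently.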

What Happens When Competitors Weaponize AI Against Your Brand?

Competitors can influence AI answers through misleading comparison pages, review manipulation, and content designed to create negative brand associations in training data.

Misleading Comparison Pages

A competitor publishes a "Brand A vs. Brand B" page that misrepresents your features, understates your capabilities, or inflates your pricing. AI citations pull from these pages as if they're neutral third-party comparisons. The fix: publish your own comparison content that's more authoritative, better structured, and optimized for AI extraction.

Review Manipulation

Coordinated negative reviews create a content signal that AI amplifies. The FTC's Operation AI Comply is already cracking down on deceptive AI practices, including fake reviews and manipulated AI outputs. Regulators are catching up, but your best defense right now is volume. Genuine positive reviews and detailed customer success stories dilute the signal from manipulated content.

The Best Defense Is Proactive AEO

The most effective protection against competitor manipulation is building a web presence so authoritative that competitor content can't override the consensus. This is what separates AI Engine Optimization from reactive reputation management. When 15 authoritative sources agree on your brand facts and one competitor page disagrees, AI is far more likely to go with the consensus.

How Do You Measure Whether AI Answers About Your Brand Are Fixed?

Measure AI brand accuracy through share-of-voice benchmarks, citation accuracy scores, and before-and-after tracking across every major AI platform.

Share-of-voice tracking: What percentage of relevant buyer queries return your brand as a recommendation? Track this monthly across ChatGPT, Perplexity, Google AI Mode, Claude, and Gemini. If your brand appeared in 3 out of 20 relevant queries last month and 8 out of 20 this month, that's measurable progress.
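The share-of-voice arithmetic from the example above works out as follows. This is a small sketch, and `share_of_voice` is an illustrative helper name.

```python
def share_of_voice(mentions: int, total_queries: int) -> float:
    """Percentage of tested queries in which the brand was recommended."""
    if total_queries <= 0:
        raise ValueError("total_queries must be positive")
    return 100 * mentions / total_queries


last_month = share_of_voice(3, 20)
this_month = share_of_voice(8, 20)
change = this_month - last_month

print(f"{last_month:.0f}% -> {this_month:.0f}% (+{change:.0f} points)")
# prints "15% -> 40% (+25 points)"
```

Keeping the query set fixed at the same 20 queries is what makes the month-over-month change meaningful.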

Citation accuracy score: Of the times AI mentions your brand, what percentage is factually correct? This is a different metric from share of voice. You can be mentioned frequently but inaccurately. Test 20 common buyer queries. Grade each AI answer as Correct, Partially Correct, Incorrect, or Not Mentioned. Calculate your accuracy rate.
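A sketch of the accuracy calculation, under two assumptions that the text leaves open: answers graded Not Mentioned are excluded from the denominator, since the metric covers only the times AI mentions the brand, and Partially Correct does not count as correct. The sample grades are invented for illustration.

```python
from collections import Counter
from typing import Optional


def citation_accuracy(grades: list[str]) -> Optional[float]:
    """Percentage of brand mentions graded Correct.

    Assumptions: "Not Mentioned" answers are excluded from the
    denominator, and "Partially Correct" is not counted as correct.
    """
    counts = Counter(grades)
    mentioned = len(grades) - counts["Not Mentioned"]
    if mentioned == 0:
        return None  # The brand never appeared, so accuracy is undefined.
    return 100 * counts["Correct"] / mentioned


# Invented grades for 20 buyer queries.
grades = (
    ["Correct"] * 9
    + ["Partially Correct"] * 3
    + ["Incorrect"] * 3
    + ["Not Mentioned"] * 5
)

accuracy = citation_accuracy(grades)
print(f"Citation accuracy: {accuracy:.0f}% of {len(grades)} queries tested")
```

If you would rather give partial credit, weight Partially Correct at 0.5 and apply the same rule every month.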

Before-and-after baseline: The audit from Step 1 becomes your benchmark. Re-run the same queries monthly. Track improvement over time. For retrieval-based platforms, expect improvement within weeks. For training-based platforms, expect gradual gains over 3 to 6 months. Our measurement guide walks through the full framework.

Frequently Asked Questions About Fixing Wrong AI Answers

These are the questions brand owners ask most when they discover AI is misrepresenting their company.

Can I Contact ChatGPT Directly to Fix Wrong Answers?

No. OpenAI does not offer a brand correction portal. The only way to change what ChatGPT says is to change the underlying content it learns from. That means fixing your website, correcting third-party sources, and building enough web authority that future training data reflects the right information.

How Long Does It Take for AI to Update After I Fix My Website?

Retrieval-based platforms like Perplexity can update within days. Google AI Mode typically follows within 1 to 4 weeks. Training-based platforms like ChatGPT (base model) and Claude may take 3 to 6 months until the next training data refresh includes your corrections.

Does Fixing My Website Actually Change What AI Says?

Yes, but only if your website is authoritative enough for AI to prioritize it over competing sources. A pricing page with strong E-E-A-T signals, structured data, and external citations gets weighted higher than a random blog post listing your old pricing.

What If the Wrong Information Is in Training Data I Can't Access?

Build overwhelming web consensus with correct information. When enough authoritative sources agree on the same facts, AI's retrieval systems override stale training data for most queries. This doesn't happen overnight, but it's the most reliable long-term fix.

What to Do Next

You can fix the wrong AI answers buyers encounter about your brand. It's a content engineering problem, not a crisis. Three things to remember:

- Retrieval-based platforms fix fastest. Start with Perplexity and Google AI Mode for quick wins.

- The 6-step playbook is sequential. Audit first. Fix sources second. Build authority third.

- Monitoring prevents backsliding. AI answers drift. Monthly checks catch new errors before they cost you deals.

Start today: Search your brand name on ChatGPT, Perplexity, and Google AI Mode. Screenshot every wrong answer. That's your audit baseline.
