AI Marketing Agents: How Agent-Native Agencies Deliver Incredible 50x Output

AI marketing agents are replacing headcount-limited agency models. Learn the three-layer architecture behind agent-native agencies and a 30-day transformation roadmap.

TL;DR

- Traditional agencies spend 60-70% of revenue on labor and cap output at ~10 pieces per writer per month. The headcount model doesn't scale.

- AI marketing agents aren't chatbots. They're autonomous systems that research, write, generate images, validate SEO, and publish, end to end.

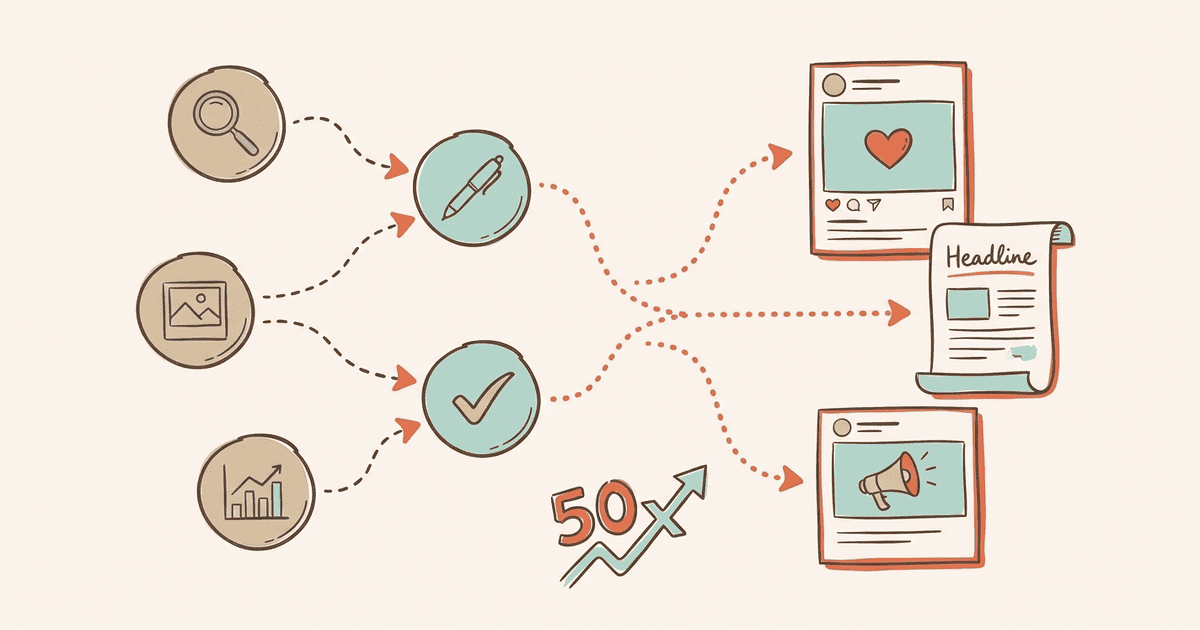

- The agent-native model has three layers: humans set strategy, AI orchestrates, deterministic systems execute. Same expertise, 50x the output.

- Agents maintain brand voice through isolated client knowledge bases: every task references the client's guidelines, terminology, and approved examples.

- The real shift: agents don't just produce faster. They produce content built for AI citations (answer capsules, structured data, entity coverage) and iterate on visibility data each cycle.

The average marketing agency spends 60 to 70 percent of revenue on labor. Every new client demands a new hire. Revenue scales with headcount, and nothing else.

AI tools helped at the margins. They're still assistants, though, not workers. You need a person to prompt, edit, format, and publish every piece. The bottleneck moved; it didn't disappear.

A different model exists, one where humans set strategy and agents handle everything downstream: research, writing, image generation, SEO validation, and publishing. We built Cintra around this model from day one. The result: 50x the output of a traditional agency with the same strategic oversight. Here's how it works.

Why Do Agencies Hit a Headcount Wall?

Traditional agencies scale revenue by adding headcount, spending 60 to 70 percent of revenue on labor and capping output at roughly 10 content pieces per writer per month.

The math breaks down fast at scale. A 20-person content team produces roughly 200 pieces a month at a labor cost of $120,000 to $160,000, based on average salaries of $6,000 to $8,000 per writer per month. Margins compress as client rosters grow. Every dollar of new revenue requires an almost equal investment in human capital. According to Gartner's 2025 CMO Spend Survey, 39% of CMOs are actively cutting agency allocations and labor budgets. The pressure to do more with less has never been higher. Agencies end up trapped: hiring, training, and replacing staff just to maintain baseline operations.
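The arithmetic above is easy to check. The sketch below uses only the figures quoted in this section; the function and variable names are illustrative, and salaries are treated as monthly figures.

```python
def team_economics(writers, pieces_per_writer, salary_low, salary_high):
    """Return monthly output, labor-cost range, and cost-per-piece range."""
    pieces = writers * pieces_per_writer
    cost_low = writers * salary_low
    cost_high = writers * salary_high
    return {
        "pieces_per_month": pieces,
        "labor_cost": (cost_low, cost_high),
        "cost_per_piece": (cost_low / pieces, cost_high / pieces),
    }

# Figures from the paragraph above: 20 writers, ~10 pieces each,
# $6,000 to $8,000 per writer per month.
team = team_economics(writers=20, pieces_per_writer=10,
                      salary_low=6_000, salary_high=8_000)
print(team)

# Doubling headcount doubles output, but cost per piece does not move.
doubled = team_economics(writers=40, pieces_per_writer=10,
                         salary_low=6_000, salary_high=8_000)
print(doubled["cost_per_piece"])
```

Under these assumptions the cost per piece stays at $600 to $800 regardless of team size, which is the headcount wall in one number: adding writers adds volume but never improves unit economics.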

Many agencies assumed generative AI platforms would break this ceiling. The reality? Most teams bolted these tools onto broken workflows. The DIY vs agency AI visibility comparison shows that tools speed up drafting, but they don't eliminate the management overhead. A writer still researches the topic, formulates the prompt, reviews the output, formats the headers, sources the images, and stages the CMS entry. The workflow stays human-centric.

Adding a chatbot to a slow process creates a slightly faster slow process. You still need one person managing every distinct task. If a writer goes on vacation, production stops. If a client doubles their content volume, you hire another writer.

The question for agency owners has shifted. It's no longer whether to use AI. It's whether to bolt isolated tools onto an outdated model, or build a new architecture around autonomous systems.

What Makes AI Marketing Agents Different From AI Tools?

AI marketing agents are autonomous, multi-step systems that research, write, validate, and publish content without human intervention at each step, unlike AI tools that generate one output per prompt.

Think of it as a spectrum of autonomy. A prompt generates a single output: you ask a question, you get a paragraph. A chain links multiple outputs together: the result of one prompt informs the next. Both require constant human supervision. An agent operates differently: it receives a high-level goal and executes the required multi-step workflow on its own. A multi-agent system coordinates specialized agents across different domains.

Here's the operational difference. A standard tool requires you to ask for an outline, review it, ask for a draft, edit it, then manually run an SEO check. An agent works end to end. It researches competitors, drafts content based on that research, generates custom images, validates technical SEO, checks citation signals, and stages the piece for publishing. The human reviews the final product rather than micromanaging each step.

Some commentators dismiss this level of autonomy as marketing fluff. They point to early demos that failed in production. Real agents look different from those experiments. They have observable steps you can audit. Their output is measurable. Their decision logic is deterministic rather than purely probabilistic, running defined workflows rather than winging it with text generation alone. Google DeepMind's research on intelligent AI delegation defines this as "a sequence of decisions involving task allocation that incorporates transfer of authority." Understanding what AI visibility is starts with recognizing this shift from human-prompted text to system-orchestrated output.
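To make "defined workflows with observable steps" concrete, here is a minimal sketch of a goal-driven pipeline that records an audit entry for every step. Everything in it is an assumption for illustration: the step functions are placeholders rather than real research or SEO tooling, and the names (`run_workflow`, `PIPELINE`) are hypothetical, not part of any specific product.

```python
def run_workflow(goal, steps):
    """Run each step in order, carrying state forward and logging every step."""
    state = {"goal": goal}
    audit_log = []
    for name, step in steps:
        state = step(state)
        # Record which fields exist after each step so a reviewer can audit the run.
        audit_log.append({"step": name, "state_keys": sorted(state)})
    return state, audit_log

# Placeholder steps: each takes the current state and returns an extended copy.
def research(state):
    return {**state, "sources": ["competitor-a", "competitor-b"]}

def draft(state):
    text = f"Draft for '{state['goal']}' citing {len(state['sources'])} sources"
    return {**state, "draft": text}

def validate_seo(state):
    # Stand-in check: a real validator would inspect headings, metadata, and so on.
    return {**state, "seo_ok": bool(state["draft"].strip())}

def stage(state):
    status = "staged_for_review" if state["seo_ok"] else "blocked"
    return {**state, "status": status}

PIPELINE = [
    ("research", research),
    ("draft", draft),
    ("validate_seo", validate_seo),
    ("stage", stage),
]

final_state, audit_log = run_workflow("agent-native agencies explainer", PIPELINE)
for entry in audit_log:
    print(entry["step"], "->", entry["state_keys"])
```

The point of the sketch is the shape, not the steps: the human supplies one goal and reviews `final_state`, while the log makes each intermediate decision inspectable after the fact.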

Tools wait for instructions. Agents execute processes. This distinction changes the fundamental unit of work in an agency from "hours billed" to "processes executed."

What Does an Agent-Native Agency Look Like?

An agent-native agency operates on three layers: humans set strategy, AI orchestrates workflows, and deterministic systems execute tasks, delivering 50x the output with the same strategic oversight.

The traditional agency hierarchy relies on junior staff executing tasks managed by senior staff. An agent-native architecture flattens this. The model splits into three layers.

Strategy (Human)

People excel at brand positioning. Humans manage complex client relationships. We define content calendars and creative direction, the decisions that give a brand its unique market angle. In the agent-native model, the human element moves up the value chain, not down.

Orchestration (AI)

This layer acts as the traffic controller. It routes incoming tasks to the correct execution path, selects the right data sources, and maintains context across dozens of client profiles simultaneously. If a task needs competitive research, the orchestrator assigns it to a specialized research agent.

Execution (Deterministic Systems)

This is where the actual work happens. Specialized agents conduct research, write drafts, generate images, run SEO validation, and handle publishing through API integrations.
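The split between orchestration and execution can be sketched in a few lines. In the model described here the routing is done by an AI orchestrator; the dictionary lookup below is a deliberately simple stand-in for it, and the handler names, task fields, and client profile are all hypothetical.

```python
# Execution layer: deterministic handlers (placeholders for real integrations).
def run_research(task):
    return f"research notes for {task['topic']}"

def run_draft(task):
    return f"draft for {task['topic']}"

def run_seo_check(task):
    return f"seo report for {task['topic']}"

HANDLERS = {
    "research": run_research,
    "draft": run_draft,
    "seo_check": run_seo_check,
}

def orchestrate(task, client_profiles):
    """Orchestration layer: attach the client's context, then route the task."""
    kind = task["kind"]
    if kind not in HANDLERS:
        raise ValueError(f"no execution path for task kind: {kind!r}")
    # Only the profile for this task's client is attached to the task.
    enriched = {**task, "profile": client_profiles[task["client"]]}
    return HANDLERS[kind](enriched)

# Strategy layer: a human decides what should be produced and for whom.
profiles = {"acme": {"voice": "short, punchy paragraphs"}}
task = {"kind": "research", "client": "acme", "topic": "AI visibility"}
print(orchestrate(task, profiles))
```

Keeping the handlers as plain functions is the design choice worth noting: whatever decides the route, the work itself runs through code paths that behave the same way every time.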

We see this architecture's impact daily. We produce 50x more content than traditional agencies because our humans focus on strategy while our agents handle execution. The actual performance numbers are on our results page.

This differs from being "AI-assisted." In an assisted model, the human sits at the bottom doing the work and asking AI for help. In an agent-native model, the human sits at the top. Humans don't prompt agents. They set business objectives. Agents figure out the execution.

| Dimension | Traditional Agency | Agent-Native Agency |
|---|---|---|
| Output per writer | ~10 pieces/month | 50x content volume |
| Revenue model | Scales with headcount | Scales with compute |
| Labor cost | 60-70% of revenue | Strategy-focused team only |
| Content type | Human-drafted, human-optimized | Agent-drafted, agent-optimized, human-reviewed |
| Speed to publish | 2-4 weeks per piece | Hours per piece |
| AI visibility optimization | Manual (if any) | Built into every piece |

How Do Agents Maintain Brand Voice Across Multiple Clients?

Agents maintain brand voice through client-specific knowledge bases that store brand guidelines, terminology, audience data, and approved examples, referenced on every task.

Generic AI output sounds generic. It uses the same predictable cadence and vocabulary regardless of the brand. Solving this requires client knowledge isolation. Every brand needs a dedicated profile containing voice guidelines, competitor landscape mapping, approved terminology glossaries, and customer personas. Agents reference this isolated profile on every task they execute for that client.

The system goes beyond rule-following. Agents don't just check boxes on a style guide. They reference approved examples of past content and pattern-match against the brand's best historical assets. If a client prefers short, punchy paragraphs, the agent mimics that structure. If a client bans certain industry buzzwords, the agent checks output against that constraint list before publishing.
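A constraint check of this kind might look like the sketch below. It assumes each client profile holds a banned-term list and a paragraph-length limit; the profile structure, client IDs, and thresholds are invented for illustration and are not a description of any particular system.

```python
import re

# Hypothetical per-client profiles, keyed by client so rules never mix.
CLIENT_PROFILES = {
    "saas-client": {"banned_terms": ["synergy", "game-changing"],
                    "max_paragraph_words": 40},
    "retail-client": {"banned_terms": ["cheap"],
                      "max_paragraph_words": 60},
}

def check_brand_constraints(client_id, text):
    """Return a list of violations of this client's rules (empty means clean)."""
    profile = CLIENT_PROFILES[client_id]
    violations = []
    lowered = text.lower()
    for term in profile["banned_terms"]:
        # Whole-word match so "cheap" does not flag "cheaper".
        if re.search(r"\b" + re.escape(term.lower()) + r"\b", lowered):
            violations.append(f"banned term: {term}")
    paragraphs = [p for p in text.split("\n\n") if p.strip()]
    limit = profile["max_paragraph_words"]
    for i, para in enumerate(paragraphs, start=1):
        count = len(para.split())
        if count > limit:
            violations.append(f"paragraph {i} has {count} words (limit {limit})")
    return violations

sample = "Our game-changing platform creates synergy."
print(check_brand_constraints("saas-client", sample))
print(check_brand_constraints("retail-client", sample))
```

The same sentence fails for one client and passes for the other because each check reads only that client's profile. Matching against approved examples is a harder problem and is not covered by this sketch.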

Choosing the right AI visibility agency means evaluating this isolation capability. For B2B SaaS teams specifically, agent-native approaches are particularly effective because the buyer journey spans multiple AI platforms: ChatGPT, Perplexity, and Copilot each play distinct roles, as detailed in the AI search for B2B guide. Terminology from a software client can't bleed into retail brand content. The architecture must enforce boundaries between client data environments.

Quality control provides the final safety net. Human review at the strategy layer catches voice drift before content reaches a live server. Because the humans aren't exhausted from writing the content themselves, they review with sharper editorial judgment. They act as senior editors, not tired drafters, catching subtle nuances that automated systems occasionally miss.

How Do AI Marketing Agents Improve AI Visibility?

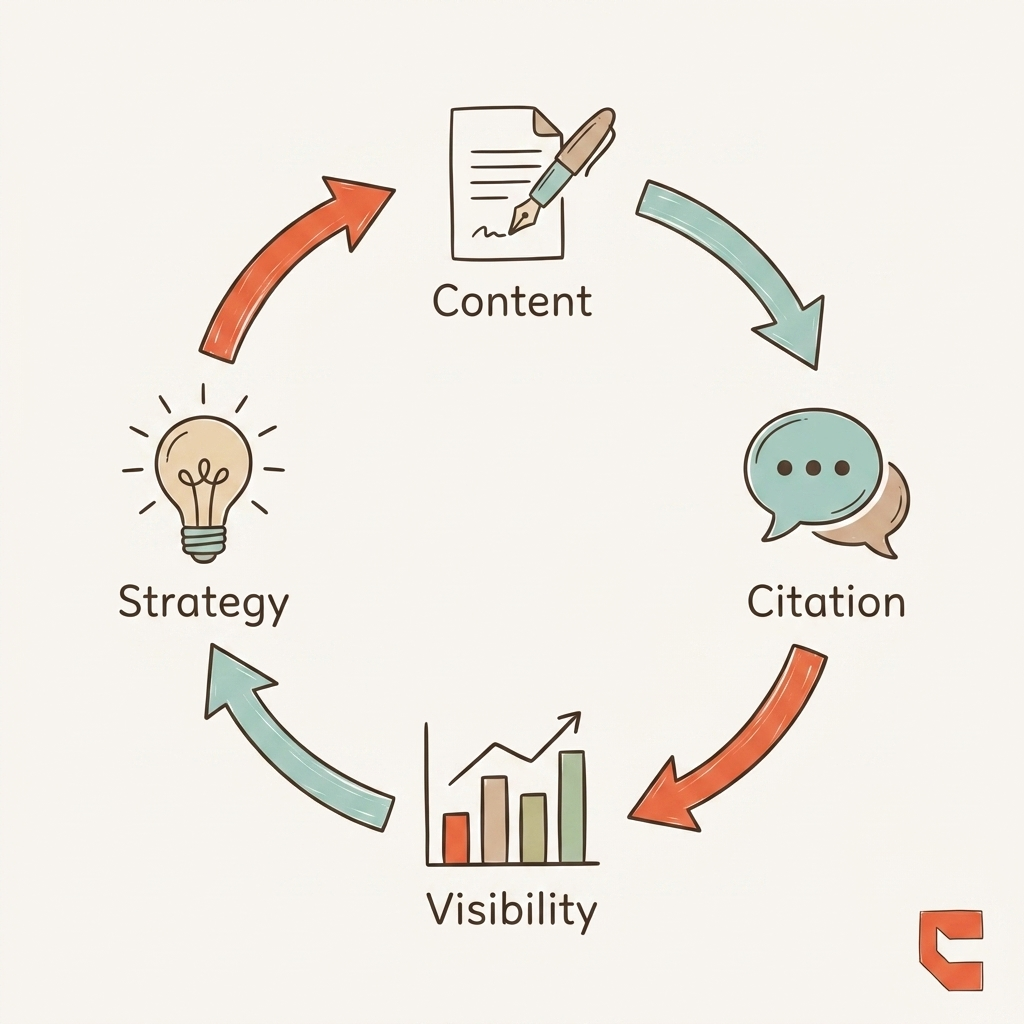

AI marketing agents produce content built for AI-generated citations, checking citation signals, optimizing for answer engines, and iterating based on visibility data in every content cycle.

Producing content faster only matters if people see it. Traditional agencies measure success by output quantity. Agent-native agencies measure success by citations. The difference between speed and performance defines this model.

Every piece goes through a visibility audit before human review. Agents verify structured data schemas, confirm answer capsules are positioned correctly, check that headings use question formats preferred by language models, and validate entity coverage against top-ranking competitors.
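The four checks named above can be approximated with simple heuristics. The sketch below is one possible reading, not a standard: it assumes a piece is represented as a dict with a JSON-LD string and a list of sections, treats "answer capsule positioned correctly" as "the first paragraph under each heading is short", and uses a 40-word limit that is purely an assumption.

```python
import json

def audit_piece(piece, required_entities, capsule_max_words=40):
    """Run heuristic visibility checks and return a findings dict."""
    findings = {}

    # 1. Structured data: the JSON-LD must parse and declare a schema type.
    try:
        data = json.loads(piece["json_ld"])
        findings["structured_data"] = isinstance(data, dict) and "@type" in data
    except (json.JSONDecodeError, TypeError):
        findings["structured_data"] = False

    sections = piece["sections"]

    # 2. Headings: every heading is phrased as a question.
    findings["question_headings"] = all(
        s["heading"].strip().endswith("?") for s in sections
    )

    # 3. Answer capsules: each section opens with a short, direct paragraph.
    findings["answer_capsules"] = all(
        bool(s["paragraphs"])
        and len(s["paragraphs"][0].split()) <= capsule_max_words
        for s in sections
    )

    # 4. Entity coverage: every required entity appears somewhere in the body.
    body = " ".join(p for s in sections for p in s["paragraphs"]).lower()
    findings["missing_entities"] = [
        e for e in required_entities if e.lower() not in body
    ]

    findings["passed"] = (
        findings["structured_data"]
        and findings["question_headings"]
        and findings["answer_capsules"]
        and not findings["missing_entities"]
    )
    return findings

piece = {
    "json_ld": '{"@context": "https://schema.org", "@type": "Article"}',
    "sections": [{
        "heading": "What Makes AI Marketing Agents Different From AI Tools?",
        "paragraphs": [
            "AI marketing agents are autonomous, multi-step systems.",
            "They are built to earn citations in Perplexity and ChatGPT.",
        ],
    }],
}
print(audit_piece(piece, required_entities=["Perplexity", "ChatGPT"]))
```

In practice the required entity list would come from competitor research; here it is passed in by hand.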

You can run this AI content audit manually. It takes hours per article. Agents run it in seconds. They follow the complete AI visibility playbook without skipping steps.

This creates a compounding feedback loop. Agents monitor citation rates across published content. They identify which formats and entities get cited by major language models. That data feeds directly into the next content cycle. If a specific table structure wins a citation in Perplexity, agents apply that structure to future articles in that cluster.
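The feedback loop reduces to two steps: measure citation rate per content format, then prefer the formats that clear a threshold. The sketch below assumes citation observations arrive as `(format, was_cited)` pairs; the sample records, format names, and the 0.5 threshold are invented for illustration.

```python
from collections import defaultdict

def citation_rate_by_format(records):
    """records: iterable of (format_name, was_cited) pairs."""
    totals = defaultdict(lambda: [0, 0])  # format -> [cited, published]
    for fmt, cited in records:
        totals[fmt][1] += 1
        if cited:
            totals[fmt][0] += 1
    return {fmt: cited / published
            for fmt, (cited, published) in totals.items()}

def pick_formats(rates, min_rate=0.5):
    """Formats whose citation rate meets the threshold, best first."""
    winners = [fmt for fmt, rate in rates.items() if rate >= min_rate]
    return sorted(winners, key=lambda fmt: rates[fmt], reverse=True)

# Illustrative observations from one content cycle.
records = [
    ("comparison_table", True),
    ("comparison_table", True),
    ("comparison_table", False),
    ("listicle", False),
    ("listicle", True),
    ("faq", False),
]
rates = citation_rate_by_format(records)
print(rates)
print(pick_formats(rates))
```

The output of `pick_formats` is what would feed the next cycle's briefs. With only a handful of observations per format the rates are noisy, so a real loop would want a minimum sample size before acting on them.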

The results back this up. Optimized content drives a 23x higher conversion rate compared to standard informational traffic. Our client UV Blocker went from zero to 38,000 clicks in six months and doubled their orders during off-season. Hamming.ai saw 8.5x organic traffic growth in 12 weeks. Keywords.am jumped from 3% to 13% visibility. The detailed AI visibility ROI breakdown covers the revenue attribution behind each of these outcomes.

Most industry conversations about AI agents focus on production speed. Most conversations about AI visibility focus on manual optimization. The overlap, agents that optimize for citation at scale, is where the real commercial value sits. And it's wide open.

The 30-Day Agency Transformation Roadmap

Transform your agency in four weeks: audit workflows, build client knowledge bases, deploy pilot agents, and measure output quality and citation rates.

You don't need a multi-year development cycle. You can restructure core operations in a single month.

Week 1: Audit Current Workflows

Map every step in your content pipeline. Identify agent-ready tasks: repetitive, multi-step processes that follow documented rules. Anything with predictable inputs and defined outputs is a candidate. Content production usually represents the fastest path to ROI.

Week 2: Build Client Knowledge Bases

This is the most critical week. Document brand voice profiles. Build terminology glossaries. Map competitor landscapes. Curate approved example content. This data becomes the ground truth your agents reference for every task. Skip this step and agents produce generic text. Invest here and they match your best writer's voice.

Week 3: Deploy Agents on 2-3 Pilot Clients

Don't upend your business overnight. Keep your existing team producing as normal. Let agents run in parallel on the same briefs. Compare results side by side on speed, consistency, and adherence to brand guidelines.

Week 4: Measure and Decide

Track the metrics that matter: output volume per week, content quality scores, citation mention rates (if running AEO), and client approval rates. Once you prove the model works on pilot clients, roll it out across your roster.
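A side-by-side comparison of the pilot is easier to judge when both arms are summarized the same way. The sketch below assumes each piece is logged with a quality score from 0 to 100, a client-approval flag, and a citation flag; the field names and sample data are hypothetical.

```python
def summarize_arm(pieces):
    """Summarize one arm of the pilot (human team or agents)."""
    n = len(pieces)
    if n == 0:
        return {"volume": 0, "avg_quality": None,
                "approval_rate": None, "citation_rate": None}
    return {
        "volume": n,
        "avg_quality": sum(p["quality"] for p in pieces) / n,
        "approval_rate": sum(p["approved"] for p in pieces) / n,
        "citation_rate": sum(p["cited"] for p in pieces) / n,
    }

# Illustrative week of agent output for one pilot client.
agent_pieces = [
    {"quality": 80, "approved": True, "cited": True},
    {"quality": 90, "approved": True, "cited": True},
    {"quality": 85, "approved": True, "cited": False},
    {"quality": 85, "approved": False, "cited": False},
]
summary = summarize_arm(agent_pieces)
print(summary)
```

Run the same function over the human team's pieces for the same briefs, and the roll-out decision becomes a comparison of two dictionaries rather than an impression.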

Frequently Asked Questions About AI Marketing Agents

AI marketing agents raise practical questions about team structure, investment, and brand safety. Here are the most common ones.

Will AI agents replace agency staff?

No. Agents replace repetitive execution tasks. Humans move to higher-value work: strategy, client relationships, and creative direction.

The shift mirrors what happened with programmatic advertising. The technology automated manual buying and bidding. Strategists who understood the broader market became more valuable, not less. Agent-native agencies need fewer junior production staff, but they invest more in senior strategists and creative directors who guide the systems.

What's the investment to go agent-native?

Initial setup ranges from tooling costs to full implementation. The ROI shows within weeks through increased output volume and reduced per-piece production costs.

Consider the hiring math. A senior content writer costs $6,000 to $8,000 per month and produces roughly 10 quality pieces. Agent infrastructure at similar cost produces 50x that volume with higher technical consistency. For teams looking to scale without the overhead, our Enterprise pricing shows how we deploy this infrastructure for larger organizations.

Can agents maintain brand voice across multiple clients?

Yes, through client-specific knowledge bases that store brand guidelines, terminology preferences, and approved examples, referenced on every task.

This is the hardest technical challenge in the space. Without dedicated knowledge bases per client, autonomous systems default to generic voice. The quality of input data directly determines output quality. When you isolate client data and use style transfer against approved examples, the consistency issues resolve.

How do AI marketing agents improve AI visibility specifically?

Agents build citation signals into every content piece (answer capsules, structured headings, entity coverage) and iterate based on visibility data.

Traditional content production focuses on search volume and keyword density. Agent-native production bakes AI visibility into the workflow from the start. Every piece is audited for AEO compliance before publishing. Your content is structurally designed to be cited by Perplexity, ChatGPT, and AI Overviews from the moment it leaves the drafting phase.

Conclusion

The agency model built on headcount isn't broken; it's simply out of runway. Every new client that walks in the door creates a hiring conversation. Every piece of content requires a human to research, prompt, edit, and publish. AI tools helped at the edges. Agent-native architecture removes the ceiling entirely.

The shift is straightforward: humans own strategy, agents execute everything downstream. Client knowledge bases enforce brand voice across every content piece. Visibility audits run automatically before human review, not as an afterthought. Content is structurally designed for AI citations from the first draft, not retrofitted after the fact.

According to a March 2026 Gartner survey, 63% of marketing leaders are actively evaluating or deploying AI agent infrastructure this year. Agencies that make this transition now won't just produce more output. They'll produce content that compounds over time, earning AI citations, driving brand discovery, and delivering measurable revenue impact each cycle.

The 30-day roadmap is your starting point. Map your workflows in week one. Build client knowledge bases in week two. Deploy your first pilot agents in week three. Measure and scale in week four.

The gap between headcount-bound agencies and agent-native agencies is widening every quarter. The brands that close it now lock in a structural advantage that gets harder to replicate over time.

See how we've applied the agent-native model for our clients, and what results it's driven across SaaS, ecommerce, and professional services.

“We went from 200 visitors/day to 1,900 visitors/day and 40% of demos are from AI search.”

Sumanyu Sharma · CEO, Hamming.ai

“Cintra helped me go from 3k to 7.5k daily traffic and doubled weekly orders in 1.5 months.”

Russ Coulon · Owner, UV Blocker

“We saw a lift from 3% to 13% visibility in the first 2 weeks, and organic traffic hit its highest ever.”

Ash Metry · Founder, Keywords.am
