How AI Models Read Websites: ChatGPT, Claude, Perplexity & Gemini Compared

How AI models read websites differs by platform. See how ChatGPT, Claude, Perplexity, and Gemini fetch, filter, and cite your content – with a side-by-side comparison table.

TL;DR

How AI models read websites varies wildly by platform. ChatGPT reads a Bing-filtered version. Claude reads a paraphrased markdown summary. Perplexity fetches your page live. Gemini uses Google Search grounding. Same website, five different versions. Optimize for the common denominators: clean HTML, strong headings, and front-loaded answers.

Understanding how AI models read websites is now a baseline requirement for any brand investing in content. ChatGPT never sees your actual webpage, it reads a Bing-filtered version. Claude reads a paraphrased markdown summary. Perplexity might be the only model that fetches your page directly.

Brands optimize for "AI" without knowing each model processes content differently. Content that performs well on one model gets ignored by another. Schemas, meta tags, and JavaScript are all handled differently across platforms.

We break down the full content pipeline for five major AI products, ChatGPT, Claude (Code and claude.ai), Perplexity, and Gemini. You'll see exactly what gets fetched, what gets filtered, and what gets cited.

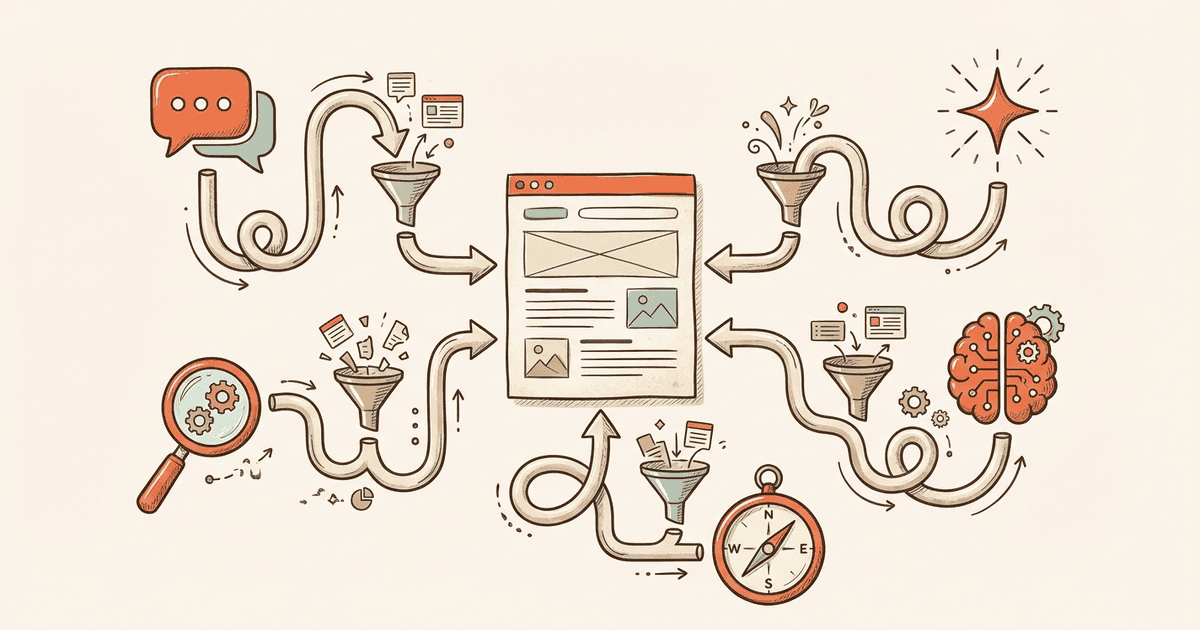

How Does Each AI Model Access Web Content?

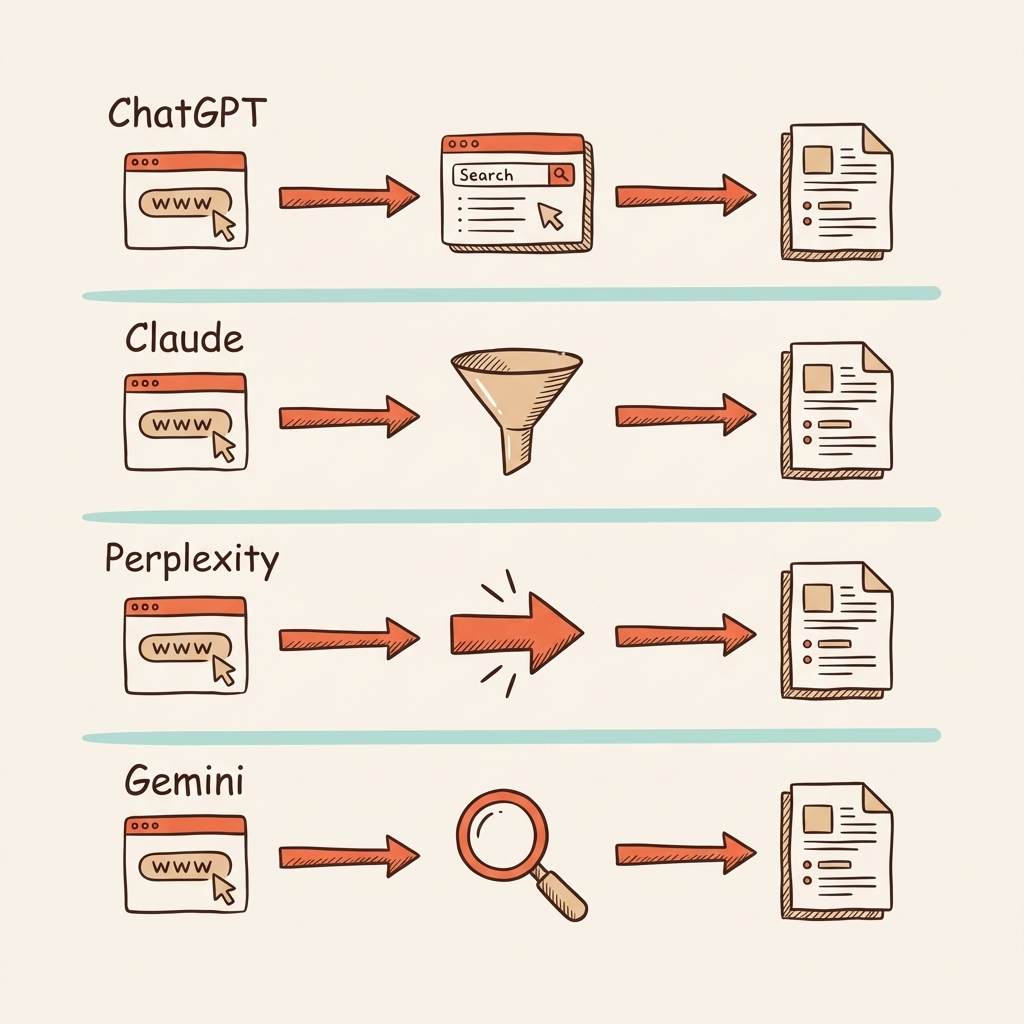

Each AI model uses a different retrieval pipeline, from Bing's index to live crawling to Google Search grounding, meaning the same webpage looks different to every model.

ChatGPT relies on Bing's search index. If your site isn't in Bing, ChatGPT can't see it. The OAI-SearchBot crawler surfaces content for the index. Many brands only optimize for Google and ignore Bing, leaving them invisible to ChatGPT users. Getting recommended by ChatGPT starts with Bing Webmaster Tools.

Claude Code uses a 5-layer pipeline: HTTP fetch, Turndown HTML-to-markdown conversion, 100,000-character truncation, Haiku model paraphrasing, and a 50,000-character tool result budget. Only 107 preapproved documentation sites (react.dev, docs.python.org, etc.) bypass the Haiku step. For everyone else, exact words rarely survive.

Claude.ai takes a different approach. It uses encrypted snippets from server-side search, about 3,500 to 4,000 bytes of plaintext per result. The encrypted content is query-dependent: a different prompt produces different text fragments from the same page. Learn the tactics for getting cited by Claude.

Perplexity fetches pages live using on-demand crawling. No static index. It combines BM25 lexical search with vector embeddings for semantic matching. This real-time approach is Perplexity's key differentiator, it reads what your site publishes right now. See how to get cited by Perplexity.

Gemini uses Google Search grounding. The model generates search queries automatically, processes results, and synthesizes answers with structured grounding metadata. AI Overviews now appear in over 85% of informational queries. Optimizing for AI Overviews is critical for Gemini visibility.

What Gets Filtered Out Before the Model Sees It?

See where you rank across all AI answer engines.

Enter your domain and we'll scan your citation rate across ChatGPT, Perplexity, and Google AI.

Prefer to talk? Book a free 30-min call

Every model filters web content before processing. Claude strips JSON-LD and meta tags. ChatGPT applies safe-browsing guardrails. Perplexity ranks and reranks passages by authority scoring.

Claude's Turndown conversion strips JSON-LD data, schema markup, meta descriptions, image alt-text, Open Graph tags, and CSS. Navigation text survives, your site menus then compete with article content for the model's attention. Brands investing in schema markup should know that Claude cannot see any of it.

The Haiku layer adds another barrier. It enforces a 125-character quote maximum and mandates paraphrasing for non-preapproved domains. Your exact words rarely reach the final model. This is one of the most aggressive ways AI models read websites, by rewriting them first. Marketing copy loses its persuasive edge. Technical specifications can lose precision.

ChatGPT applies OpenAI safe-browsing guardrails. The system gathers 20 to 30 Bing results, then an internal mclick function scrapes a smaller subset. Not all results make it through this selection step. Your page might rank well in Bing but still get excluded during this secondary filtering.

Perplexity applies authority scoring, freshness signals, and cross-source validation. Content gets reranked for answer extraction. Citations are embedded during generation, not added in post-processing. Low-authority domains and thin content get filtered before synthesis begins.

Gemini 3 uses query fan-out to perform multiple parallel searches. It filters results for credibility and relevance, then creates custom visual layouts with tables, grids, and images. If your data lacks clear structure, it fails these internal filters.

What Content Actually Reaches the AI Model?

After filtering, the way AI models read websites produces vastly different results, from paraphrased markdown summaries to encrypted snippets to full-text passages ranked by semantic relevance.

Claude Code receives a Haiku-paraphrased markdown summary capped at 50,000 characters. Original phrasing is gone. Preapproved sites keep verbatim content up to 100,000 characters. The 15-minute cache means first impressions matter, whatever version gets cached serves all queries in that window.

Claude.ai processes encrypted snippets of roughly 3,500 to 4,000 bytes per result. Fragment selection is keyword-proximity-driven and query-dependent. The same webpage produces different fragments for different prompts.

ChatGPT reads scraped content from selected Bing results, synthesized with publisher partnership data. OpenAI maintains deals with AP, Reuters, and the Financial Times. These partnerships give certain sources a pipeline advantage over standard web content.

Perplexity reads full passages from live-fetched pages, processed through Retrieval-Augmented Generation with hybrid retrieval. It routes across GPT-4, Claude, LLaMA, and Mistral, optimizing for each query type. The most generous with source content among all models.

Gemini processes Google Search results with detailed grounding metadata, source URIs, page titles, and character-level citation mapping. This precision mapping is unique to Gemini and creates the most granular citation system available.

| Feature | ChatGPT | Claude (Code) | Claude.ai | Perplexity | Gemini |

|---|---|---|---|---|---|

| Retrieval | Bing index | Direct HTTP fetch | Server-side search | Live on-demand crawl | Google Search grounding |

| Processing | mclick scraping from 20-30 results | Turndown to Haiku paraphrase | Encrypted snippets | RAG with hybrid retrieval | Query fan-out + synthesis |

| Content limit | Subset of Bing results | 50K chars (after Haiku) | ~3.5-4KB per result | Full passages (reranked) | Search results + metadata |

| Schema/metadata | Via Bing index | Stripped by Turndown | Not available | Indexed if crawled | Via Google index |

| Citation style | Sidebar + inline links | No web citations (Code) | Encrypted index refs | Inline footnotes + snippets | Character-level mapping |

| Unique advantage | Publisher partnerships | Preapproved site fast-path | Query-dependent fragments | Real-time freshness | Structured grounding metadata |

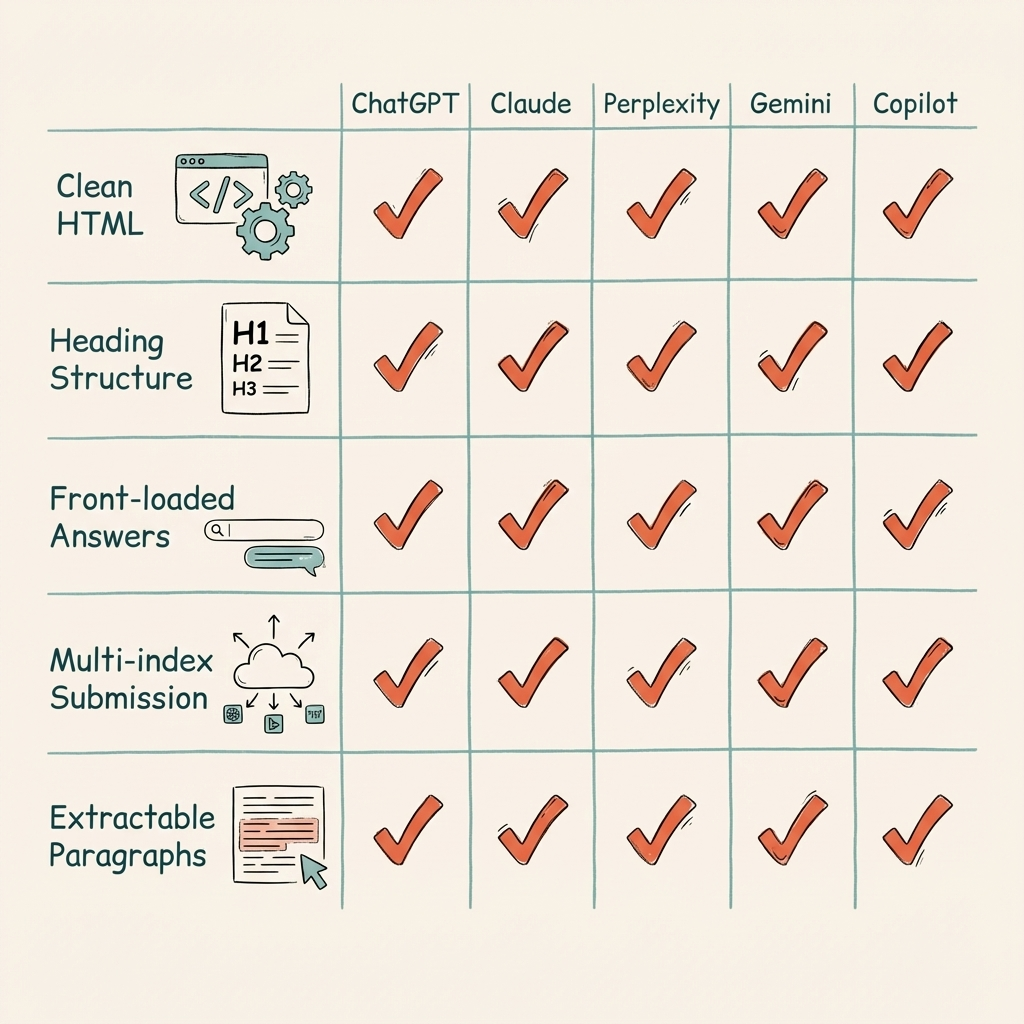

How Do You Get Cited Across All Five Models?

Despite differences in how AI models read websites, all five reward the same content fundamentals: clean HTML, strong heading structure, front-loaded answers, and authoritative sources.

Structure for markdown conversion. Clean semantic HTML with proper heading hierarchy (H1, H2, H3) survives Turndown conversion and helps every model parse your content. This is the single highest-ROI optimization, it benefits all five models.

Front-load answers. Put the direct answer in the first 30 words after each heading. Claude's snippet selection is keyword-proximity-driven. Perplexity's extractive pipeline grabs early passages. Gemini's character-level mapping favors leading content. Models extract the first clear answer they find.

Don't rely on schema alone. Claude strips JSON-LD entirely. ChatGPT and Gemini see schema through their search indexes, an indirect signal. Put critical information in the content body, not just structured data.

Get indexed everywhere. ChatGPT needs Bing indexing. Gemini needs Google indexing. Perplexity runs its own crawler. Submit your sitemap to all three search engines. Skipping Bing Webmaster Tools means invisibility on ChatGPT.

Write for extraction. Short paragraphs under 80 words. Concrete data points. Comparison tables. These elements increase citation selection across every model. Perplexity's inline footnotes link to expandable snippets, extractable content gets more prominent placement.

Frequently Asked Questions About How AI Models Read Websites

These are the most common questions about how AI models read websites, covering schema markup, JavaScript rendering, crawler blocking, and citation differences across ChatGPT, Claude, Perplexity, and Gemini.

Does schema markup help with AI citations?

Schema markup helps indirectly through Bing and Google indexes. Claude strips JSON-LD during HTML-to-markdown conversion, so put key information in body content too.

Gemini and ChatGPT access schema through their search engine partners. Perplexity indexes schema if it crawls your page directly. The safest strategy: duplicate structured data as visible text content.

Why does my content appear in Perplexity but not ChatGPT?

Perplexity fetches pages live via on-demand crawling, while ChatGPT depends on Bing's index. If Bing hasn't indexed your page, ChatGPT cannot access it.

Submit your site to Bing Webmaster Tools and verify OAI-SearchBot is crawling your pages. Perplexity acts independently, but ChatGPT stays tethered to Bing.

Does JavaScript content get read by AI models?

Client-side JavaScript remains a visibility risk for AI models. Most models fetch server-rendered HTML, so critical content should not depend on JavaScript to render.

Pre-rendering or server-side rendering ensures your content is available to all AI crawlers. If a model must execute scripts to read your text, it will likely move to a competitor's page.

How many sources does each AI model cite per answer?

ChatGPT cites five to eight sources with sidebar links. Perplexity shows four to ten inline footnotes with expandable snippets. Gemini provides character-level source mapping.

Claude Code does not provide web citations. Claude.ai uses encrypted snippet references. Each platform handles attribution differently, changing how users verify information.

Can I block specific AI models from reading my site?

Yes. Block OAI-SearchBot for ChatGPT, PerplexityBot for Perplexity, and Googlebot for Gemini-powered AI Overviews. Each model respects its own crawler directives.

Blocking crawlers also removes you from those models' citations. Consider the trade-off carefully, protecting your data means sacrificing brand visibility in AI search.

Conclusion

Each AI model runs a different content pipeline with distinct retrieval, filtering, and citation methods. There is no one-size-fits-all AI optimization, but five universal tactics work across every model.

- Claude strips schemas, meta tags, and paraphrases your content through a smaller model

- ChatGPT depends on Bing's index, skip Bing Webmaster Tools and you're invisible

- Perplexity offers the most direct pipeline with live fetching and inline citations

- Gemini's character-level citation mapping is the most precise system available

- Clean HTML, strong headings, and front-loaded answers work across all five models

Start here: Audit whether your site is indexed in both Google and Bing. Submit to Bing Webmaster Tools if you haven't. This single step closes the content quality gap in AI search.

See where your brand appears in AI search

Scan ChatGPT, Perplexity, and Google AI across buyer-intent queries, instantly, no sign-up.

Find out if AI is sending buyers to your competitors.

We audit your AI visibility across ChatGPT, Perplexity, and Google AI –and show you exactly where you rank and what to fix.

“We went from 200 visitors/day to 1,900 visitors/day and 40% of demos are from AI search.”

Sumanyu Sharma · CEO, Hamming.ai

“Cintra helped me go from 3k to 7.5k daily traffic and doubled weekly orders in 1.5 months.”

Russ Coulon · Owner, UV Blocker

“We saw a lift from 3% to 13% visibility in the first 2 weeks, and organic traffic hit its highest ever.”

Ash Metry · Founder, Keywords.am

Related Articles

AI Search Prompt Optimization: Why Buyers Tell AI More Than Google

AI search prompt optimization starts with understanding the 17x word gap. Learn why buyers share more with AI…

How AI Engines Choose Sources: 8-Factor Framework

How AI engines choose sources to cite: 8 weighted factors from domain authority to entity density, plus platfo…

Google AI Mode Link Updates: What Five Changes Mean for Your Brand's AI Visibility

Google AI Mode link updates add five new outbound link features. We analyze what changed on May 6, how each up…