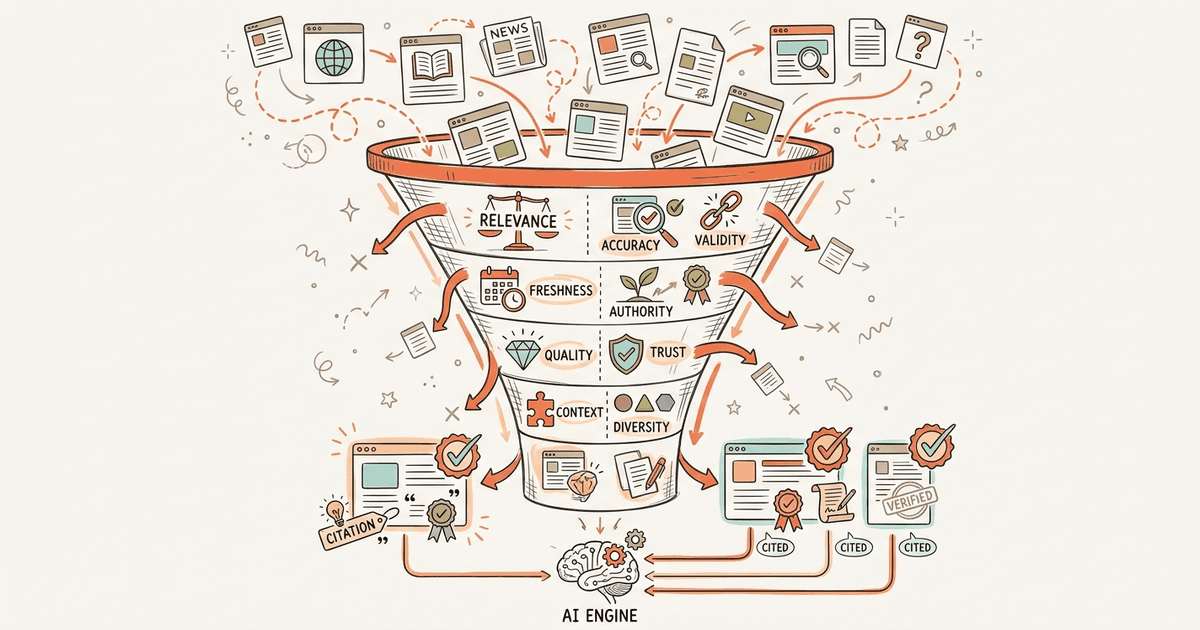

How AI Engines Choose Sources: 8-Factor Framework

How AI engines choose sources to cite: 8 weighted factors from domain authority to entity density, plus platform-specific differences across ChatGPT, Perplexity, and AI Overviews.

TL;DR

AI engines retrieve 50+ candidate pages per query but cite only 3-8. Getting found is step one. Getting selected is the game. We synthesized data from 3.25 billion citations into an 8-factor weighted framework covering domain authority, answer-first structure, entity density, content freshness, and four more factors. Only 11% of domains overlap between ChatGPT and Perplexity, so optimization must be platform-specific.

75% of domains cited by GPT-5.4 appear in neither Google nor Bing results. The AI citation index is a separate world, and your Google ranking does not guarantee a seat at the table.

Most brands solve the wrong problem. They focus on getting retrieved (technical access) and stop there. But retrieval is just step one. AI engines pull dozens of candidate pages per query, then pick a handful to actually cite. The selection criteria look nothing like traditional search ranking factors.

We synthesized data from Profound's 3.25 billion citation study, Passionfruit's model comparison analysis, GEOL.ai's intervention case study, and the Princeton GEO paper into an 8-factor weighted framework for citation selection, plus a platform comparison showing how ChatGPT, Perplexity, and AI Overviews select differently.

What Is the Two-Stage AI Citation Pipeline?

AI citation works in two stages: retrieval (can the engine find your page?) and selection (does the engine choose to cite it over 50 other candidates?).

This distinction separates visible brands from invisible ones. Retrieval is the baseline technical requirement: robots.txt access, rendering speed, and crawl budget. Our guide on how AI models read your website covers this foundational step.

Selection dictates actual visibility. Once retrieved, the engine evaluates content against eight factors to decide which sources to cite. This competitive phase is where most brands fail.

Consider the numbers. 47% of ChatGPT-cited domains rank on Google, meaning 53% don't. For GPT-5.4 thinking mode, 75% of cited domains appear in neither Google nor Bing. The AI citation index operates as a completely separate world from traditional search engine optimization.

So what makes an AI engine pick Source A over Source B? Here are the 8 factors, weighted by evidence strength.

What Are the 8 Factors That Determine AI Citation Selection?

Eight factors determine AI citation selection: domain authority, answer-first structure, entity density, content freshness, provenance signals, structured data, consensus positioning, and statistical evidence.

The selection phase is not random. It follows distinct patterns across billions of queries. We broke down the data into a hierarchical framework based on empirical evidence strength.

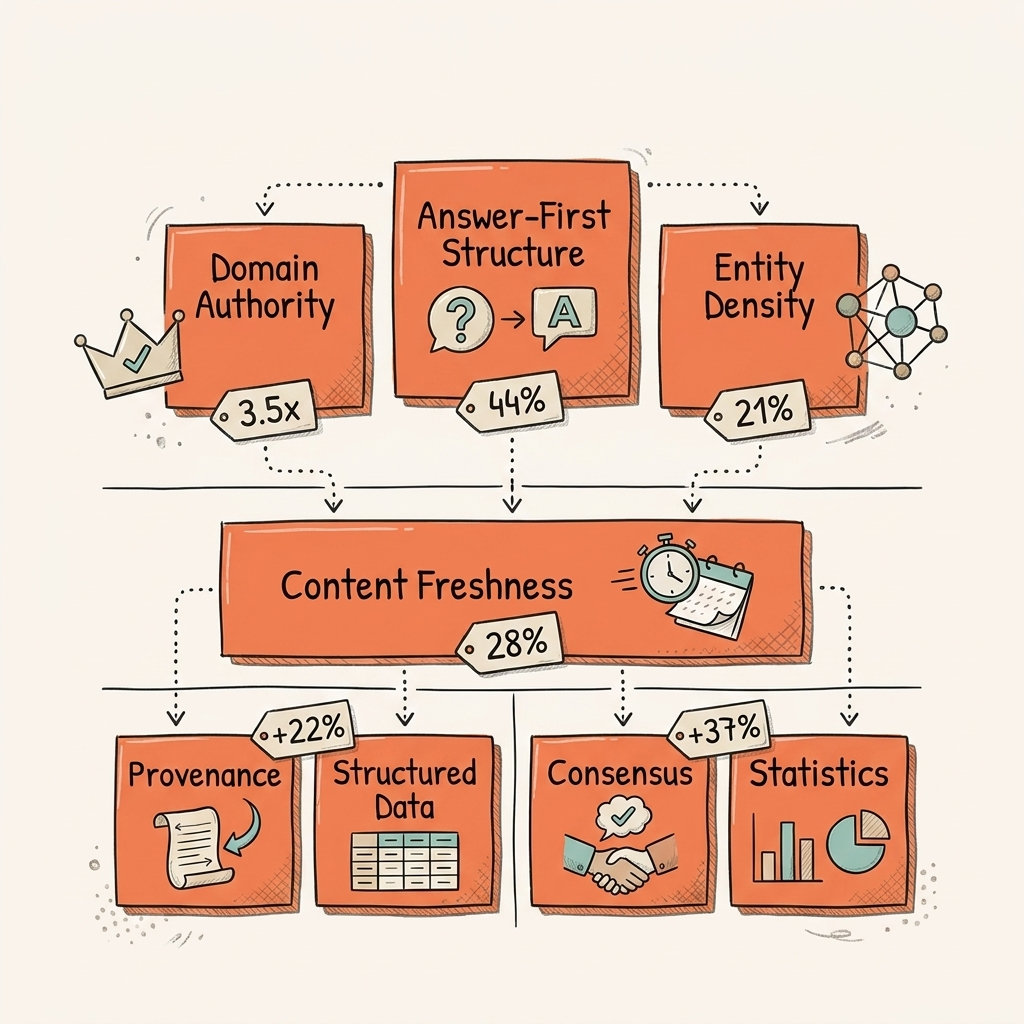

Factor 1: Domain Authority and Third-Party Validation (Weight: High)

Sites with 32,000+ referring domains are 3.5x more likely to be cited than those under 200. Brand search volume holds a 0.334 correlation with citation frequency, making it the strongest single predictor identified so far.

Third-party validation extends beyond links. Profiles on G2, Capterra, Trustpilot, and Yelp increase citation probability by 3x. Earning backlinks for AI visibility requires building broad brand authority, not just raw link counts. The engine looks for distributed trust signals across the web.

Factor 2: Front-Loaded, Answer-First Structure (Weight: High)

44.2% of citations come from the first 30% of content. Models do not read like humans. They extract information from the top of the page first. Winning content averaged a Flesch-Kincaid reading grade of 16 compared to 19.1 for lower performers.

AI engines isolate answer chunks from early content. If your best material sits at the bottom of the page, it rarely gets cited. Understanding the content quality gap in AI search shows why front-loading answers is non-negotiable.
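
One rough way to check front-loading is to measure where a key answer sentence first appears relative to the page length. The sketch below assumes you already have the page's plain text and the answer phrase you care about. The function names and the character-based position measure are our own illustration, not how any engine measures it.

```python
from typing import Optional


def answer_position(text: str, answer: str) -> Optional[float]:
    """Return where `answer` first appears, as a fraction of the text length.

    0.0 means the very start of the page. None means the phrase was not found.
    Position is measured in characters, which is an assumption of this sketch.
    """
    idx = text.lower().find(answer.lower())
    if idx == -1:
        return None
    return idx / max(len(text), 1)


def is_front_loaded(text: str, answer: str, cutoff: float = 0.30) -> bool:
    """True when the answer starts within the first `cutoff` share of the text."""
    pos = answer_position(text, answer)
    return pos is not None and pos <= cutoff
```

The 0.30 default mirrors the "first 30% of content" figure above. Run it against your most important answer per page, not every sentence.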

Factor 3: Entity Density and Clarity (Weight: High)

Heavily cited text averages a 20.6% entity density. This metric measures the percentage of named entities (people, products, organizations, specific concepts) versus filler words. GEOL.ai improved a test site's citation rate from 9% to 18% purely through entity standardization.

Optimizing entity SEO for AI search creates a clear map for the model. High entity density removes ambiguity and replaces pronouns and generic nouns with concrete referents. The engine cites sources that provide the highest concentration of unambiguous facts.
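
Entity density can be approximated as the share of tokens that belong to a named entity. The sketch below assumes you supply your own list of entity names (a hypothetical `entities` argument) instead of running a named-entity recognizer, and its tokenizer is deliberately simple. The studies cited above may compute the metric differently.

```python
import re
from typing import List

# Words, optionally joined by ".", "'" or "-" (e.g. "GEOL.ai", "answer-first").
TOKEN_RE = re.compile(r"\w+(?:[.'-]\w+)*")


def tokenize(text: str) -> List[str]:
    """Lowercased word tokens."""
    return [t.lower() for t in TOKEN_RE.findall(text)]


def entity_density(text: str, entities: List[str]) -> float:
    """Fraction of tokens in `text` covered by any entity in `entities`."""
    tokens = tokenize(text)
    if not tokens:
        return 0.0
    covered = [False] * len(tokens)
    for entity in entities:
        ent = tokenize(entity)
        n = len(ent)
        if n == 0:
            continue
        # Mark every position where the entity's token sequence occurs.
        for i in range(len(tokens) - n + 1):
            if tokens[i:i + n] == ent:
                for j in range(i, i + n):
                    covered[j] = True
    return sum(covered) / len(tokens)
```

For example, `entity_density("Perplexity cites Reddit more often than ChatGPT does", ["Perplexity", "Reddit", "ChatGPT"])` counts 3 entity tokens out of 8, or 37.5%.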

Factor 4: Content Freshness (Weight: Medium-High)

Pages updated within two months earn 28% more AI citations than older content. The data reveals a harsh 3-month citation cliff where visibility drops sharply after 90 days. On top of that, 40-60% of cited sources rotate monthly.

This velocity makes AI search fundamentally different from traditional organic search. Managing AI citation decay requires continuous content updates. Evergreen content no longer holds static positions for years. The engines actively seek the most recent validation of facts.
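
A freshness check can be as simple as bucketing pages by days since the last update. This is a minimal sketch: the 60-day threshold is our approximation of "two months", the 90-day threshold follows the citation cliff described above, and the status labels are made up for illustration.

```python
from datetime import date


def days_since_update(last_updated: date, today: date) -> int:
    """Whole days between the last update and `today`."""
    return (today - last_updated).days


def freshness_status(
    last_updated: date,
    today: date,
    fresh_days: int = 60,
    cliff_days: int = 90,
) -> str:
    """Bucket a page as 'fresh', 'refresh_soon', or 'stale'."""
    age = days_since_update(last_updated, today)
    if age <= fresh_days:
        return "fresh"
    if age <= cliff_days:
        return "refresh_soon"
    return "stale"
```

Pass `today` explicitly (for example `date.today()`) so the check stays reproducible in scheduled audits.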

Factor 5: Provenance Signals (Weight: Medium)

Named authors, visible publication dates, and outbound references to primary sources all matter. GEOL.ai found that provenance signals increased selection likelihood significantly when paired with entity clarity.

These elements function as the AI version of E-E-A-T. Models look for structural proof of human authorship and editorial standards. Anonymous content without dates triggers quality filters. Outbound links to original research prove the content is grounded in verifiable sources.

Factor 6: Structured Data (Weight: Medium)

JSON-LD implementations reinforcing canonical identity help the model categorize content. Article, Organization, Person, and FAQPage schema clarify the page's purpose. But structured data alone cannot compensate for weak editorial quality.

Think of it as an amplifier, not a standalone fix. Using schema markup for AI visibility ensures the model correctly parses your entities. It translates on-page text into machine-readable formats that reduce ambiguity during retrieval.
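
To make the schema point concrete, here is a sketch that builds Article JSON-LD with a named author, a publisher, and both dates. The function name and every value passed in are placeholders. The property names (`headline`, `author`, `publisher`, `datePublished`, `dateModified`) come from schema.org, but which properties any given AI engine actually reads is not something the data above tells us.

```python
import json


def article_jsonld(
    headline: str,
    author_name: str,
    publisher_name: str,
    publisher_url: str,
    date_published: str,
    date_modified: str,
) -> str:
    """Return Article JSON-LD as a string. Dates are ISO 8601 (YYYY-MM-DD)."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "publisher": {
            "@type": "Organization",
            "name": publisher_name,
            "url": publisher_url,
        },
        "datePublished": date_published,
        "dateModified": date_modified,
    }
    return json.dumps(data, indent=2)
```

Embed the returned string in a `<script type="application/ld+json">` tag, and keep the author and organization names identical to how they appear in the visible page text.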

Factor 7: Consensus Positioning (Weight: Medium)

Small pools of 3-8 domains dominate repeatedly per topic. Single pages rarely win isolated queries consistently. Multi-platform presence increases citation probability. Brands present on G2, Capterra, Trustpilot, and Yelp get cited 3x more.

This is consensus stacking. You need to be in the cluster of repeatedly cited domains. A comprehensive approach to answer engine optimization requires reputation reinforcement across platforms. The model looks for agreement among trusted sources.
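
To see which domains form the repeatedly cited cluster for a topic, you can tally cited domains across several prompt runs. The sketch below assumes you have already collected the citation URLs from each run by hand or with your own tooling. The helper names are hypothetical, and each domain counts at most once per run.

```python
from collections import Counter
from typing import Iterable, List
from urllib.parse import urlparse


def domain_of(url: str) -> str:
    """Lowercased hostname without a leading 'www.', or '' if none is found."""
    host = urlparse(url).hostname or ""
    return host[4:] if host.startswith("www.") else host


def cited_domain_counts(runs: Iterable[List[str]]) -> Counter:
    """Count in how many runs each domain was cited at least once."""
    counts: Counter = Counter()
    for urls in runs:
        domains = {domain_of(u) for u in urls}
        domains.discard("")  # skip URLs with no parseable hostname
        counts.update(domains)
    return counts
```

Calling `cited_domain_counts(runs).most_common(8)` then gives a first view of the cluster you need to join.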

Factor 8: Statistics and Quotations (Weight: Medium)

Including statistics increases AI visibility by 22%. Using quotations boosts citation likelihood by 37%. The Princeton GEO paper (KDD 2024, 10,000 queries across 9 sources) found that large language models strongly favor quantitative evidence and extractable quotes.

You supply the raw materials the engine uses to build its answers. More AI search statistics support this finding across multiple model generations.

| Factor | Evidence Strength | Key Metric | Deep-Dive |
|---|---|---|---|
| Domain Authority | Strong | 3.5x at 32K+ referring domains | Backlinks for AI |
| Answer-First Structure | Strong | 44.2% from first 30% of content | Content Quality Gap |
| Entity Density | Strong | 20.6% average in cited text | Entity SEO |
| Content Freshness | Strong | 28% more within 2 months | Citation Decay |
| Provenance Signals | Moderate | 2x improvement (GEOL.ai) | E-E-A-T for AI |
| Structured Data | Moderate | Amplifier, not standalone | Schema Markup |
| Consensus Positioning | Moderate | 3-8 domains dominate per topic | Answer Engine Optimization |
| Statistics & Quotations | Moderate | +22% stats, +37% quotations | AI Search Statistics |

These 8 factors don't apply equally across platforms. ChatGPT, Perplexity, and AI Overviews each weight them differently.

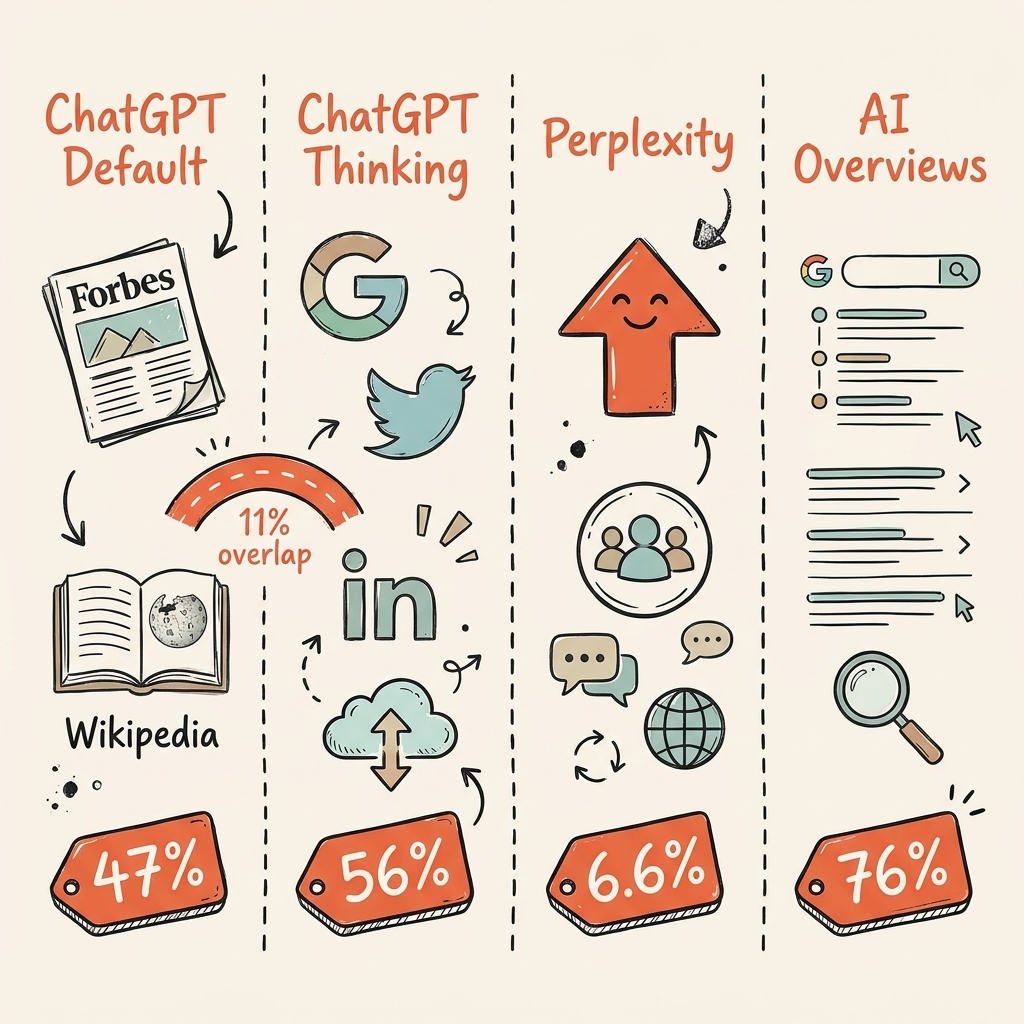

How Do Different AI Platforms Select Sources?

Each AI platform selects sources differently. Only 11% of cited domains appear in both ChatGPT and Perplexity, making platform-specific optimization essential.

The AI search landscape is fragmented. Strategies that dominate one platform often fail on another. You need to tailor your approach based on the specific engine's architecture and retrieval behavior.

ChatGPT Default (GPT-5.3)

Relies on "kingmaker" intermediaries. Forbes, TechRadar, and Reddit account for its top citations. It trusts aggregators over individual brands. Currently, 47% of its cited domains rank on Google. Wikipedia alone constitutes 47.9% of its top-10 citations.

ChatGPT Thinking Mode (GPT-5.4)

Completely different behavior from the same platform. It runs 8.5 queries per prompt and cites brand websites 56% of the time compared to just 8% in default mode. 75% of domains cited by this mode do not appear in Google or Bing. The thinking mode conducts deep, independent research.

Perplexity

Operates via real-time web crawling with transparent citations. It boasts the highest Reddit citation rate at 6.6%, compared to ChatGPT's 1.8%. Perplexity places much stronger emphasis on community content and recent discussions.

Google AI Overviews

Maintains the strongest correlation with traditional search. 76.1% of its cited URLs rank in Google's top 10. It shows the highest social citation rate at 15.3%, with Reddit making up 21% of those social citations. Mastering AI Overviews optimization requires maintaining strong traditional rankings.

Cross-Platform Overlap

Only 11% of domains are cited by both ChatGPT and Perplexity. Optimization must be platform-aware. A single unified strategy will leave you invisible on most engines.
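
You can measure overlap for your own category by comparing the sets of domains each platform cites. The sketch below uses shared domains divided by all distinct domains (Jaccard overlap). That definition is our assumption: the studies behind the 11% figure may calculate overlap differently.

```python
from typing import Set


def domain_overlap(a: Set[str], b: Set[str]) -> float:
    """Shared domains as a fraction of all distinct domains in `a` and `b`."""
    union = a | b
    if not union:
        return 0.0
    return len(a & b) / len(union)
```

For example, if ChatGPT cites `{"a.com", "b.com", "c.com"}` and Perplexity cites `{"c.com", "d.com"}`, one of four distinct domains is shared, so the overlap is 25%.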

| Behavior | ChatGPT Default | ChatGPT Thinking | Perplexity | Google AI Overviews |
|---|---|---|---|---|
| Queries per prompt | 1 | 8.5 | Real-time crawl | Google index |
| Brand website citation | 8% | 56% | Varies | 76.1% from top 10 |
| Top source type | Wikipedia/Forbes | Brand sites | Community + authority | Google-ranked pages |
| Reddit citation share | 1.8% | Lower | 6.6% | 2.2% (21% of social) |
| Google ranking correlation | 47% | 25% | Lower | 76.1% |

Why Does the Retrieval vs. Selection Distinction Matter?

Retrieval is technical access: robots.txt, rendering speed, crawl budget. Selection is citation-worthiness. Most brands solve retrieval but ignore the 8 selection factors entirely.

Retrieval operates as a binary condition. Either the engine can access your page, or it cannot. Selection functions as a competitive process where your page competes against 50+ candidates on every query.

If your robots.txt file blocks AI crawlers, no amount of content optimization will help. But if you are fully accessible and still lack citations, the problem is selection. We cover the retrieval side in depth in our guide on how AI models read websites.
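
The retrieval side is easy to verify with Python's standard library. This sketch parses the text of a robots.txt file and reports whether each AI crawler token may fetch a given URL. The crawler list uses the three tokens named in this article, the function name is our own, and you need to fetch the robots.txt text yourself. It checks robots.txt rules only, not rendering or crawl budget.

```python
from typing import Dict, Iterable
from urllib.robotparser import RobotFileParser

AI_CRAWLERS = ("GPTBot", "PerplexityBot", "Google-Extended")


def crawler_access(
    robots_txt: str,
    url: str,
    agents: Iterable[str] = AI_CRAWLERS,
) -> Dict[str, bool]:
    """Map each user-agent token to whether robots.txt lets it fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}
```

A result of `False` for any crawler means the problem is retrieval, and selection work on that page will not help until access is fixed.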

The two-stage model explains why brands with perfect technical SEO still get zero AI citations. They optimized the wrong stage. Separate these workflows: technical teams guarantee access, content teams engineer citation-worthiness.

How Do You Audit Your AI Citation Position?

Audit your citation position by scoring each of the 8 selection factors and identifying the weakest links between retrieval access and citation-worthiness.

You need a systematic approach rather than guesswork. Grade your content against the specific selection criteria to pinpoint exactly where you are losing visibility.

- Retrieval check: Are AI crawlers (GPTBot, PerplexityBot, Google-Extended) explicitly allowed in your robots.txt? Can your pages render without JavaScript?

- Authority check: How many referring domains do you have? Is your brand search volume growing? Are you listed on G2, Capterra, or Trustpilot?

- Structure check: Does your content answer core questions in the first 30%? Are answer capsules under 30 words?

- Entity check: What percentage of your text consists of named entities versus filler? Are entity names consistent across all pages?

- Freshness check: When was your top content last updated? Is anything older than 3 months?

- Provenance check: Do pages feature named authors, visible dates, and outbound citations to primary sources?

- Schema check: Is JSON-LD implemented for Article, Organization, Person, and FAQ?

- Consensus check: Do you appear in the cluster of 3-8 domains repeatedly cited for your core topic?

- Evidence check: Do your pages include statistics and extractable quotations?

These questions are a starting point. For a full picture, test actual AI prompts in your category and track which domains appear in the citations.
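
Once you have graded yourself, a small helper can surface the weakest factors. This sketch assumes a self-assessed score per factor on a scale you choose (0 to 5 here). The factor keys are our own labels for the eight factors, and ties are broken by the order the factors appear in this article, so higher-weighted factors come first.

```python
from typing import Dict, List

# The eight selection factors, in the order this article presents them.
FACTORS = [
    "domain_authority",
    "answer_first_structure",
    "entity_density",
    "content_freshness",
    "provenance_signals",
    "structured_data",
    "consensus_positioning",
    "statistics_and_quotations",
]


def weakest_factors(scores: Dict[str, float], n: int = 3) -> List[str]:
    """Return the `n` lowest-scoring factors, lowest first."""
    missing = [f for f in FACTORS if f not in scores]
    if missing:
        raise ValueError(f"missing scores for: {', '.join(missing)}")
    ranked = sorted(FACTORS, key=lambda f: (scores[f], FACTORS.index(f)))
    return ranked[:n]
```

The scores are only as good as the audit behind them, so treat the output as a prioritization aid, not a measurement.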

Frequently Asked Questions About How AI Engines Choose Sources

These are the most common questions we hear from brands trying to understand how AI engines choose sources and how citation mechanics work.

Does Google Ranking Help with AI Citations?

It depends on the platform. Google AI Overviews pulls 76.1% of its cited URLs from Google's top 10, but only 47% of ChatGPT-cited domains rank on Google, and for GPT-5.4 thinking mode, that drops to 25%.

Do not assume Google ranking translates to AI citation automatically. The algorithms optimize for completely different retrieval goals. Test your target queries directly in ChatGPT and Perplexity to see which domains actually win.

How Often Do AI Engines Update Their Source Preferences?

Frequently. 40-60% of cited sources rotate monthly, and content older than 3 months sees sharp citation drops.

This makes content freshness a hard requirement. Revisit your top-performing pages every 60 days to update statistics, verify links, and refresh the publication date.

Can Structured Data Alone Improve AI Citations?

Structured data amplifies existing quality signals but cannot compensate for weak content. GEOL.ai found it works best alongside entity clarity and provenance improvements.

Think of schema as a multiplier. If the content lacks substance, the impact is zero. Our guide on schema markup for AI visibility covers the technical implementation once your content meets the quality threshold.

Why Does the Same Query Cite Different Sources Each Time?

AI models use probabilistic generation, and the fan-out queries (the sub-queries an engine issues for a single prompt) vary per session. Only 27% of fan-out keywords stay consistent across searches.

This variability explains why consensus positioning matters. Being in the repeatedly cited cluster provides citation stability. You win by becoming an unavoidable authority on the topic, not by ranking for a single phrase.

What Is the Fastest Way to Improve AI Citation Rates?

Start with answer-first structure and entity density. They show the strongest evidence and don't require building new backlinks or domain authority.

Structure and entity fixes can be implemented in a week. Rewrite introductions, remove filler words, and state answers clearly before attempting any off-page optimization. Authority takes months. Content structure takes days.

Conclusion

The gap between retrieved and cited is where AI visibility is won or lost. Here are the four things that matter most:

- Retrieval is binary. Selection is competitive. Brands that conflate the two waste resources solving the wrong problem.

- Domain authority and answer-first structure carry the strongest evidence. Structured data and consensus positioning are emerging factors with growing support.

- Each platform selects differently. Only 11% of domains overlap between ChatGPT and Perplexity. Platform-specific optimization is not optional.

- The 8-factor framework replaces guesswork. Score yourself against each factor, find the weakest link, and fix it first.

Pick your lowest-scoring factor from the self-assessment above and read the corresponding deep-dive linked in the factor table. This article is the map. The deep-dives are the territory. We built both so you can stop guessing and start engineering your AI citations.
