AI Search Prompt Optimization: Why Buyers Tell AI More Than Google

AI search prompt optimization starts with understanding the 17x word gap. Learn why buyers share more with AI engines and how to optimize content for prompt-based discovery.

TL;DR

The average ChatGPT prompt is 60 words. Google searches average 3.4. That 17x gap means buyers share budgets, constraints, and context with AI that they'd never type into Google. Brands that optimize for this prompt behavior, not just keywords, win citations. This guide covers the psychology behind the shift, prompt anatomy, platform differences, and a practical framework for prompt-based content optimization.

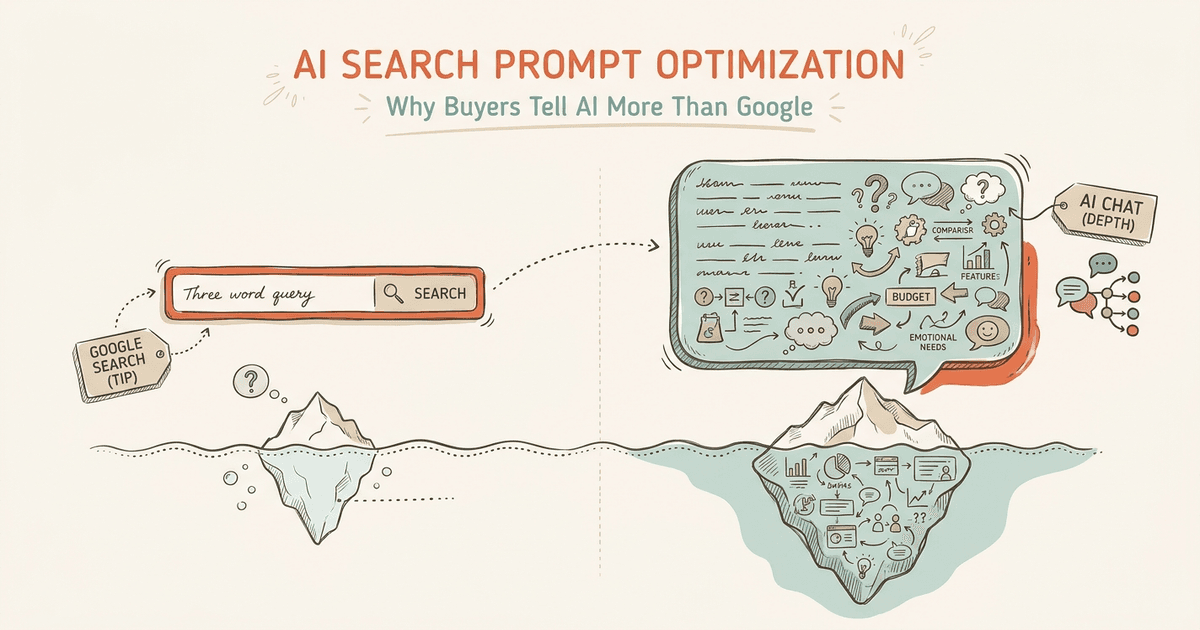

The average ChatGPT prompt is 60 words. The average Google search is 3.4. That 17x word gap isn't a format difference. It's a window into buyer intent that keyword research has never captured.

Today, 35% of US consumers use AI for product discovery, compared to 13.6% on traditional search. Yet most brands still optimize for short queries, missing the detailed prompts where real purchase decisions form.

This guide covers why buyers prompt differently, how prompt anatomy maps to content strategy, how platforms diverge, and a practical framework for mining and optimizing for real buyer prompts.

We track 100 to 200 prompts across 7 AI engines for dozens of brands. The patterns are clear. Winning the citation starts with understanding the prompt, not the keyword.

Why Do Buyers Talk to AI Differently Than Google?

Buyers share more with AI because conversational interfaces lower the disclosure barrier. People naturally include budgets, constraints, and context they'd never type into a search bar.

AI chat interfaces feel like talking to an advisor, not querying a database. A buyer won't Google "CRM under $50/seat for 20-person SaaS migrating from Salesforce." But they type exactly that into ChatGPT without hesitation. The chat box invites complexity. The search bar demands brevity.

Understanding what AI visibility actually means starts with recognizing this behavior change. Constraint disclosure is the new norm. Buyers freely include budget limits, team sizes, past experiences, and technical requirements. Traditional Google searches strip all of this context out. Users learned over two decades that specificity doesn't help on Google. AI inverts this entirely.

AI prompts map to decision stages, not search volume. A prompt like "Should I switch from HubSpot to Pipedrive if my team does mainly outbound?" reveals an exact position in the buyer journey. These prompts encode where the buyer sits in the funnel.

The intent breakdown backs this up. 93.7% of ChatGPT searches are informational. Only 0.1% are transactional. But that thin transactional slice carries outsized per-query value because each prompt contains full purchase context. Even informational prompts contain strong purchase signals. Buyers tell AI what they want, why they want it, and what stops them from buying today.

Understanding why buyers share more gives you the baseline. The next question: what does a buyer prompt look like when you break it apart?

What Does Prompt Anatomy Look Like Across Industries?


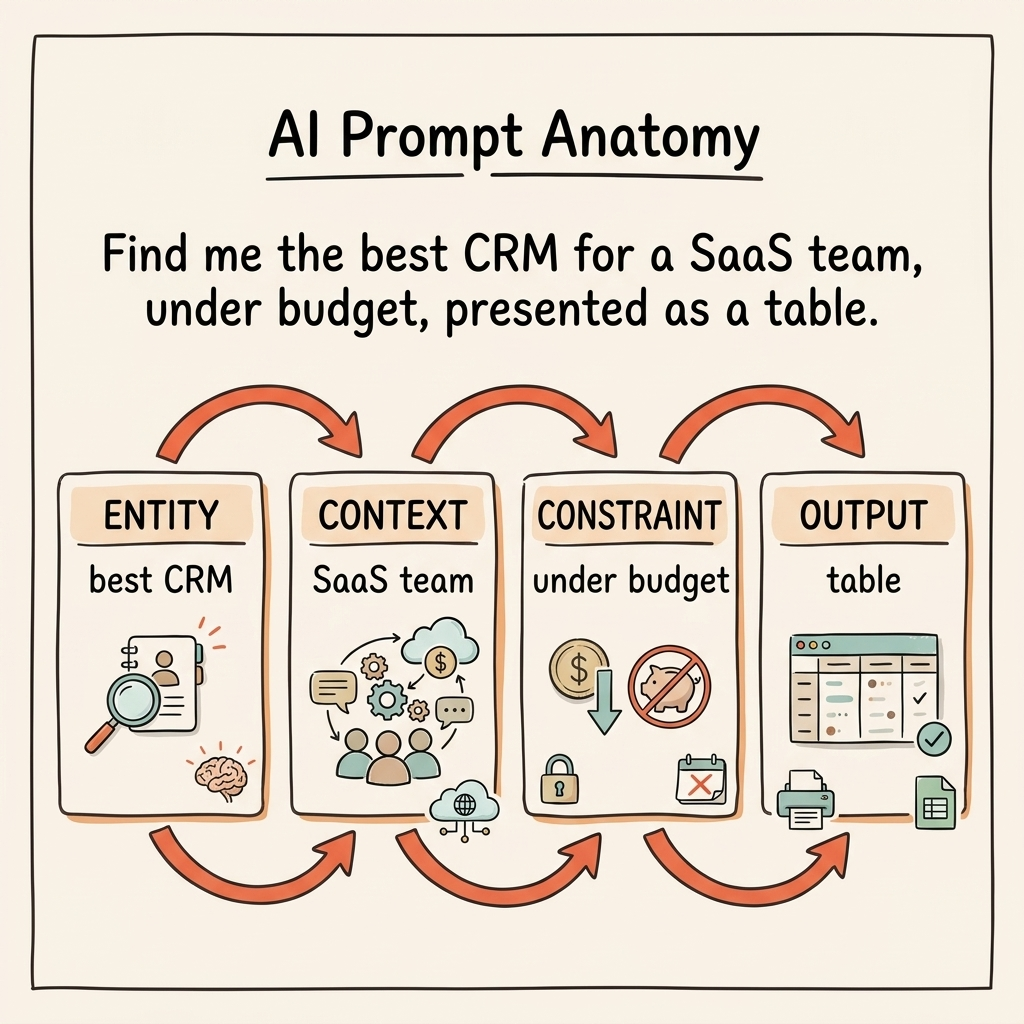

AI prompts share a four-part anatomy: entity, context, constraint, and output. They also fall into four prompt types, and each type calls for a different content format to earn the citation.

The four-part anatomy is predictable. It consists of a specific entity, situational context, defining constraints, and a desired output format. A real software query looks like this: "best CRM [entity] for a 20-person SaaS company [context] with Salesforce migration needs under $50/seat [constraints], give me a comparison table [desired output]." Miss one element, and the AI engine skips your content.
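One way to make the four-part anatomy concrete is to store each prompt as a small record. The sketch below is illustrative only: the `BuyerPrompt` class and its field names are our own assumptions, not a standard schema. It uses the CRM prompt from the paragraph above as input.

```python
from dataclasses import dataclass, field


@dataclass
class BuyerPrompt:
    """One buyer prompt split into the four anatomy parts (hypothetical schema)."""
    entity: str                                           # what the buyer is looking for
    context: str                                          # the buyer's situation
    constraints: list[str] = field(default_factory=list)  # hard limits such as budget
    desired_output: str = ""                              # the format the buyer asks for

    def missing_parts(self) -> list[str]:
        """Return the names of any anatomy parts that are empty."""
        parts = {
            "entity": self.entity,
            "context": self.context,
            "constraints": self.constraints,
            "desired_output": self.desired_output,
        }
        return [name for name, value in parts.items() if not value]


crm_prompt = BuyerPrompt(
    entity="best CRM",
    context="20-person SaaS company",
    constraints=["Salesforce migration needs", "under $50/seat"],
    desired_output="comparison table",
)
print(crm_prompt.missing_parts())  # prints [] because all four parts are filled
```

Tagging real prompts this way makes it easy to see which parts your existing pages never address.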

Understanding how AI engines choose sources relies on mapping your content to this anatomy. We see four main prompt types across industries, and each dictates how you build your pages.

Comparison prompts ask "X vs Y for [specific use case]." In SaaS: "HubSpot vs Salesforce for a startup." In ecommerce: "Shopify vs WooCommerce for a physical retail hybrid." In professional services: "Deloitte vs McKinsey for supply chain consulting." These require structured, side-by-side comparison content.

Recommendation prompts ask "What should I use for [problem]." A SaaS buyer wants "What email tool is best for cold outreach." A shopper asks "What running shoe is best for flat feet under $100." A services buyer needs "What type of lawyer for trademark infringement." The AI looks for curated, ranked lists with clear criteria.

Validation prompts read "Is [brand] good for [need]." For SaaS: "Is Monday.com good for agile sprints." Ecommerce: "Is the Dyson Airwrap worth it for fine hair." Services: "Is LegalZoom good enough for LLC formation." You need real metrics and strong E-E-A-T signals here.

Exploration prompts ask "How do I [goal] with [constraint]." SaaS: "How do I migrate from Mailchimp to Klaviyo with 50k subscribers." Ecommerce: "How do I style a midi skirt for a petite frame." Services: "How do I prepare for a tax audit as a freelancer." The AI seeks sequential, logical steps.

| Prompt Type | Example Pattern | Best Content Format | Key Structural Elements |
|---|---|---|---|
| Comparison | "X vs Y for [use case]" | Side-by-side comparison page | Feature table, use-case criteria, verdict |
| Recommendation | "What should I use for [problem]" | Curated guide with criteria | Criteria framework, ranked options, tradeoffs |
| Validation | "Is [brand] good for [need]" | In-depth review / case study | Real metrics, E-E-A-T signals, alternatives |
| Exploration | "How do I [goal] with [constraint]" | Step-by-step guide | Sequential steps, constraint-aware advice |
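If you want to sort a batch of collected prompts into these four types, a rough first pass can be done with pattern matching. The sketch below is a crude heuristic under our own assumptions: the regexes and the `classify_prompt` helper are hypothetical, loosely derived from the example patterns in the table, and real prompts will need manual review.

```python
import re

# (type, pattern, best content format). Formats come from the table above;
# the regexes are illustrative assumptions, not a tested taxonomy.
PROMPT_TYPES = [
    ("Comparison", re.compile(r"\bvs\.?\b", re.IGNORECASE),
     "Side-by-side comparison page"),
    ("Validation", re.compile(r"^is\b.*\b(good|worth)\b", re.IGNORECASE),
     "In-depth review / case study"),
    ("Exploration", re.compile(r"^how do i\b", re.IGNORECASE),
     "Step-by-step guide"),
    ("Recommendation", re.compile(r"^what\b.*\b(best|should)\b", re.IGNORECASE),
     "Curated guide with criteria"),
]


def classify_prompt(prompt):
    """Return (prompt_type, content_format) for the first matching pattern, else None."""
    text = prompt.strip()
    for name, pattern, content_format in PROMPT_TYPES:
        if pattern.search(text):
            return name, content_format
    return None  # unmatched prompts should be reviewed by hand


print(classify_prompt("HubSpot vs Salesforce for a startup"))
print(classify_prompt("How do I prepare for a tax audit as a freelancer"))
```

Prompts that return `None` are often the most interesting ones, because they don't fit a template you already write for.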

These prompt patterns hold across verticals. But they surface differently depending on which AI platform the buyer uses.

How Does the Same Prompt Behave Differently Across AI Platforms?

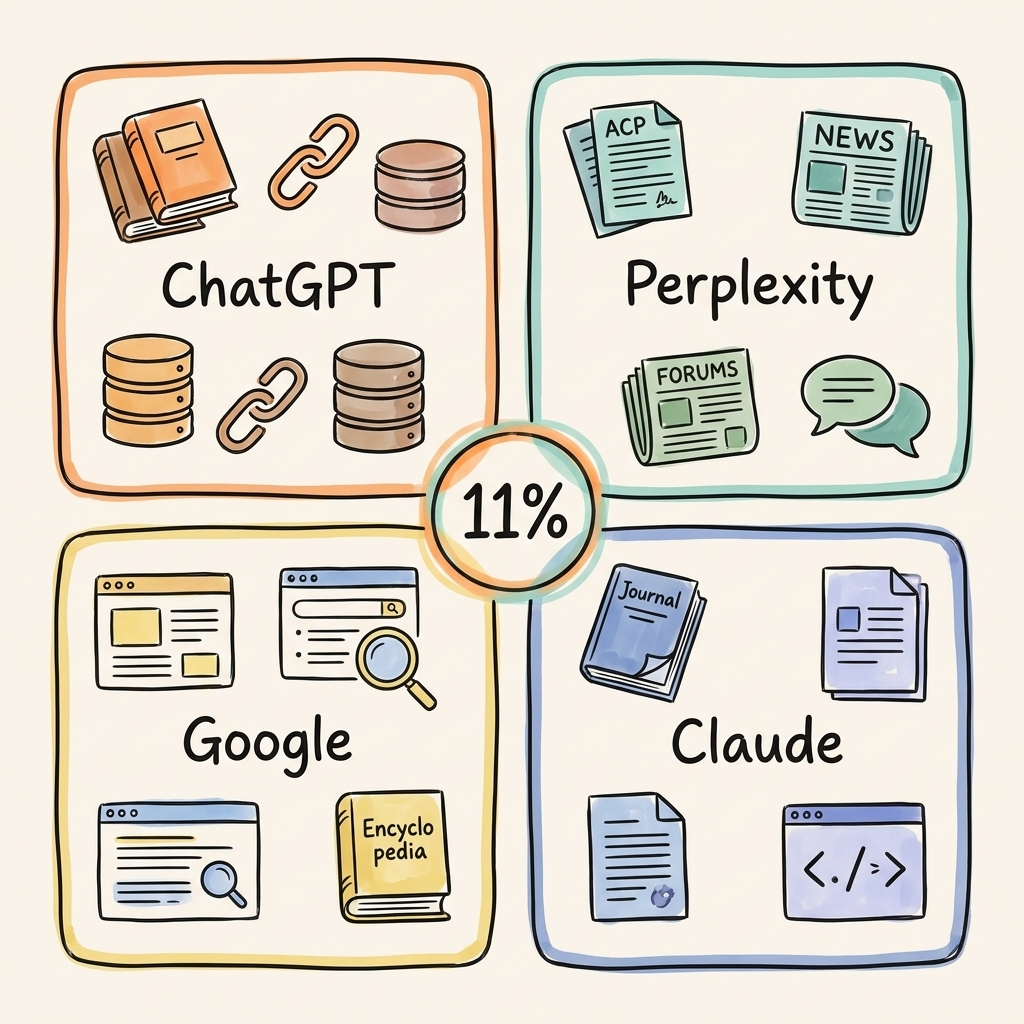

Only 11% of domains cited by ChatGPT also appear in Perplexity results. A single-platform AI search prompt optimization strategy leaves 89% of the landscape uncovered.

This divergence stems from architectural differences. Each platform uses different search indexes and authority signals. We analyzed these patterns across 680M citations. The results make one thing clear: you cannot build one page and expect it to work across every engine.
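To check this kind of overlap for your own prompt set, one simple definition is the share of domains cited by one engine that also appear in another engine's citations. The sketch below assumes that definition (the 11% figure may have been computed differently) and uses made-up domain lists. The `citation_overlap` function name is ours.

```python
def citation_overlap(cited_by_a, cited_by_b):
    """Share of engine A's cited domains that engine B also cites (0.0 to 1.0)."""
    a, b = set(cited_by_a), set(cited_by_b)
    if not a:
        return 0.0
    return len(a & b) / len(a)


# Hypothetical domains collected from the same prompts on two engines.
chatgpt_domains = {
    "wikipedia.org", "example-crm.com", "example-reviews.com", "example-news.com",
}
perplexity_domains = {"reddit.com", "example-reviews.com", "example-forum.com"}

share = citation_overlap(chatgpt_domains, perplexity_domains)
print(f"{share:.0%} of ChatGPT-cited domains also appear in Perplexity")  # 25%
```

Note that the measure is not symmetric: swapping the two arguments gives the share of Perplexity's domains that ChatGPT also cites.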

ChatGPT is conversational and multi-turn. It relies on the Bing index plus its training data. It heavily favors Wikipedia (47.9% of citations) and established authority domains. Users ask longer, more nuanced prompts here. Adapting for this platform requires a specific approach, which we cover in our guide on how to get recommended by ChatGPT.

Perplexity operates differently. It's the closest to traditional search but delivers real-time citations. It favors Reddit (46.7% of citations) and prioritizes fresh content over older pages. It has the highest citation density per response at 21.87 on average. This changes everything about how to get cited by Perplexity.

Google AI Mode blends both paradigms. It stays keyword-influenced but expands toward natural language. It draws from Google's own index, so it rewards brands already ranking in traditional search.

Claude handles longer, analytical prompts. It prefers structured content over casual prose and uses Brave Search for retrieval. It's less citation-heavy and more synthesis-focused than its peers.

Knowing how platforms differ matters. But the real competitive advantage comes from knowing what your actual buyers ask these platforms.

How Do You Move From Keyword Research to Prompt Research?

The best prompt research comes from your own customers. Sales call transcripts, support tickets, and chat logs contain the exact language buyers use when asking AI for recommendations.

Mine internal sources first. Sales calls, support tickets, chat logs, and surveys contain the natural-language questions your buyers ask daily. These are the same questions they type into AI engines. Most brands sit on this data and don't use it. Converting sales calls to content fixes this gap.
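A quick way to start mining is to pull every question out of a transcript. The sketch below is a minimal example under simple assumptions: it treats any sentence ending in a question mark as a buyer question, and the `extract_questions` helper and sample transcript are hypothetical. Real transcripts need speaker filtering and cleanup first.

```python
import re


def extract_questions(transcript):
    """Return the sentences in a transcript that end with a question mark."""
    # Split on whitespace that follows sentence-ending punctuation.
    sentences = re.split(r"(?<=[.?!])\s+", transcript.strip())
    return [s for s in sentences if s.endswith("?")]


sample = (
    "Thanks for the demo. What's the best payroll option for a 50-person agency? "
    "We use Gusto today. Does it handle international contractors?"
)
for question in extract_questions(sample):
    print(question)
```

The output is a raw list of candidate prompts you can then tag by type and map against your existing pages.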

Map those prompts to content gaps. For each buyer question, ask: does your content actually answer it? Don't check if the page mentions the keyword. Check if it answers the full question with the context a buyer would include.

Here's a concrete example. A buyer asks: "What's the best payroll software for a 50-person agency using Gusto but needing better international contractor support?" A keyword-optimized page targeting "best payroll software" fails this prompt entirely. A prompt-optimized page addresses international contractor support, compares Gusto to alternatives, and solves the specific problem. The gap between these two pages is the gap between being cited and being invisible.

Run a prompt-content fit audit. Check whether your content structure matches how AI engines break pages into retrievable chunks. 44.2% of citations come from the first 30% of content. If your best answer sits in paragraph eight, AI engines are far less likely to cite it. This is a structural problem, not a quality problem. It's a core part of the content quality gap in AI search.
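Part of this audit can be automated. The sketch below checks how deep into a page the key answer terms first appear and flags pages where they land after the first 30%. It is a rough proxy under our own assumptions: it measures depth by character position, matches terms as plain substrings, and the function names are hypothetical.

```python
def answer_depth(content, answer_terms):
    """Return how far into the content (0.0 to 1.0) the last of the answer terms
    first appears, or None if any term is missing."""
    text = content.lower()
    if not text or not answer_terms:
        return None
    positions = [text.find(term.lower()) for term in answer_terms]
    if min(positions) < 0:
        return None  # at least one term never appears
    return max(positions) / len(text)


def passes_fit_audit(content, answer_terms, threshold=0.30):
    """True if every answer term first appears within the first `threshold` of the page."""
    depth = answer_depth(content, answer_terms)
    return depth is not None and depth <= threshold


answer = "Deel handles international contractor payroll. "
filler = "Filler sentence about the company history. " * 10

print(passes_fit_audit(answer + filler, ["international contractor"]))  # True
print(passes_fit_audit(filler + answer, ["international contractor"]))  # False
```

Both sample pages contain the same answer. Only its position changes, which is the structural problem this audit is meant to surface.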

Ongoing prompt discovery isn't optional. Track what buyers ask across AI platforms consistently. Prompt language evolves as AI interfaces mature and buyer behavior shifts. The brands doing this well track 100 to 200 prompts across platforms on an ongoing basis.
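Tracking can start as a simple log of which prompts produced a citation on which engine. The sketch below shows one hedged way to summarize that log as a citation rate per engine. The record format, the sample data, and the `citation_rate_by_engine` helper are assumptions for illustration, not a description of any particular tracking tool.

```python
from collections import defaultdict


def citation_rate_by_engine(results):
    """Given (engine, was_cited) pairs, return the share of tracked prompts
    on each engine where the brand was cited."""
    totals = defaultdict(int)
    cited = defaultdict(int)
    for engine, was_cited in results:
        totals[engine] += 1
        cited[engine] += int(was_cited)
    return {engine: cited[engine] / totals[engine] for engine in totals}


# Hypothetical results: one entry per tracked prompt per engine.
tracked = [
    ("ChatGPT", True),
    ("ChatGPT", False),
    ("Perplexity", False),
    ("Perplexity", False),
]
print(citation_rate_by_engine(tracked))
```

Re-running the same prompt set on a schedule turns these rates into a trend line rather than a one-off snapshot.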

Once you know what buyers ask, the next step is making your content structurally optimized to earn the citation.

How Should You Optimize Content for Prompt-Based Discovery?

Prompt-optimized content leads with direct answers, maintains high entity density, matches the constraint language buyers use, and structures information for AI extraction.

Start with answer-first architecture. 44.2% of AI citations pull from the first 30% of content. Lead every section with a direct answer. State the answer, provide evidence, add nuance, then move to the next question. Don't waste space on long introductions.

Entity density determines visibility. Highly cited content averages 20.6% entity density, nearly 3x typical English text. Name specific brands, tools, people, and products. AI models skip vague language. Instead of "a leading solution," write "Cintra's prompt tracking across 7 AI engines." Models need specific nouns to match against their knowledge graphs. This is core to how AI models read websites.
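You can approximate entity density for your own pages. The sketch below assumes one possible definition: the share of word tokens that belong to a known entity name. How the 20.6% figure was measured is not stated here, so treat the numbers this produces as a relative signal only. The entity list and the `entity_density` function are hypothetical.

```python
import re

TOKEN_PATTERN = re.compile(r"[A-Za-z0-9']+")


def entity_density(text, entities):
    """Share of word tokens in `text` that are part of a listed entity name."""
    tokens = TOKEN_PATTERN.findall(text.lower())
    if not tokens:
        return 0.0
    covered = [False] * len(tokens)
    for entity in entities:
        entity_tokens = TOKEN_PATTERN.findall(entity.lower())
        n = len(entity_tokens)
        if n == 0:
            continue
        # Mark every token span that matches the entity name exactly.
        for i in range(len(tokens) - n + 1):
            if tokens[i:i + n] == entity_tokens:
                for j in range(i, i + n):
                    covered[j] = True
    return sum(covered) / len(tokens)


known_entities = ["HubSpot", "Salesforce"]  # assumed list; supply your own
print(entity_density("a leading solution for teams", known_entities))
print(entity_density("HubSpot vs Salesforce for a startup", known_entities))
```

In practice the entity list would come from a named-entity recognizer or a curated list of brands, tools, and products in your category.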

Constraint matching is critical. Include the exact language buyers use: budget ranges, team sizes, use cases, technical requirements. If a buyer asks for "CRM under $50/seat for a remote team," your content needs "$50/seat" and "remote team" to trigger a match. Map the four-part prompt anatomy directly to your content elements.
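A basic constraint check is easy to script. The sketch below lists which constraint phrases from a buyer prompt never appear on a page. It is a simplification under our own assumptions: it uses case-insensitive substring matching, while AI engines match semantically rather than literally. The `missing_constraints` helper is hypothetical.

```python
def missing_constraints(content, constraints):
    """Return the constraint phrases that do not appear in the content."""
    # Lowercase and collapse whitespace so line breaks don't hide a match.
    text = " ".join(content.lower().split())
    return [c for c in constraints if " ".join(c.lower().split()) not in text]


buyer_constraints = ["$50/seat", "remote team"]

specific_page = "Our CRM starts at $50/seat and suits a remote team of any size."
generic_page = "Our CRM is affordable and flexible."

print(missing_constraints(specific_page, buyer_constraints))  # []
print(missing_constraints(generic_page, buyer_constraints))   # ['$50/seat', 'remote team']
```

An empty list does not guarantee a citation. It only confirms the page speaks the buyer's constraint language at all.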

Understand the near zero-sum dynamic. AI gives one synthesized answer, not ten links. If your competitors are cited in that answer and you aren't, you don't exist for that buyer. This isn't a ranking game. It's a citation game with very few winners.

The data supports this approach. Content optimized with generative engine optimization (GEO) techniques saw a 47% uplift in citation frequency in controlled academic studies (Princeton, Georgia Tech, Allen Institute for AI). And with only 7.4% of Fortune 500 companies having implemented llms.txt, the opportunity for early movers remains wide open. Answer engine optimization principles guide the execution.

Frequently Asked Questions About AI Search Prompt Optimization

These are the questions we hear most from brands exploring AI search prompt optimization.

How do I find what prompts people use about my brand?

Start with your own data. Sales call transcripts, support tickets, and chat logs contain the natural-language questions buyers type into AI. Tools exist for tracking AI mentions, but the richest source is always internal. Track 100 to 200 prompts across 7 engines to see where your brand appears and where it doesn't.

Should I optimize for keywords or prompts?

Both. Keywords still drive traditional search traffic. Prompts capture the contextual purchase intent that AI engines use to generate recommendations. The shift isn't replacement; it's expansion. Keyword research tells you what buyers search for. Prompt research tells you what they actually need. The best content strategy covers both. Our AI search statistics page has more supporting data.

How does prompt length affect which content gets cited?

Longer prompts contain more constraints. AI engines need more specific, structured content to match them. Generic pages get filtered out. A 60-word prompt might specify industry, team size, budget, use case, and desired output format. Content addressing only one dimension loses to content addressing all five.

Can I track AI prompt performance?

Yes. Prompt tracking monitors which buyer questions trigger your brand across AI platforms and which miss you entirely. We track brand citations across ChatGPT, Perplexity, Google AI Overviews, Claude, Grok, and Reddit to map visibility gaps and rebuild content around them.

How is prompt optimization different from regular AEO?

AEO optimizes content structure for AI retrieval. AI search prompt optimization goes upstream: it optimizes for the specific language and intent patterns buyers use when asking AI. Think of it as the research layer that feeds AEO execution. You need both. Learn more about the broader framework in our answer engine optimization guide.

Conclusion

The 17x word gap between AI prompts and Google searches isn't a quirk. It's a structural shift in how purchase intent surfaces.

Prompt anatomy follows consistent patterns (entity + context + constraint + output) that map directly to content format requirements. Each AI platform cites differently, with only 11% domain overlap between ChatGPT and Perplexity. And the best prompt intelligence comes from your own customers, not tools alone.

Your next step: Pull 10 recent sales call transcripts. Highlight every question a prospect asked. Those questions are the prompts buyers type into AI right now.
